Unless you've been living in a cave for the past year, you're aware that generative AI is the most disruptive technology since the birth of the smartphone. But what, exactly, is it?

Generative AI is a type of artificial intelligence system or, to be more precise, a machine learning model that generates content in response to prompts, usually written as plain text. The content generated could be text, images (including logo designs), audio, video, presentations, or code.

Even though generative AI has only recently taken the world by storm, it's not a new technology. Its roots go back to the 1960s, when it first appeared in chatbots such as ELIZA. In 2014, it became a major focus of machine learning (ML) thanks to the introduction of generative adversarial networks (GANs). GANs are a type of ML model that made it possible for generative AI to create remarkably (and sometimes worryingly) authentic images, videos, and audio of real people, hence the rise of deepfakes.

AI is a field of computer science that aims to create intelligent machines or systems that can perform tasks that typically require human intelligence.

Generative AI is a subfield of AI focused on creating systems capable of generating new content, such as images, text, music, or video.

This includes emerging technologies such as text-to-video AI and other innovations that further expand the creative horizons of AI.

What are some examples of generative AI models?

Although AI technology has been around for some time, in 2022 it was suddenly put in the hands of consumers with text-to-image models such as Stable Diffusion, DALL-E 2, and Midjourney.

Companies now experiment with AI logo generators to quickly test unique branding concepts and design directions.

These text-to-image models were followed by ChatGPT, a chatbot built on a large language model (LLM), which captivated the masses with its ability to generate very convincing text in response to almost any prompt. The AI bug spread like wildfire, and other LLMs and LLM-powered chatbots, such as Meta's LLaMA and Google's Bard (initially built on Google's LaMDA model), quickly followed. The momentum soon spread from text to video production, with platforms like LTX Studio introducing high-fidelity video with synchronized audio.

Another notable example of generative AI is GitHub Copilot. It is a coding assistant originally powered by OpenAI Codex, a model trained on a large body of public code from GitHub, and it can convert natural-language descriptions into software code.

While artificial intelligence (AI) focuses on creating machines that can emulate human intelligence, machine learning (ML) focuses on training machines on data to improve their performance on specific tasks without being explicitly programmed.

How does generative AI work?

As impressive as they may seem, generative AI models are not real AI. Yet ChatGPT has reportedly passed law school exams and performed at or near the passing threshold on US medical licensing exams, and some claim it has passed the Turing test. This has led people to credit generative AI models with an intelligence they don’t possess and to trust them with powers they don’t have. That could lead to some very poor decisions if people don’t calm down and take the time to understand how these tools work. So let’s demythologize these generative AI models.

Neural networks

Generative AI models use neural networks to identify patterns within existing data and generate new, original content. Neural networks are loosely modeled on structures we see in the human brain. Put a brain under a microscope, and you'll see an enormous number of nerve cells called neurons. These connect to one another in vast networks and pass signals along those connections, and the connections strengthen or weaken as we learn. An artificial neural network imitates this in simplified form: simple units are linked by weighted connections, and training adjusts those weights until the network recognizes patterns in its input. These networks can learn and ultimately produce what appears to be intelligent behavior.

The study of neural nets began in the 1940s with the notion that they could be simulated by electrical circuits. Today, neural networks are implemented in software and loosely model structures found in the brain. It's only in this century that they have really begun to work, and the reason is quite straightforward. To train a neural network, you need two things: lots of data and plenty of computing power. Both have become abundantly available in the last 20 years. An AI image enhancer is a vivid example of this in action: the neural network learns from millions of pairs of low- and high-resolution images until it can reconstruct plausible fine detail from a blurry input on its own.
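To make "learning from data" concrete, here is a minimal sketch of a single artificial neuron in plain Python. A single sigmoid neuron trained this way is equivalent to logistic regression. It is a toy, not how production networks are built. The learning rate, the epoch count, and the logical-OR training data are illustrative assumptions. The point is that nobody programs the OR rule in: gradient descent adjusts the weights from examples until the neuron reproduces it.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def train_neuron(samples, epochs=2000, lr=0.5):
    """Train one artificial neuron with gradient descent.

    samples: list of ((x1, x2), target) pairs with targets 0 or 1.
    epochs and lr (learning rate) are illustrative values, not tuned ones.
    Returns the learned (w1, w2, bias).
    """
    w1, w2, b = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            # Forward pass: weighted sum of the inputs, then the activation.
            y = sigmoid(w1 * x1 + w2 * x2 + b)
            # Gradient of the cross-entropy loss with respect to the weighted sum.
            error = y - target
            # Nudge each parameter a little against its gradient.
            w1 -= lr * error * x1
            w2 -= lr * error * x2
            b -= lr * error
    return w1, w2, b


def predict(params, x1, x2):
    """Return 1 if the neuron's output is at least 0.5, else 0."""
    w1, w2, b = params
    return 1 if sigmoid(w1 * x1 + w2 * x2 + b) >= 0.5 else 0


# Training data for logical OR: only examples, no hand-written rule.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
params = train_neuron(OR_DATA)
print([predict(params, x1, x2) for (x1, x2), _ in OR_DATA])  # [0, 1, 1, 1]
```

Feeding the same function the AND truth table instead produces a neuron that computes AND. The data, not the code, determines the behavior. A real network stacks many such units in layers so it can learn patterns a single neuron can't.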

Parameters

A word you’ll hear a lot in connection with neural networks is ‘parameters’. A parameter is a value the network learns during training, chiefly the weights on the connections between neurons (plus a bias for each neuron). When people in the AI world talk about the number of parameters in a neural network, they’re referring to its scale. The impressive thing about large language models (LLMs) is that their performance tends to improve as you add more parameters (along with more training data and computing power). GPT-3, for example, has 175 billion parameters, while GPT-4 reportedly has around 1 trillion; OpenAI hasn’t disclosed the exact figure.
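Counting parameters is simple arithmetic. The sketch below assumes a plain fully connected network rather than a transformer, with a hypothetical layer layout:

- 784 inputs (a 28×28 image)
- one hidden layer of 128 neurons
- 10 outputs

Each connection contributes one weight, and each non-input neuron contributes one bias.

```python
def count_dense_parameters(layer_sizes):
    """Count learnable parameters in a fully connected (dense) network.

    layer_sizes lists the number of neurons per layer, input layer first.
    Every neuron in one layer connects to every neuron in the next (one weight
    per connection), and every non-input neuron has one bias.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # weights between the two layers
        total += n_out         # one bias per neuron in the receiving layer
    return total


print(count_dense_parameters([784, 128, 10]))  # 101770
print(count_dense_parameters([784, 256, 10]))  # 203530
```

Doubling the hidden layer roughly doubles the parameter count. Headline figures like GPT-3's 175 billion come from the same kind of bookkeeping, summed over the far larger weight matrices inside a transformer.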

Transformers

Transformers are another thing that played a big role in generative AI becoming mainstream. GPT stands for Generative Pretrained Transformer. Sorry to disappoint you, but that doesn’t refer to the heroic Autobots of the media franchise. Most LLMs use a specific neural network architecture called a transformer, which has features that make it highly suited to language processing. A transformer can read vast amounts of text, identify patterns in how words and expressions relate to each other (using a mechanism called attention), and then predict which words should come next. Transformers made it practical to train LLMs on huge amounts of raw, unlabeled text in a self-supervised fashion: the model learns by predicting the next word, so no human labeling is required. After this pretraining, an LLM can often pick up a new task from only a few labeled examples.
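The "identify patterns in how words relate to each other" part is handled by attention. Below is a minimal, single-head sketch of scaled dot-product attention, the formulation used in the original transformer design, written in plain Python lists. Real models learn the query, key, and value vectors from token embeddings, use many attention heads and layers, and run on GPUs. The tiny two-dimensional vectors here are made-up values for illustration.

```python
import math


def softmax(scores):
    """Turn a list of scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention on plain lists.

    queries, keys: one vector per token, each of length d.
    values: one vector per token.
    Returns (outputs, weights). Each output is a weighted average of the value
    vectors, weighted by how well that token's query matches every key.
    """
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


tokens = ["the", "cat", "sat"]
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # made-up 2-dimensional embeddings
outputs, weights = attention(vectors, vectors, vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how much one token "attends to" every token in the sequence. Stacking many such layers is what lets a transformer capture relationships between words far apart in a text.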

The role of web scraping in generative AI

I mentioned earlier that there are two reasons neural networks took off this century: computing power and lots of data. Let’s home in now on the ‘lots of data’ part.

Web scraping, or web data extraction as it is sometimes called, was fundamental in acquiring the vast quantity of data required to train generative AI models. Virtually all large language models are trained largely on scraped web data. GPT-3, for example, was trained mostly on a filtered version of Common Crawl (about 570 gigabytes of text collected from the web), alongside other sources such as WebText2, two book corpora, and English Wikipedia. Even image models are trained on data scraped from the web.

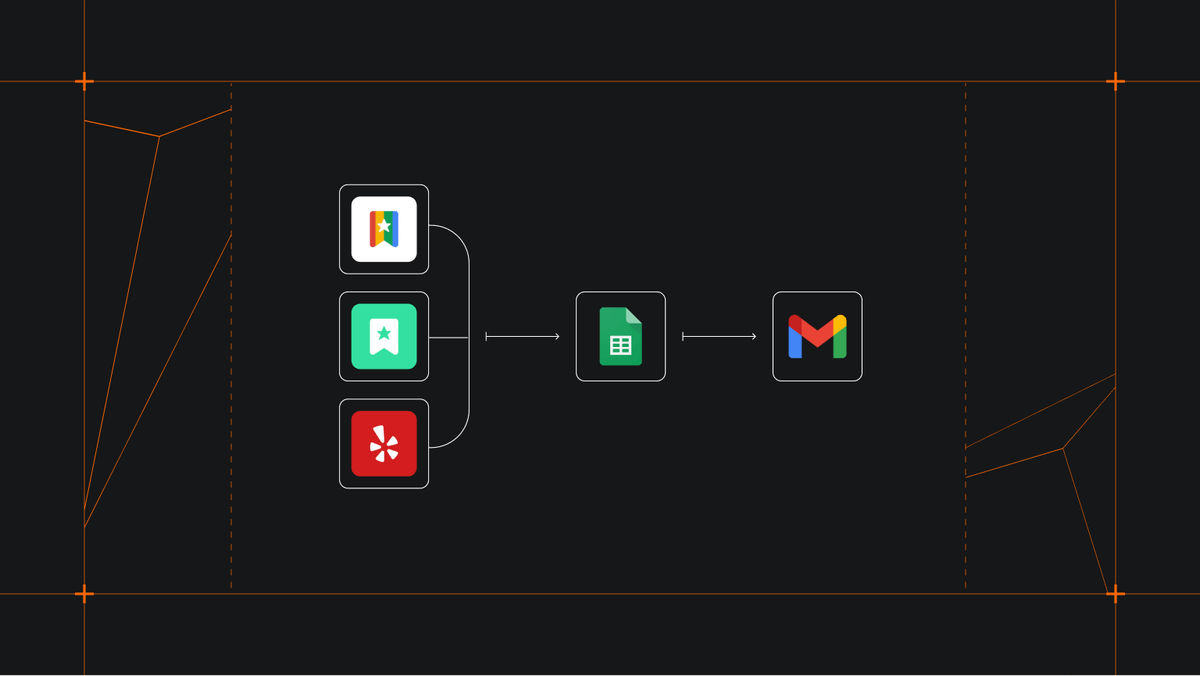
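At its simplest, scraping a page for training text means fetching the HTML and keeping only the human-readable text. The sketch below covers the extraction half using Python's built-in `html.parser`. It deliberately leaves out fetching (for example with `urllib.request`), crawling, JavaScript rendering, and politeness rules such as rate limiting, which are the parts a dedicated scraping platform typically takes care of. The sample page is made up.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text from an HTML document, skipping scripts and styles."""

    SKIP_TAGS = {"script", "style", "noscript"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0  # > 0 while inside a tag whose content isn't visible text
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def html_to_text(html: str) -> str:
    """Return the visible text of an HTML string as one space-separated line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


page = """<html><head><title>Demo</title><style>p {color: red}</style></head>
<body><h1>Hello</h1><p>Generative AI needs <b>lots</b> of data.</p>
<script>console.log("ignore me")</script></body></html>"""
print(html_to_text(page))  # Demo Hello Generative AI needs lots of data.
```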

Web scraping isn’t a one-and-done activity when it comes to building generative AI models. You need to continuously feed GenAI models relevant, up-to-date information and fine-tune them to improve and customize them. This is where integrating web scrapers with other powerful tools for generative AI comes into play.
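One simple way to keep feeding a model fresh data, without reprocessing everything on every run, is to fingerprint each scraped page. You then pass on only the pages whose content is new or has changed since the last crawl. This is a minimal sketch under that assumption. The URLs and page text are hypothetical, and a real setup would persist the fingerprints between runs, for example in a database or key-value store.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Hash page text after collapsing whitespace, so formatting-only changes are ignored."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_updates(pages, previous):
    """Compare freshly scraped pages with fingerprints from the previous crawl.

    pages: {url: text} from the latest crawl.
    previous: {url: fingerprint} saved after the last crawl.
    Returns (changed_urls, updated_fingerprints).
    """
    current = dict(previous)
    changed = []
    for url, text in pages.items():
        fp = fingerprint(text)
        if previous.get(url) != fp:
            changed.append(url)
        current[url] = fp
    return changed, current


# First crawl: every page is new.
crawl_1 = {"https://example.com/a": "Price: $10", "https://example.com/b": "About us"}
changed, seen = find_updates(crawl_1, {})
print(changed)  # ['https://example.com/a', 'https://example.com/b']

# Second crawl: only page a really changed, so only it needs to be re-processed.
crawl_2 = {"https://example.com/a": "Price: $12", "https://example.com/b": "About   us"}
changed, seen = find_updates(crawl_2, seen)
print(changed)  # ['https://example.com/a']
```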

One such tool is LangChain, which has rapidly become the library of choice for building on top of GenAI models. It allows you to invoke LLMs from different vendors, handle variable injection into prompts, and do few-shot prompting. For many projects, that makes it a better solution than using each vendor’s API directly. You can also integrate LangChain with your web scrapers to customize ChatGPT responses with the data they collect.
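To illustrate the ideas involved, the snippet below injects scraped text and few-shot examples into a prompt template using Python's built-in `string.Template`. It is a minimal sketch and does not use LangChain's actual API. The template wording, the 2,000-character context limit, and the sample data are assumptions. Sending the finished prompt to a model is left out.

```python
from string import Template

# A prompt template with named slots (variables) that get filled in at run time.
PROMPT = Template(
    "Answer the question using only the context scraped from $source.\n\n"
    "$examples\n\n"
    "Context:\n$context\n\n"
    "Question: $question\nAnswer:"
)


def format_examples(examples):
    """Render few-shot (question, answer) pairs that show the model the expected style."""
    return "\n\n".join(f"Question: {q}\nAnswer: {a}" for q, a in examples)


def build_prompt(source, context, question, examples, max_context_chars=2000):
    """Inject scraped text, few-shot examples, and the user's question into the template."""
    return PROMPT.substitute(
        source=source,
        examples=format_examples(examples),
        context=context[:max_context_chars],  # crude guard against overflowing the context window
        question=question,
    )


scraped_text = "Opening hours: Monday to Friday, 9 am to 6 pm. Closed on weekends."
examples = [("Is there parking?", "The context doesn't say.")]
prompt = build_prompt("example.com/contact", scraped_text, "Are you open on Saturday?", examples)
print(prompt)  # send this string to the LLM of your choice
```

The few-shot example teaches the model to admit when the scraped context doesn't contain an answer. Grounding answers in fresh scraped data is the main reason to wire a scraper into this step.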

How do you build a generative AI model?

Web scraping is just one of the key parts of building generative AI solutions. The building process has three main steps: prototyping, development, and deployment. Below are the processes within each step.

| Prototyping | Development | Deployment |
|---|---|---|
| Data collection | Data preparation and coding | Pipelining |
| Preprocessing | Architecture creation | Model configuration |
| Selecting GenAI algorithms | Error handling | Testing and debugging |
| Prototype modeling | Model optimization | Setting up infrastructure |
| Result analysis | Development environment setup | Monitoring |
| | | Scaling |

Step 1. Prototyping

1. Data collection

Data collection involves gathering relevant datasets to provide the information required for training the generative AI model. The data should be diverse, representative, and aligned with the project's objectives.

2. Preprocessing

The preprocessing step involves cleaning the data by removing duplicates, handling missing values, and converting it into a standardized format. Data transformation techniques such as normalization and feature scaling are applied to ensure that the data is suitable for training.
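Here's a minimal sketch of those cleaning steps applied to scraped records, in plain Python. The field names (`text`, `score`) are illustrative assumptions, and so are the specific choices:

- dropping rows with no text
- filling missing numbers with the mean
- min-max scaling numbers to the range 0 to 1

Real pipelines usually lean on a data library such as pandas.

```python
def preprocess(records, text_field="text", number_field="score"):
    """Deduplicate, handle missing values, and normalize scraped records."""
    # 1. Remove exact duplicates, keeping the first occurrence.
    seen, unique = set(), []
    for record in records:
        key = tuple(sorted(record.items()))
        if key not in seen:
            seen.add(key)
            unique.append(record)

    # 2. Handle missing values: drop rows with no text, fill missing numbers with the mean.
    unique = [r for r in unique if r.get(text_field)]
    known = [r[number_field] for r in unique if r.get(number_field) is not None]
    mean = sum(known) / len(known) if known else 0.0
    values = [r[number_field] if r.get(number_field) is not None else mean for r in unique]

    # 3. Standardize: collapse whitespace, lowercase text, min-max scale numbers to [0, 1].
    lo, hi = (min(values), max(values)) if values else (0.0, 0.0)
    span = (hi - lo) or 1.0  # avoid division by zero when all values are equal
    return [
        {text_field: " ".join(r[text_field].split()).lower(), number_field: (v - lo) / span}
        for r, v in zip(unique, values)
    ]


rows = [
    {"text": "Hello   World", "score": 10},
    {"text": "Hello   World", "score": 10},  # duplicate
    {"text": "GenAI", "score": None},        # missing number
    {"text": "", "score": 5},                # missing text
    {"text": "Data", "score": 30},
]
print(preprocess(rows))
# [{'text': 'hello world', 'score': 0.0}, {'text': 'genai', 'score': 0.5}, {'text': 'data', 'score': 1.0}]
```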

3. Selecting generative AI algorithms

Generative AI algorithms are chosen based on the desired output and the nature of the problem. Options include Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), and Transformers, among others.

4. Prototype modeling

With the selected algorithms, a basic version of the generative model is created. This prototype model gives a preliminary understanding of how the chosen algorithms perform on the given data.
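A first prototype doesn't have to be a VAE, GAN, or transformer. As a deliberately simple, swapped-in stand-in, the sketch below is a word-level bigram (Markov chain) text generator. It learns which words follow which in the training data and samples new sentences from those statistics. The tiny corpus is made up. A baseline like this mainly gives you something to compare more sophisticated models against.

```python
import random
from collections import defaultdict


def train_bigram_model(corpus):
    """Map each word to the list of words that followed it in the corpus.

    "<s>" and "</s>" mark the start and end of each sentence.
    """
    model = defaultdict(list)
    for sentence in corpus:
        words = ["<s>"] + sentence.split() + ["</s>"]
        for current, nxt in zip(words, words[1:]):
            model[current].append(nxt)
    return model


def generate(model, max_words=20, seed=None):
    """Sample a new sentence by repeatedly picking a word that followed the previous one."""
    rng = random.Random(seed)
    word, output = "<s>", []
    for _ in range(max_words):
        word = rng.choice(model[word])  # frequent successors are picked more often
        if word == "</s>":
            break
        output.append(word)
    return " ".join(output)


corpus = [
    "generative ai creates new text",
    "generative ai creates new images",
    "web scraping collects training data",
    "training data feeds generative ai",
]
model = train_bigram_model(corpus)
print(generate(model, seed=42))
```

Recombining fragments it has seen, such as "training data feeds generative ai creates new images", is a crude form of generating new content. Result analysis will quickly expose its limits.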

5. Result analysis

The model's outputs are analyzed to evaluate its initial performance, strengths, weaknesses, and potential areas for improvement.
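Result analysis can start with a couple of cheap, automatic checks before any human review. The sketch below computes two commonly used ones:

- **distinct-n:** the share of unique n-word sequences across all outputs, a rough measure of diversity.
- **copy rate:** how often the model simply reproduces a training example verbatim.

Which metrics matter depends on the project. These two are assumptions chosen for illustration.

```python
def distinct_n(texts, n=2):
    """Share of n-grams across all outputs that are unique (higher means more diverse)."""
    ngrams = []
    for text in texts:
        words = text.split()
        ngrams.extend(tuple(words[i:i + n]) for i in range(len(words) - n + 1))
    return len(set(ngrams)) / len(ngrams) if ngrams else 0.0


def copy_rate(outputs, training_texts):
    """Share of outputs that reproduce a training example verbatim (lower means more original)."""
    training = set(training_texts)
    return sum(o in training for o in outputs) / len(outputs) if outputs else 0.0


outputs = ["the cat sat", "the dog sat", "the cat sat"]
print(round(distinct_n(outputs), 2))                       # 0.67
print(copy_rate(outputs, ["the cat sat", "the dog sat"]))  # 1.0, every output is memorized
```

A copy rate of 1.0 would be a clear weakness to address in the development step, for example with more training data or a more expressive model.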

Step 2. Development

1. Data preparation and coding

The preprocessed data is further refined, structured, and organized for use in the model training process. Coding involves implementing the logic and structure of the generative model using programming languages and libraries suitable for AI development.
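As a rough sketch of what "refined, structured, and organized" can mean for text data, the code below maps words to integer IDs (models work with numbers, not strings) and holds out a validation split. Word-level tokenization is a simplification assumed here, since modern LLMs use subword tokenizers such as byte-pair encoding. The special tokens `<pad>` and `<unk>` are a common convention, not a requirement. Building the vocabulary from the training split only, as below, means unseen validation words map to `<unk>`.

```python
import random


def build_vocab(texts, specials=("<pad>", "<unk>")):
    """Assign an integer ID to every distinct lowercase word, after the special tokens."""
    vocab = {tok: i for i, tok in enumerate(specials)}
    for text in texts:
        for word in text.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


def encode(text, vocab):
    """Convert text to a list of IDs; unknown words map to <unk>."""
    unk = vocab["<unk>"]
    return [vocab.get(w, unk) for w in text.lower().split()]


def train_val_split(items, val_fraction=0.2, seed=0):
    """Shuffle reproducibly and hold out a fraction of items for validation."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n_val = max(1, int(len(items) * val_fraction)) if items else 0
    return items[n_val:], items[:n_val]


texts = [
    "Generative AI needs data",
    "Web scraping collects data",
    "Data feeds models",
    "Models generate text",
    "Text becomes data",
]
train_texts, val_texts = train_val_split(texts, val_fraction=0.2)
vocab = build_vocab(train_texts)
print(encode(val_texts[0], vocab))
```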

2. Architecture creation

Designing the model architecture includes determining the number of layers, types of layers (e.g., convolutional, recurrent), and their connections. The architecture heavily influences the model's capacity to learn and generate meaningful outputs.

3. Error handling

Robust error-handling mechanisms are integrated into the model to ensure that it can gracefully handle unexpected inputs, exceptions, and potential failures during runtime.
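Below is a minimal sketch of this kind of defensive wrapper. It validates the prompt, retries transient failures with exponential backoff, and raises one clear error if every attempt fails. `generate_fn` is a hypothetical stand-in for whatever model or API client you call. The exception types treated as retryable are assumptions you'd adapt to your own stack.

```python
import time


class GenerationError(Exception):
    """Raised when the model cannot produce a usable output."""


# Failures worth retrying; adjust to the errors your model client actually raises.
RETRYABLE = (TimeoutError, ConnectionError, GenerationError)


def safe_generate(generate_fn, prompt, max_prompt_chars=4000, retries=3, base_delay=0.5):
    """Call generate_fn(prompt) with input validation, retries, and a clear final error."""
    # Reject inputs the model can't do anything useful with.
    if not isinstance(prompt, str) or not prompt.strip():
        raise ValueError("prompt must be a non-empty string")
    # Truncate oversized inputs instead of letting the model call fail.
    prompt = prompt[:max_prompt_chars]

    last_error = None
    for attempt in range(retries):
        try:
            result = generate_fn(prompt)
            if not result:
                raise GenerationError("model returned an empty output")
            return result
        except RETRYABLE as exc:
            last_error = exc
            if attempt < retries - 1:
                time.sleep(base_delay * 2 ** attempt)  # exponential backoff: 0.5 s, 1 s, 2 s, ...
    raise GenerationError(f"generation failed after {retries} attempts") from last_error


calls = {"n": 0}


def flaky_model(prompt):
    """Hypothetical model that times out twice before succeeding."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("model server timed out")
    return f"Generated text for: {prompt}"


print(safe_generate(flaky_model, "Describe web scraping", base_delay=0.01))
# Generated text for: Describe web scraping
```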

4. Model optimization

This involves tuning the model's hyperparameters, such as learning rates and regularization strengths, to enhance its performance. Optimization techniques aim to make the model converge faster and produce higher-quality outputs.
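Grid search is the simplest way to tune hyperparameters: train once per combination and keep whichever scores best on held-out validation data. The sketch below applies it to a deliberately tiny, hypothetical task (fitting y = 2x with gradient descent) so that it runs instantly. Generative models are tuned the same way in principle, just with far more expensive training runs and usually smarter search strategies.

```python
from itertools import product


def train_linear(xs, ys, lr, l2, epochs=50):
    """Fit y ≈ w * x by gradient descent on mean squared error plus an L2 penalty."""
    w = 0.0
    n = len(xs)
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / n + 2 * l2 * w
        w -= lr * grad
    return w


def mse(w, xs, ys):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def grid_search(train, val, lrs, l2s):
    """Try every (learning rate, L2 strength) pair and keep the lowest validation loss."""
    best = None
    for lr, l2 in product(lrs, l2s):
        w = train_linear(*train, lr=lr, l2=l2)
        loss = mse(w, *val)
        if best is None or loss < best[0]:
            best = (loss, lr, l2)
    return best


train = ([1.0, 2.0, 3.0, 4.0], [2.0, 4.0, 6.0, 8.0])
val = ([5.0, 6.0], [10.0, 12.0])
loss, lr, l2 = grid_search(train, val, lrs=[0.001, 0.01, 0.1, 0.2], l2s=[0.0, 0.1])
print(lr, l2)  # 0.1 0.0
```

A learning rate of 0.001 converges too slowly within 50 steps, while 0.2 overshoots and diverges. On this noise-free data, regularization only pulls w away from the true value, so `l2=0.0` wins. On real, noisy data a nonzero value often generalizes better, which is why it is worth searching over.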

5. Development environment setup

The development environment is set up with the necessary tools, libraries, and frameworks for efficient coding, testing, and debugging of the generative AI model.

Step 3. Deployment

1. Pipelining

A streamlined pipeline is created to handle input data, process it through the generative model, and deliver the generated outputs. This ensures a smooth and automated process for end users.
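In code, a pipeline can be as simple as composing functions so that each stage's output becomes the next stage's input. This sketch assumes three hypothetical stages: input cleanup, a stand-in model, and output tidying. In production, each stage might be a separate service or queue worker.

```python
from typing import Callable

Stage = Callable[[str], str]


def make_pipeline(*stages: Stage) -> Stage:
    """Chain stages so each one's output is passed to the next."""
    def run(data: str) -> str:
        for stage in stages:
            data = stage(data)
        return data
    return run


def clean_input(text: str) -> str:
    return " ".join(text.split())  # collapse stray whitespace


def dummy_model(prompt: str) -> str:
    return f"Summary of: {prompt}"  # hypothetical stand-in for a real generative model


def tidy_output(text: str, max_chars: int = 80) -> str:
    return text.strip()[:max_chars]  # enforce a maximum output length


pipeline = make_pipeline(clean_input, dummy_model, tidy_output)
print(pipeline("  Generative   AI  in   production "))  # Summary of: Generative AI in production
```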

2. Model configuration

The model's final configurations are defined, including input and output formats, pre-processing steps, and any post-processing required to refine the generated outputs.
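One way to pin down a model's final configuration is an immutable, validated settings object that the whole pipeline reads from. The field names, defaults, and the allowed temperature range below are illustrative assumptions, not settings required by any particular model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationConfig:
    """Final serving configuration; frozen so it can't drift at run time."""

    input_format: str = "text/plain"         # what callers send
    output_format: str = "application/json"  # what callers receive
    max_input_chars: int = 4000              # preprocessing: truncate longer inputs
    max_output_tokens: int = 256             # cap on generated length
    temperature: float = 0.7                 # sampling randomness (assumed valid range: 0 to 2)
    strip_html: bool = True                  # postprocessing: remove markup from outputs

    def __post_init__(self):
        # Fail fast on invalid settings instead of at the first request.
        if not 0.0 <= self.temperature <= 2.0:
            raise ValueError("temperature must be between 0 and 2")
        if self.max_input_chars <= 0 or self.max_output_tokens <= 0:
            raise ValueError("length limits must be positive")


config = GenerationConfig(temperature=0.2)
print(config)
```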

3. Testing and debugging

Rigorous testing and debugging are conducted to identify and rectify any errors, anomalies, or performance issues in the model. This step ensures the model's reliability and stability in a production environment.

4. Setting up infrastructure

The necessary infrastructure, including hardware and software resources, is prepared to host the generative AI model in a production environment.

5. Monitoring

Monitoring mechanisms are established to track the model's performance, detect deviations from expected behavior, and gather insights for ongoing improvements.
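A minimal sketch of such monitoring tracks recent requests in a rolling window and flags deviations from expected behavior. The window size and alert thresholds below are placeholder assumptions. A production setup would typically export these numbers to a dedicated monitoring system rather than keep them in memory.

```python
from collections import deque


class RollingMonitor:
    """Track the latency and success of recent requests and flag deviations."""

    def __init__(self, window=100, max_error_rate=0.05, max_avg_latency=2.0):
        self.latencies = deque(maxlen=window)  # oldest entries drop off automatically
        self.errors = deque(maxlen=window)
        self.max_error_rate = max_error_rate
        self.max_avg_latency = max_avg_latency

    def record(self, latency_seconds, ok=True):
        self.latencies.append(latency_seconds)
        self.errors.append(0 if ok else 1)

    def error_rate(self):
        return sum(self.errors) / len(self.errors) if self.errors else 0.0

    def avg_latency(self):
        return sum(self.latencies) / len(self.latencies) if self.latencies else 0.0

    def alerts(self):
        """Return a human-readable message for every threshold currently exceeded."""
        found = []
        if self.error_rate() > self.max_error_rate:
            found.append(f"error rate {self.error_rate():.0%} exceeds {self.max_error_rate:.0%}")
        if self.avg_latency() > self.max_avg_latency:
            found.append(f"average latency {self.avg_latency():.2f}s exceeds {self.max_avg_latency:.2f}s")
        return found


monitor = RollingMonitor(window=4, max_error_rate=0.25, max_avg_latency=1.0)
for latency, ok in [(0.5, True), (0.5, True), (2.0, False), (3.0, False)]:
    monitor.record(latency, ok)
print(monitor.alerts())
# ['error rate 50% exceeds 25%', 'average latency 1.50s exceeds 1.00s']
```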

6. Scaling

To accommodate increased usage demands, strategies for scaling the model's infrastructure and resources are implemented. This ensures the model's responsiveness and efficiency as the user base grows.
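Two common scaling levers are batching, so each model call handles several requests, and concurrency, so several calls run at once. The sketch below shows both with Python's standard `concurrent.futures`. `generate_batch` is a hypothetical batch-capable model call, and the batch size and worker count are placeholder values you'd tune against real load. Threads suit I/O-bound calls to a model server; heavy local computation would instead be scaled across processes or machines.

```python
from concurrent.futures import ThreadPoolExecutor


def batched(items, size):
    """Split a list of requests into fixed-size batches (the last one may be smaller)."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def serve_requests(prompts, generate_batch, batch_size=8, max_workers=4):
    """Process prompts in batches, running several batches concurrently.

    generate_batch takes a list of prompts and returns one output per prompt.
    Results come back in the original prompt order.
    """
    batches = batched(prompts, batch_size)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in submission order, even if batches finish out of order.
        return [output for batch in pool.map(generate_batch, batches) for output in batch]


def generate_batch(prompts):
    return [p.upper() for p in prompts]  # hypothetical stand-in for a batched model call


results = serve_requests([f"prompt {i}" for i in range(10)], generate_batch, batch_size=4)
print(results[:3])  # ['PROMPT 0', 'PROMPT 1', 'PROMPT 2']
```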

Building AI models begins with data collection

As you can see, you can’t start building a GenAI model without data collection. In other words, most generative AI solutions begin with web scraping.

So whether you want to extract documents from the web and load them to vector databases for querying and prompt generation, extract text and images from the web to generate training datasets for new AI models, or use domain-specific data from the web to fine-tune an existing model, you need a reliable web scraping platform.

And yeah, it’s probably become obvious by now that I’m going to end by saying that Apify is the platform to use, but it's true! 😁

Extract text content from the web to feed vector databases and fine-tune or train large language models such as ChatGPT or LLaMA.

FAQs

Can AI do web scraping?

It's possible to combine AI algorithms with web scraping processes to automate some data extraction activities, such as transforming pages into JSON arrays. AI web scraping can be more resilient to page changes than regular scraping because it doesn’t rely on CSS selectors. However, AI models are limited by the size of their context window, so they can only process a limited amount of page content at once.

What is the difference between AI and generative AI?

AI aims to create intelligent machines or systems that can perform tasks that typically require human intelligence. Generative AI is a subfield of artificial intelligence focused on creating systems capable of generating new content, such as images, text, music, or video.

What is the difference between AI, Machine Learning, and Deep Learning?

Artificial Intelligence (AI) is a field of computer science focused on creating machines that can emulate human intelligence.

Machine Learning (ML) is a subset of AI that focuses on teaching machines to perform specific tasks with accuracy by identifying patterns. ML uses algorithms to learn from data and make informed decisions based on what it has learned.

Deep Learning (DL) is a subfield of ML that stacks many layers of artificial neurons into deep neural networks. These networks can learn complex patterns from large amounts of data, with or without labels (for example, in a self-supervised fashion).

Are Large Language Models AI?

Large language models, or LLMs, are generative AI models that use deep learning methods to understand and generate text in a human-like fashion.

Can LLMs do web scraping?

LLMs are currently unable to scrape websites directly, but they can help generate code for scraping websites if you prompt them with the target elements you want to scrape. Note that the code may not be functional, and website structure and design changes may impact the targeted elements and attributes.