Two ways to get data

No business can operate without data, but how do you collect it? Whether you're gathering market data to track media trends, automating data entry tasks, or whatever your use case may be, you have two options: web scraping and APIs. But why choose one over the other?

We'll go through the differences between web scraping and APIs, which will help you decide which one to use for your own projects.

What's web scraping?

Web scraping is a method of automatically collecting data from websites. It typically involves writing code or scripts, in any of several programming languages or tools, that simulate human browsing behavior: navigating web pages and capturing specific information. This method is especially useful when the data you need isn't available through an API.

The main aim of web scraping is to convert unstructured data into structured data that can be stored in organized databases. There are numerous web scraping techniques, such as HTTP programming, DOM parsing, and HTML parsing.
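
As a rough illustration of turning unstructured text into structured records and storing them, here is a minimal sketch. The raw strings, the `to_record` helper, and the `products` table are all made up for this example, and an in-memory SQLite database stands in for whatever database you would actually use.

```python
import sqlite3

# Hypothetical raw strings, as they might come out of a scraped product listing.
raw_rows = [
    "  Widget A | $19.99 | In stock ",
    "Widget B | $5.00 | Out of stock",
]


def to_record(raw: str) -> dict:
    """Turn one pipe-separated text fragment into a typed record."""
    name, price, availability = (part.strip() for part in raw.split("|"))
    return {
        "name": name,
        "price": float(price.lstrip("$")),
        "in_stock": availability.lower() == "in stock",
    }


records = [to_record(raw) for raw in raw_rows]

# Store the structured records in an in-memory SQLite database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (name TEXT, price REAL, in_stock INTEGER)")
conn.executemany(
    "INSERT INTO products VALUES (:name, :price, :in_stock)", records
)
conn.commit()

stored = conn.execute("SELECT name, price, in_stock FROM products").fetchall()
print(stored)
```

Real scraped text is rarely this tidy, so a production version would need validation for missing or malformed fields.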

What's an API?

API stands for Application Programming Interface. APIs are a structured way for different software systems to communicate with each other. They provide a set of rules and protocols that allow you to request and retrieve specific data from a web service or application, or to perform actions on it. Many online platforms and services offer APIs so you can access their data and functionality programmatically.

Extracting data using an API is sometimes referred to as API scraping. Unlike web scraping, which involves parsing HTML and simulating human interactions with websites, API scraping depends on the structured and programmatic access provided by APIs.

Web scraping and APIs: How do they work?

Before we delve deeper into the differences between them, let’s talk about the inner workings of web scrapers and APIs.

How do web scrapers work?

Web scrapers begin by sending an HTTP request to a specific URL to fetch the webpage's HTML content. Then, using different libraries or packages, you can parse the HTML to locate and extract the desired data elements, such as text, images, or links. There are many different web scraping tools available to choose from, depending on your project requirements.
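
The parsing step can be sketched without any network access. The example below uses Python's built-in `html.parser` module rather than a third-party library, and both the HTML string and the `LinkCollector` class are invented for illustration. In a real scraper, the HTML would come from the body of an HTTP response.

```python
from html.parser import HTMLParser

# Hypothetical HTML, standing in for the body of an HTTP response.
html = """
<html><body>
  <h1>Latest posts</h1>
  <a href="/posts/1">First post</a>
  <a href="/posts/2">Second post</a>
  <img src="/logo.png" alt="Logo">
</body></html>
"""


class LinkCollector(HTMLParser):
    """Collect the href and text of every <a> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._current_href = None

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._current_href = dict(attrs).get("href")

    def handle_data(self, data):
        # Only keep text that appears inside an <a> tag with an href.
        if self._current_href is not None and data.strip():
            self.links.append({"href": self._current_href, "text": data.strip()})

    def handle_endtag(self, tag):
        if tag == "a":
            self._current_href = None


collector = LinkCollector()
collector.feed(html)
print(collector.links)
```

Dedicated libraries offer more convenient selectors, but the underlying idea is the same: walk the markup and keep only the elements you care about.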

How does API scraping work?

API scraping involves extracting data from web services or applications through their publicly available APIs. You send HTTP requests to specific API endpoints, specifying the data you want to retrieve or the actions you want to perform. The API processes these requests and sends back structured responses, often in JSON or XML format, containing the requested data.
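
To make the request and response shapes concrete, here is a small sketch that builds a request URL and parses a JSON body. The endpoint, the query parameters, and the response content are all hypothetical. No request is actually sent, and a hard-coded string stands in for the API's reply.

```python
import json
from urllib.parse import urlencode

# Hypothetical endpoint and query parameters.
base_url = "https://api.example.com/products"
params = {"category": "books", "limit": 2}
request_url = f"{base_url}?{urlencode(params)}"
print(request_url)

# Hypothetical JSON body, standing in for what such an endpoint might return.
response_body = '{"products": [{"id": 1, "title": "Dune"}, {"id": 2, "title": "Emma"}]}'

# Because the response is already structured, extracting fields is a simple lookup.
payload = json.loads(response_body)
titles = [product["title"] for product in payload["products"]]
print(titles)
```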

Key differences

Here are some of the most common differences between web scraping and API scraping:

| Web scraping | API |
|---|---|
| Web scraping allows for the extraction of data from any website. | An API is limited to the data that the service owner chooses to provide. |
| The data a web scraper returns is often unstructured and inconsistent. | An API provides clear, concise, and structured data. |
| Web scrapers provide the data currently available on the website, in real time. | API-fetched data may be subject to update intervals. |
| Web scraping can be done at no cost, with almost no premium tools required. | APIs often limit how much data you can fetch, so you may need to pay for higher usage. |
| Because it relies on the HTML structure of a website, which can change, web scraping requires frequent updates and maintenance. | APIs require less maintenance as they are versioned, and changes are communicated by the service owner. |
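
Fetch limits are one of the differences you are most likely to hit in code. The sketch below shows one common way to cope: retrying with exponential backoff. It assumes the API signals its limit with HTTP status 429 (Too Many Requests), which is a convention rather than a guarantee. The `fetch_with_backoff` helper and the simulated responses are hypothetical.

```python
import time


def fetch_with_backoff(fetch, max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch() until it stops returning HTTP 429, waiting longer each time.

    fetch is any zero-argument callable returning (status_code, body).
    """
    for attempt in range(max_retries + 1):
        status, body = fetch()
        if status != 429:
            return status, body
        if attempt < max_retries:
            sleep(base_delay * 2 ** attempt)  # 1s, 2s, 4s, ...
    return status, body


# Simulated API that is rate limited twice before succeeding (no network needed).
responses = iter([(429, ""), (429, ""), (200, '{"ok": true}')])
delays = []  # record the waits instead of actually sleeping
result = fetch_with_backoff(lambda: next(responses), sleep=delays.append)
print(result, delays)
```

Passing `sleep` as a parameter keeps the example fast to run. In real use you would leave it at its default so the function actually waits between attempts.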

How to scrape a website using Beautiful Soup

For those new to web scraping, one of the most popular tools is Beautiful Soup. So we'll show you how to get started on your first web scraping project using this Python library along with Python Requests.

To start with, you can install both these libraries as project dependencies in your Python project:

pip install beautifulsoup4 requests

Once both libraries are installed, you're ready to start scraping. Begin by importing the requests library for making HTTP requests and the BeautifulSoup class for parsing HTML content, then set the URL of the page you want to scrape.

Example:

    import requests
    from bs4 import BeautifulSoup

    # Page to scrape
    url = 'https://example.com/'

Next, an HTTP request is sent to the specified URL using requests.get(url). The response from the server is stored in the response variable.

    response = requests.get(url)

    # 200 means the request succeeded
    if response.status_code == 200:
        soup = BeautifulSoup(response.text, 'html.parser')
        # soup.title is None when the page has no <title> tag
        title = soup.title.text if soup.title else 'No title found'
        print(f'Title found is: {title}')
    else:
        print(f"Can't fetch the page. Status Code: {response.status_code}")

Here, we're using the response status code to check whether the request was successful (200 means success). If it was, the HTML content of the page is parsed using BeautifulSoup. The title of the webpage is then extracted from the parsed HTML, specifically from the title tag, and printed to the console.

How to scrape a website using an API in Python

We've seen how scraping websites works with BeautifulSoup, so let’s see how to fetch data from an API using the same Requests library.

Start off by installing the requests library as a dependency in your project:

pip install requests

To fetch data from the API, you'll make a GET request to an endpoint that returns information about users. Replace the url and key values with the actual API endpoint and your API key:

    import requests

    # Specify the API endpoint and authentication details
    url = 'https://api.example.com/users_data'
    key = 'your_api_key_here'

Then set up headers with the authorization token and send a GET request to the API endpoint using requests.get. Check whether the request was successful (status code 200 in this case). If it was, parse the JSON response using response.json(), iterate through the user data, and print the relevant information.

    # Set up headers with authentication
    headers = {'Authorization': f'Bearer {key}'}

    # Send a GET request to the API endpoint
    response = requests.get(url, headers=headers)

    if response.status_code == 200:
        data = response.json()
        # Print each user's name and email
        for user in data['users_data']:
            print(f"UserName: {user['user_name']}, UserEmail: {user['user_email']}")
    else:
        print(f"Can't fetch the data from your API. Status Code: {response.status_code}")

The given example will print the user's data, i.e., user_name and user_email.
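
Real API responses don't always contain every field, so it can help to read them defensively. The sketch below reuses the `users_data`, `user_name`, and `user_email` keys from the example, but the `summarize_users` helper and the sample payload are hypothetical and only illustrate the idea.

```python
def summarize_users(payload: dict) -> list[str]:
    """Build one summary line per user, tolerating missing keys."""
    lines = []
    for user in payload.get("users_data", []):
        name = user.get("user_name", "<unknown>")
        email = user.get("user_email", "<unknown>")
        lines.append(f"UserName: {name}, UserEmail: {email}")
    return lines


# Hypothetical payload, shaped like the response the example expects.
sample = {
    "users_data": [
        {"user_name": "ada", "user_email": "ada@example.com"},
        {"user_name": "grace"},  # email missing on purpose
    ]
}
for line in summarize_users(sample):
    print(line)
```

Using `.get()` with a default means one incomplete record doesn't stop the whole loop with a `KeyError`.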

Web scraping or API? Which one should you choose?

Now comes the main question: which technology should you choose? The choice between web scraping and an API depends on your specific requirements. As a general guide, here are some recommendations:

- Use web scraping when you need data from websites that don't offer APIs or when you need real-time or up-to-date information. Also, if you're not sure about the business value of the data, use web scraping to get a sample dataset.

- Use an API when the service provider offers one that meets your needs or when you need data that's not available on the webpage.

What to check before deciding

Before you make a decision, do some research on the data source you're targeting. Take the time to check if the website offers an API, review its terms of service, and check the feasibility of web scraping. You should also consider the long-term implications of your choice, such as data quality, maintenance requirements, and legal compliance.
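
One small part of checking feasibility can be automated: reading a site's robots.txt rules. This is only a sketch and an assumption about what such a check might include. It doesn't replace reviewing the terms of service. The robots.txt content and the `my-scraper` user agent name are made up, and the rules are parsed from a string rather than downloaded.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real check would download it from the site.
robots_txt = """
User-agent: *
Disallow: /private/
Allow: /
""".splitlines()

parser = RobotFileParser()
parser.parse(robots_txt)

# "my-scraper" is a made-up user agent name.
for path in ("https://example.com/blog/", "https://example.com/private/data"):
    allowed = parser.can_fetch("my-scraper", path)
    print(path, "->", "allowed" if allowed else "disallowed")
```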

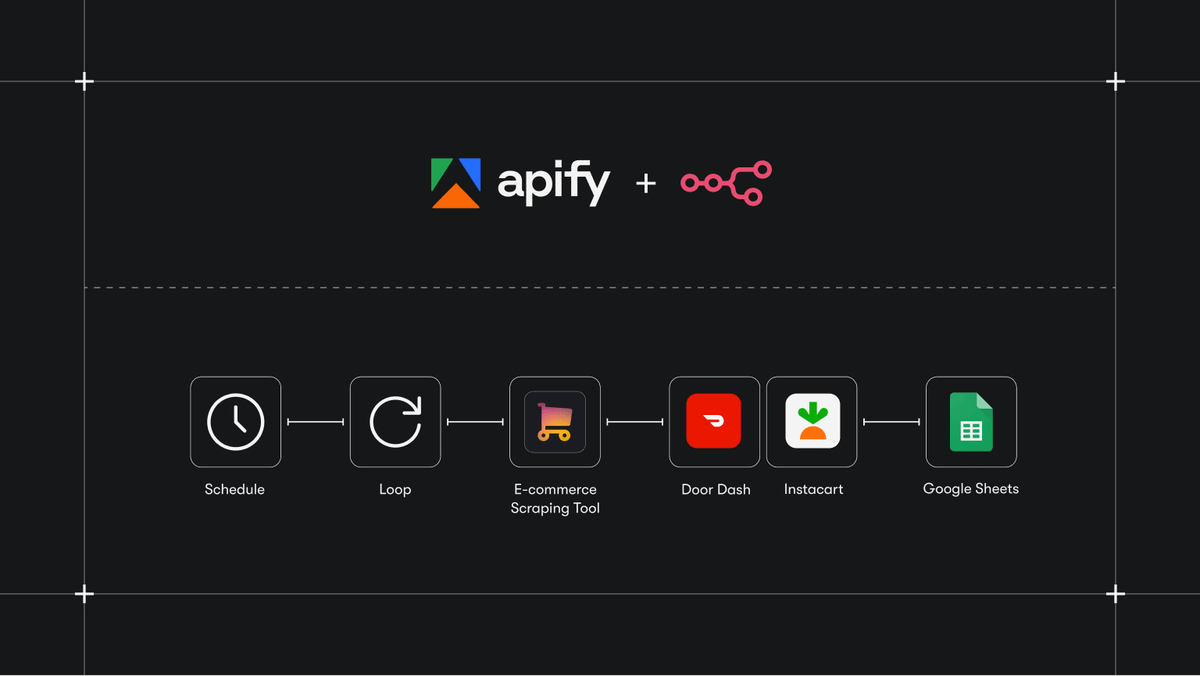

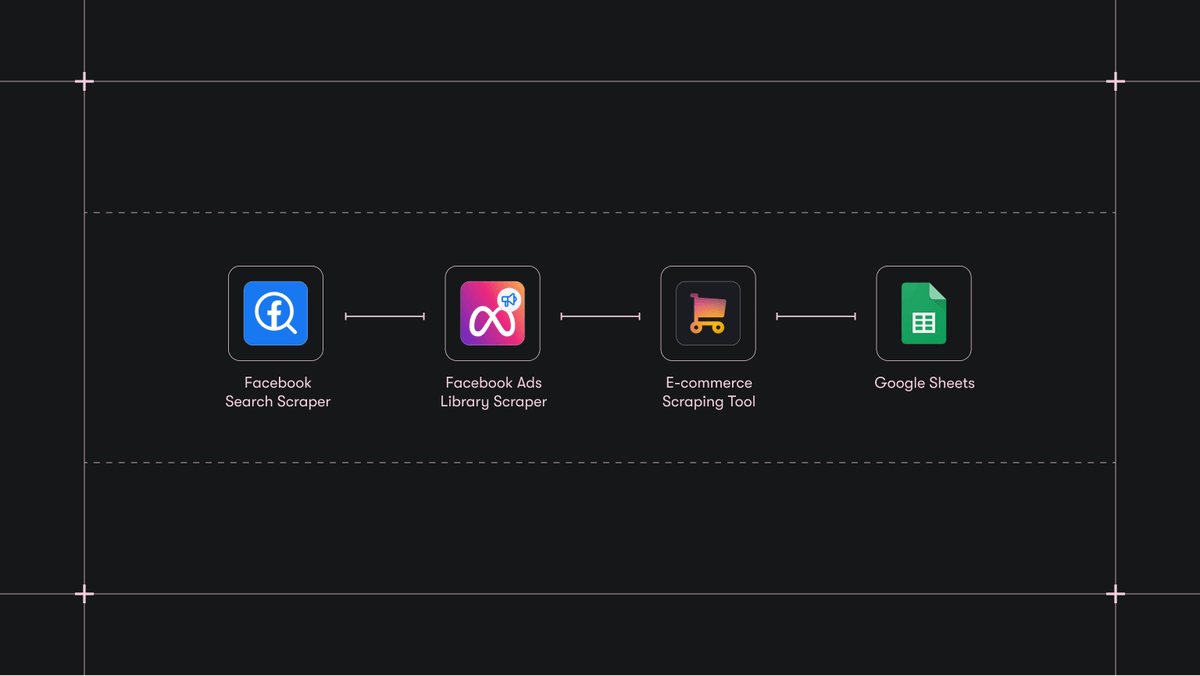

Whether you want pre-built web scraping tools or an API, Apify offers a versatile platform and infrastructure to meet your needs. If you want to build your own scrapers with an efficient web scraping library that makes it easier to handle the challenges of crawling and scraping websites at scale, check out Crawlee.