Tired of copy-pasting data from the web into your Excel sheets? No wonder: it’s endless work. But did you know there are online tools that can scrape data from any website into Excel automatically?

Yes, it’s true. Instead of copy-pasting, you can:

- Use prebuilt web scraping tools

- Use Excel’s VBA language

- Implement Excel web queries

Let’s take a look at how to deploy each of these methods. By the end, you’ll know which method is the most suitable for your project.

Method 1: Using web scraping tools

Web scraping lets you automate the data extraction process and minimize the effort put into gathering web data in bulk.

You can scrape any unstructured data from a website, be it product names, prices, headings, descriptions, or whatever else.

You can then store the data in a structured format and export it into machine-readable formats such as XLS, aka Excel.
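To make "structured format" concrete, here is a minimal Python sketch of that last step: turning scraped records into a CSV file that Excel can open. The records, field names, and the `products.csv` file name are made up for illustration; a real scraper would supply its own data.

```python
import csv
import io

# Hypothetical scraped records; a real scraper would produce these.
records = [
    {"name": "Blue mug", "price": "12.50", "description": "Ceramic, 300 ml", "url": "https://example.com/blue"},
    {"name": "Red mug", "price": "11.00", "description": "Ceramic, 250 ml", "url": "https://example.com/red"},
]


def records_to_csv(rows, fieldnames):
    """Serialize a list of dicts to CSV text, keeping only the chosen fields."""
    buffer = io.StringIO(newline="")
    # extrasaction="ignore" drops keys that are not listed in fieldnames.
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = records_to_csv(records, ["name", "price", "description"])

if __name__ == "__main__":
    # utf-8-sig adds a byte order mark, which helps Excel detect UTF-8 text.
    with open("products.csv", "w", encoding="utf-8-sig", newline="") as f:
        f.write(csv_text)
```

Excel opens the resulting file directly. Note that the description containing a comma is quoted automatically, so it stays in a single cell.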

Now, how do you do web scraping? There are two ways: a) using prebuilt scrapers, or b) building your own web scraper. Let’s take a look at both options.

Apify is a full-stack platform that lets you do both - you can build your own scraper with our web scraping templates or use a ready-made Actor from Apify Store to get the job done.

For now, let’s take a look at how you can get data from any website in 3 simple steps using scrapers found on Apify Store.

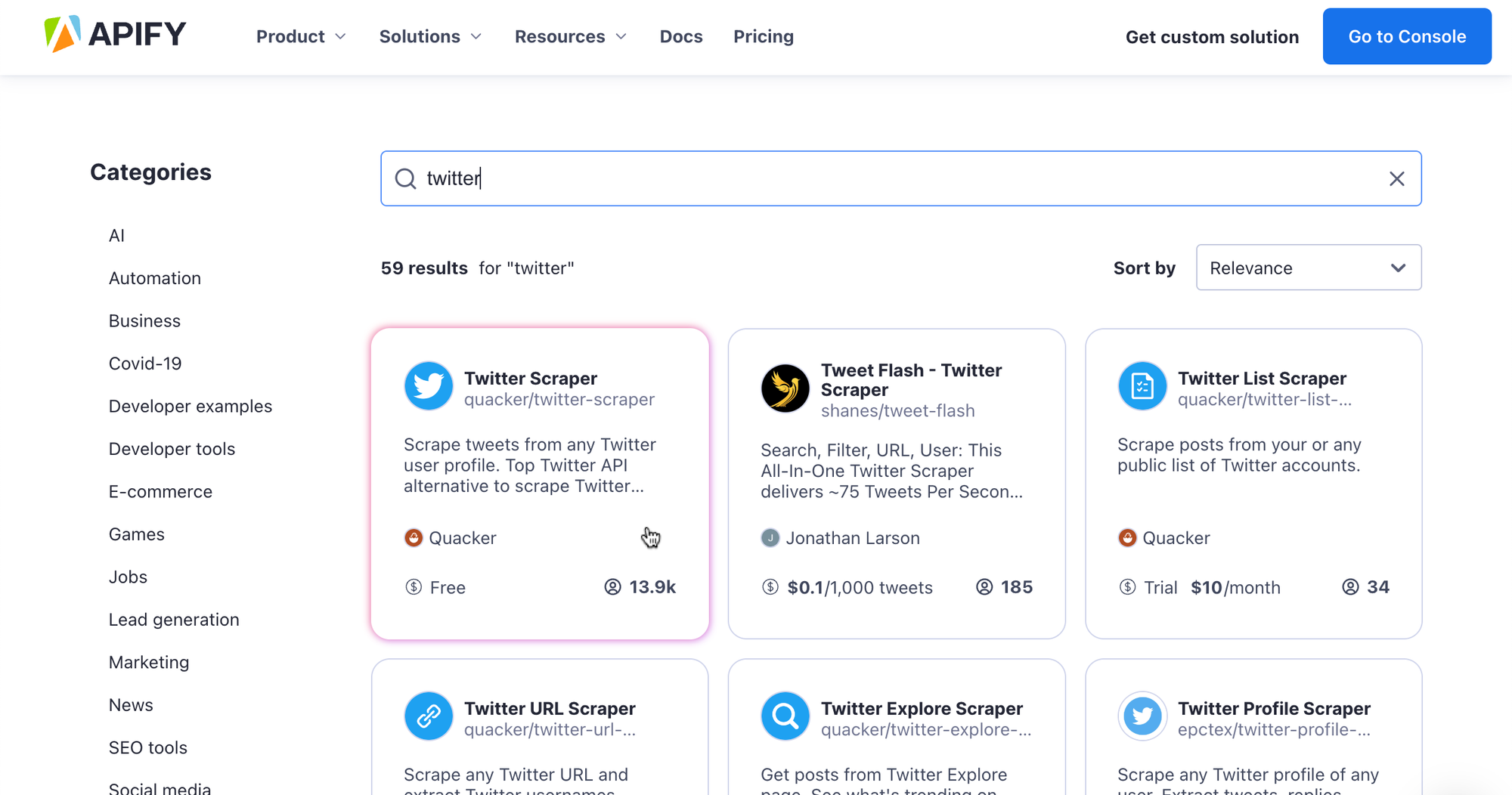

Step 1. Find the right tool

Apify Store works just like any other app store: just search for an Actor you’d like to use based on the website you want to scrape.

Most scrapers are free, although you may come across some paid ones - no need to worry; even these offer a free trial.

After you find your scraper, click the Try for Free button.

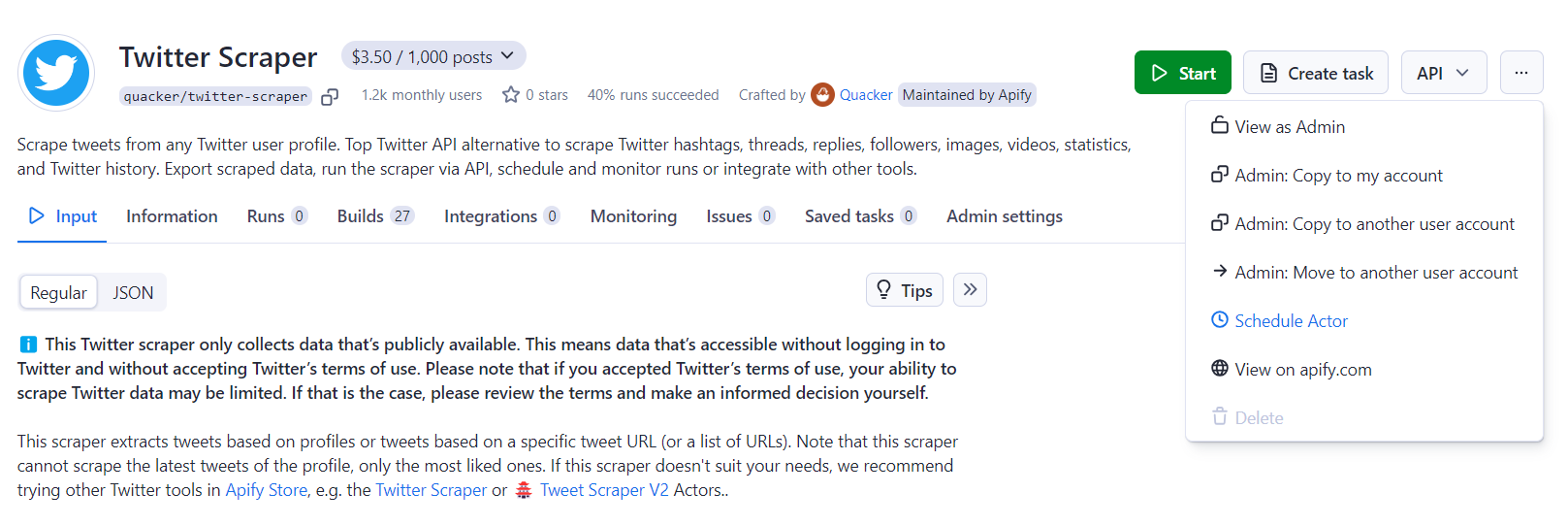

For this example, we’re going to be using Twitter Scraper.

Find a suitable Actor on Apify Store

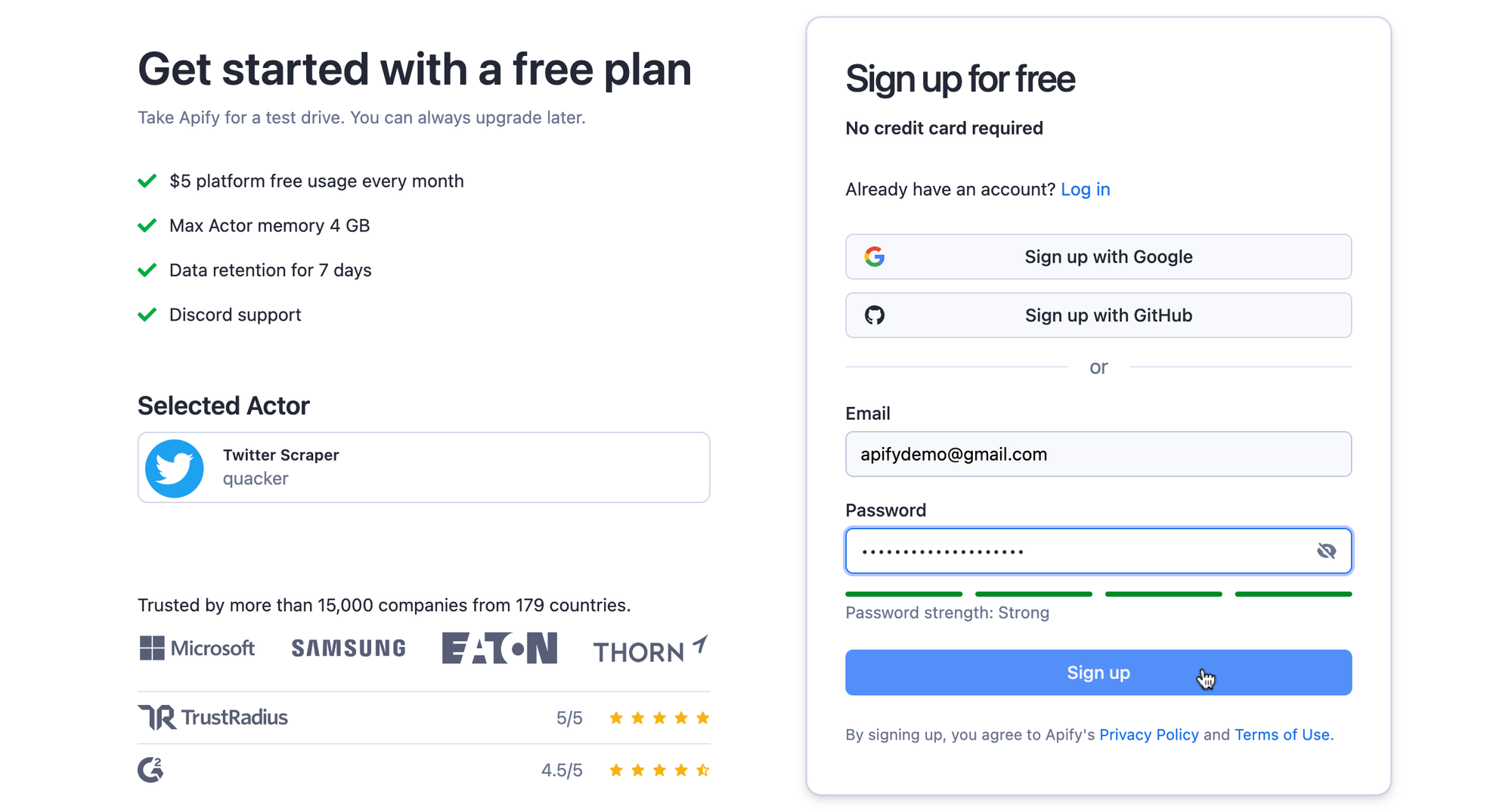

You’ll then be prompted to sign in or make an account if you don’t already have one. You can speed up the process by signing in through your Google or GitHub profile.

Sign in or make an account to access Apify Console

Step 2. Choose the data you want to scrape

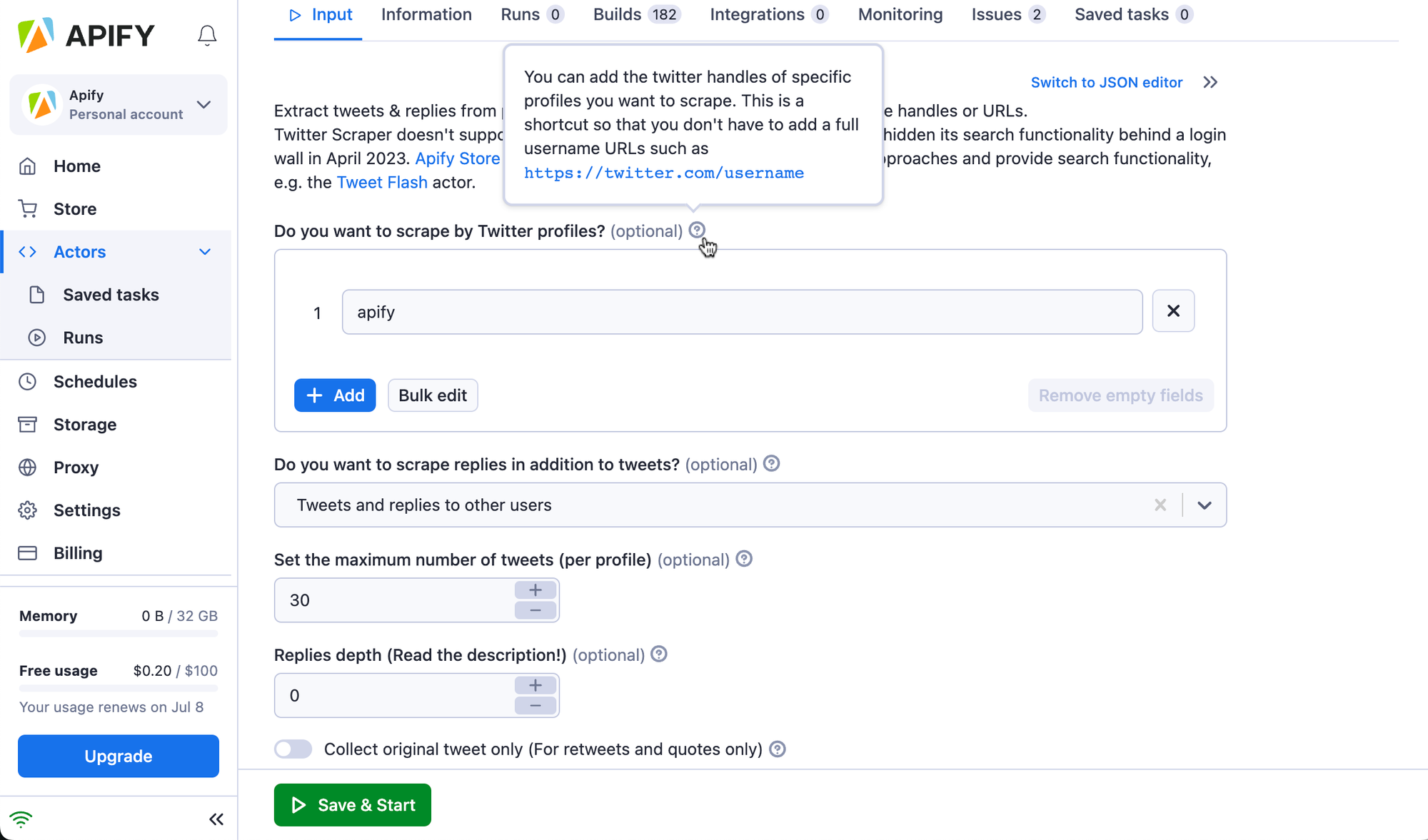

Now you’re ready to go. Fill in the input schema to tell your Actor what data you want from the website of your choice. Using Twitter Scraper, we want to scrape the top 30 results from the @apify profile.

If you’re not sure what the individual inputs mean, hover your mouse over the question mark next to them to get an explanation.

Fill out the Actor's input schema

Step 3. Run the scraper and download data from website to Excel

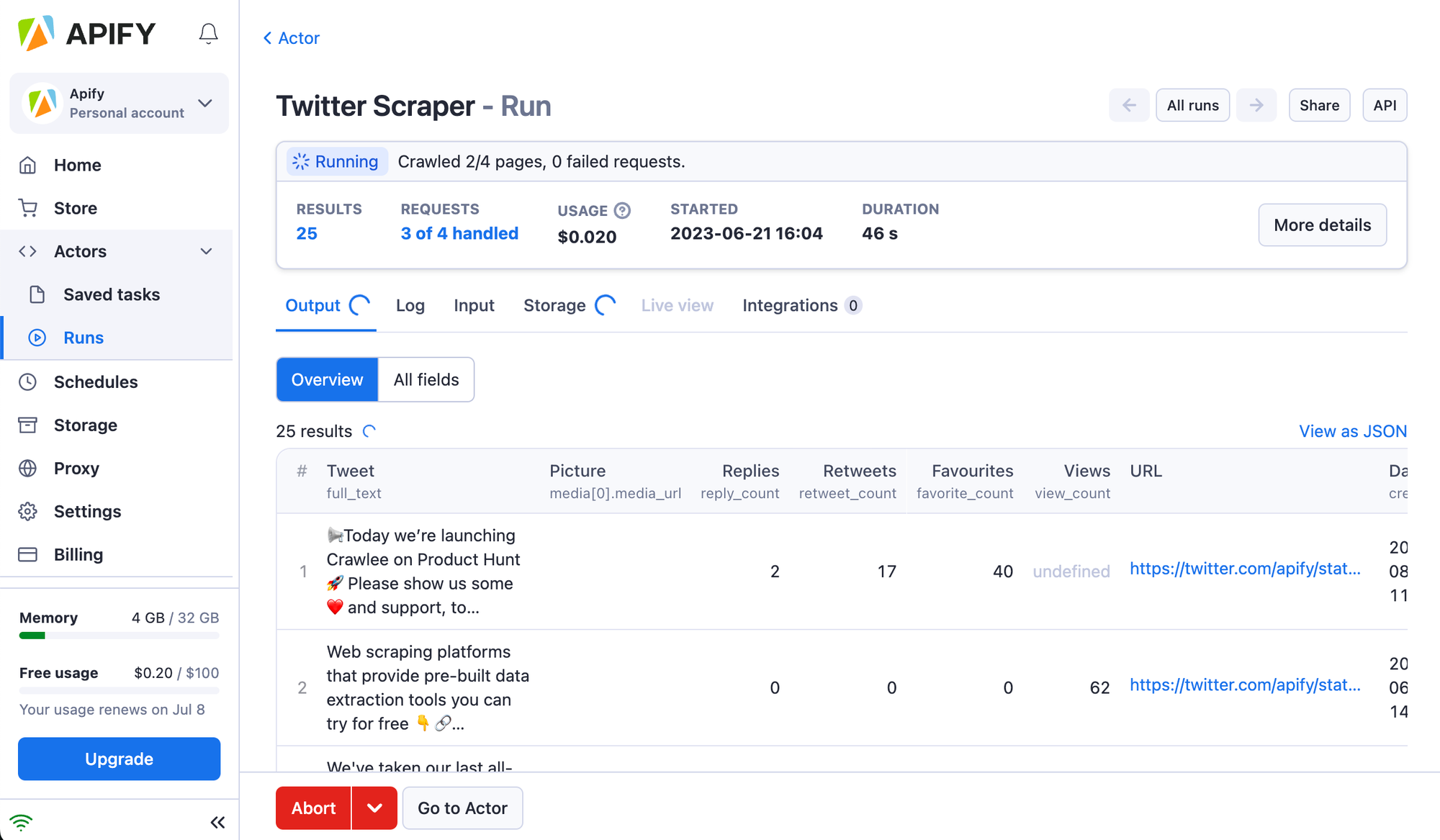

After you’re done filling out the input, hit Save & Start to kick off the Actor run, and just wait for the scraper to finish.

You can watch your results load in the Output tab

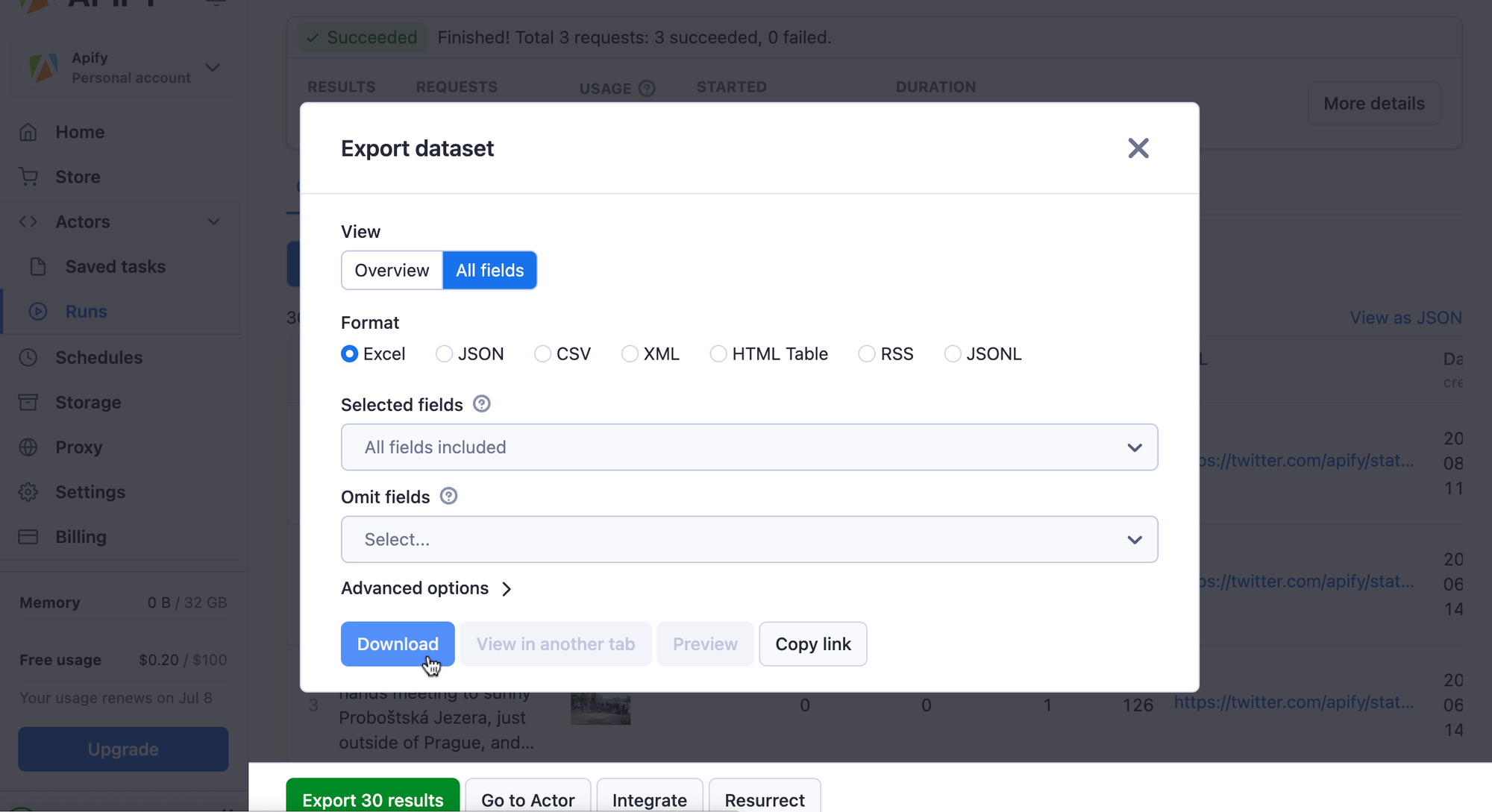

When your run is finished, you can hit the Export results button at the bottom. Here, you can choose your desired output format (in your case, it’s Excel, of course) and which fields from the table you want (or don’t want) to export.

Download your data in an Excel format
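If you would rather fetch the same export from a script than click through the Console, the Apify API exposes dataset items with a `format` query parameter. The sketch below only builds the download URL. The dataset ID and field names are placeholders, and you should check the Apify API reference for authentication details (a private dataset needs your API token).

```python
from urllib.parse import urlencode

API_BASE = "https://api.apify.com/v2"


def build_export_url(dataset_id, fields=None, omit=None, file_format="xlsx"):
    """Build a dataset download URL; fields/omit mirror the export dialog choices."""
    params = {"format": file_format}
    if fields:
        params["fields"] = ",".join(fields)  # export only these fields
    if omit:
        params["omit"] = ",".join(omit)      # leave these fields out
    return f"{API_BASE}/datasets/{dataset_id}/items?{urlencode(params)}"


# "abc123", "text", and "url" are hypothetical values for illustration.
export_url = build_export_url("abc123", fields=["text", "url"])
print(export_url)
```

Opening the printed URL in a browser, or downloading it with any HTTP client, should give you the same Excel file as the Export results button.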

Method 2: Using Excel’s VBA language

Microsoft’s VBA (Visual Basic for Applications) brings the event-driven programming language Visual Basic into the Office ecosystem. It lets you automate processes, create macros and custom forms, or customize applications to fit your business needs.

However, implementing data extraction into your Excel sheet with VBA requires advanced programming and web scraping knowledge.

If you’re an experienced developer, here’s how to do it:

Step 1: Enable the Developer tab

Open Excel and go to the File menu. Select Options, then Customize Ribbon. Check the Developer option and click OK.

Step 2: Open the VBA editor

Go to the Developer tab and click Visual Basic. This will open the VBA editor where you can write your script.

Step 3: Write the VBA script

Write a VBA script to extract data from the website. You might need a library such as Microsoft XML (its MSXML2.XMLHTTP object sends HTTP requests) or Selenium for pages that only render in a real browser.

Step 4: Run the VBA script

Execute your VBA script to fetch the data and populate your Excel sheet. You may need to debug and adjust the script based on the website's structure and data format.

Method 3: Implementing Excel web queries

Are you a Windows user? If so, there’s one more way to get structured data straight into your Excel sheet automatically: Microsoft’s Power Query feature.

Here’s a quick guide on how to do it:

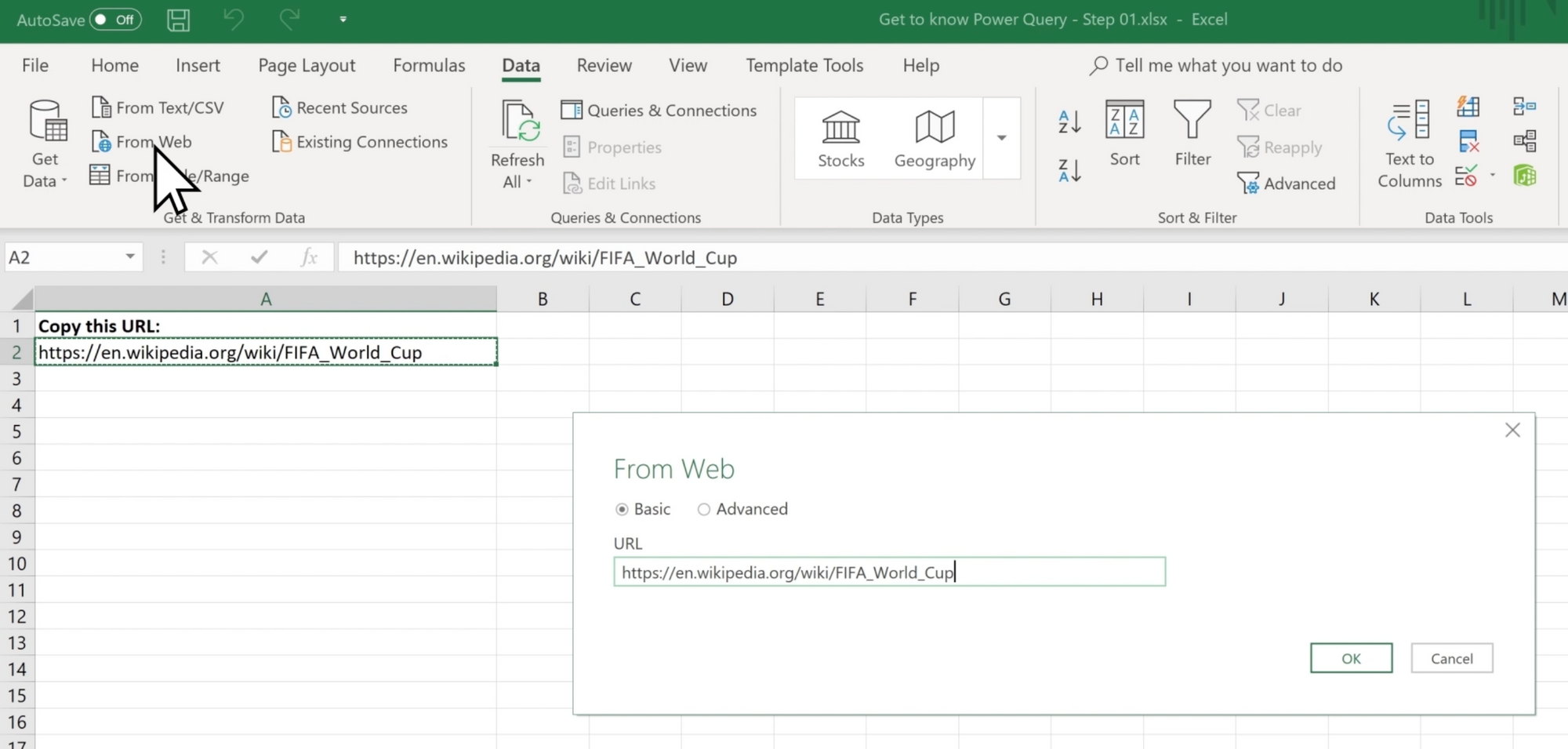

Step 1: Go to the Data tab

Open Excel and go to the Data tab.

Step 2: Select *From Web*

Click the From Web button to open a new query.

Step 3: Enter the URL

Input the URL of the website from which you want to extract data. Excel will then process the page and find any usable tables it can pull from it.

Step 4: Import the data

One more click imports the data into your Excel sheet. You can refresh this query anytime to update your data.
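To picture what "finding usable tables" in step 3 means, here is a rough Python sketch that scans a page for `<table>` elements and collects their cells. It illustrates the idea only and is not how Power Query works internally. The sample HTML is invented, and nested tables are not handled.

```python
from html.parser import HTMLParser


class TableFinder(HTMLParser):
    """Collect the cell text of every <table> in an HTML document."""

    def __init__(self):
        super().__init__()
        self.tables = []   # each table is a list of rows; each row a list of cells
        self._row = None   # cells of the row being read, or None
        self._cell = None  # text fragments of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "table":
            self.tables.append([])
        elif tag == "tr" and self.tables:
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Text outside a cell (headings, paragraphs) is ignored.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.tables[-1].append(self._row)
            self._row = None
            self._cell = None


SAMPLE_HTML = """
<h1>Price list</h1>
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Blue mug</td><td>12.50</td></tr>
</table>
<p>No table here.</p>
"""

finder = TableFinder()
finder.feed(SAMPLE_HTML)
print(len(finder.tables), "table(s) found")
```

The heading and the paragraph are skipped, which is also why this method struggles with pages that present their data outside of tables.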

Which method is the best for pulling data from a website into Excel?

It’s impossible to say which method is the best for scraping data from any website directly to Excel. It depends on the needs of your specific project. Here is a table summarizing the key factors for each method.

| Method | Ease of use | Flexibility | Operating system compatibility |
|---|---|---|---|
| Manual copy and pasting | Easy | Low | All |
| Implementing web queries | Moderate | Medium | Windows only |
| Excel’s VBA language | Hard | High | All |
| Apify Store scraper | Easy | Very High | All |

How to extract data from a website to Excel automatically?

Now you know how to use web scraping tools, but it would be annoying to do it manually all the time, right?

Luckily, you don’t have to.

You can automate an Apify Actor so it runs every day, week, or month, whatever you prefer, without you even lifting a finger.

Here’s how to set up the automation:

Step 1: Configure the scraper

Set up the input configuration for your Actor. Just give it the website and the data you want to scrape. It’s pretty much the same as when you ran the scraper for the first time.

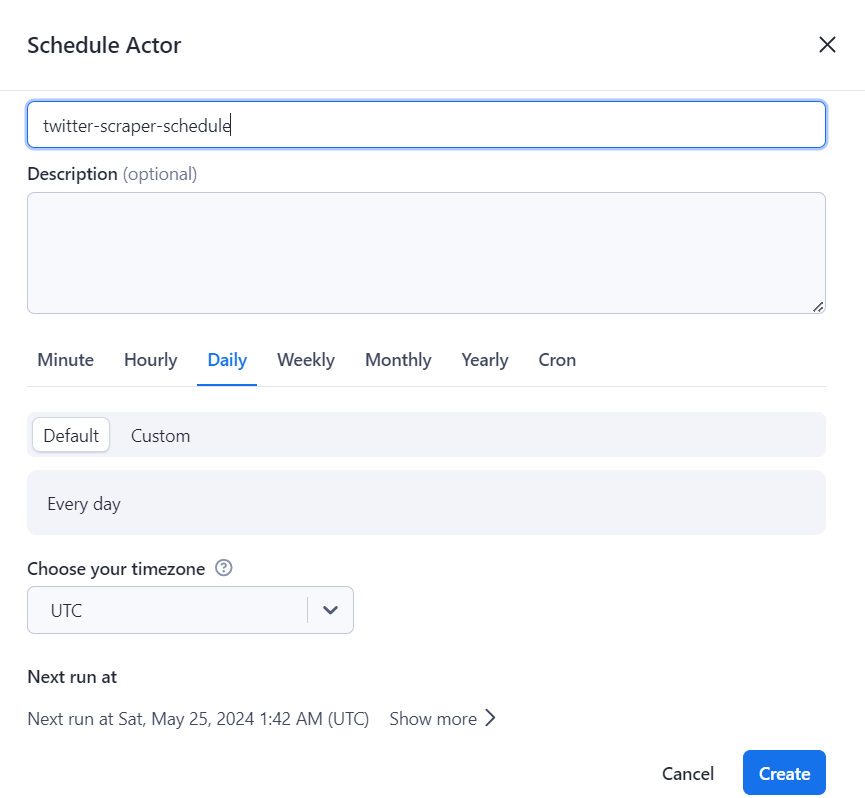

Step 2: Schedule the Actor

The real magic comes right now. Click on the three dots in the right-hand corner and select Schedule Actor.

Then, select when you want the scraper to run and at what frequency (hourly, daily, weekly, etc.), add a description, and click Create.
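Apify schedules can also be expressed as cron expressions, which is handy when you need something more specific than the preset frequencies. The Python sketch below lists a few common expressions and splits one into its five fields. The 8:00 run time is an arbitrary choice for illustration.

```python
# Common frequencies written as five-field cron expressions.
# The 8:00 run time is an arbitrary example, not a recommendation.
CRON_BY_FREQUENCY = {
    "hourly": "0 * * * *",    # minute 0 of every hour
    "daily": "0 8 * * *",     # every day at 08:00
    "weekly": "0 8 * * 1",    # every Monday at 08:00
    "monthly": "0 8 1 * *",   # the 1st of every month at 08:00
}

FIELD_NAMES = ("minute", "hour", "day_of_month", "month", "day_of_week")


def describe(expression):
    """Split a five-field cron expression into named parts."""
    parts = expression.split()
    if len(parts) != len(FIELD_NAMES):
        raise ValueError(f"expected 5 fields, got {len(parts)}")
    return dict(zip(FIELD_NAMES, parts))


print(describe(CRON_BY_FREQUENCY["weekly"]))
```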

Step 3: Automate export to Excel

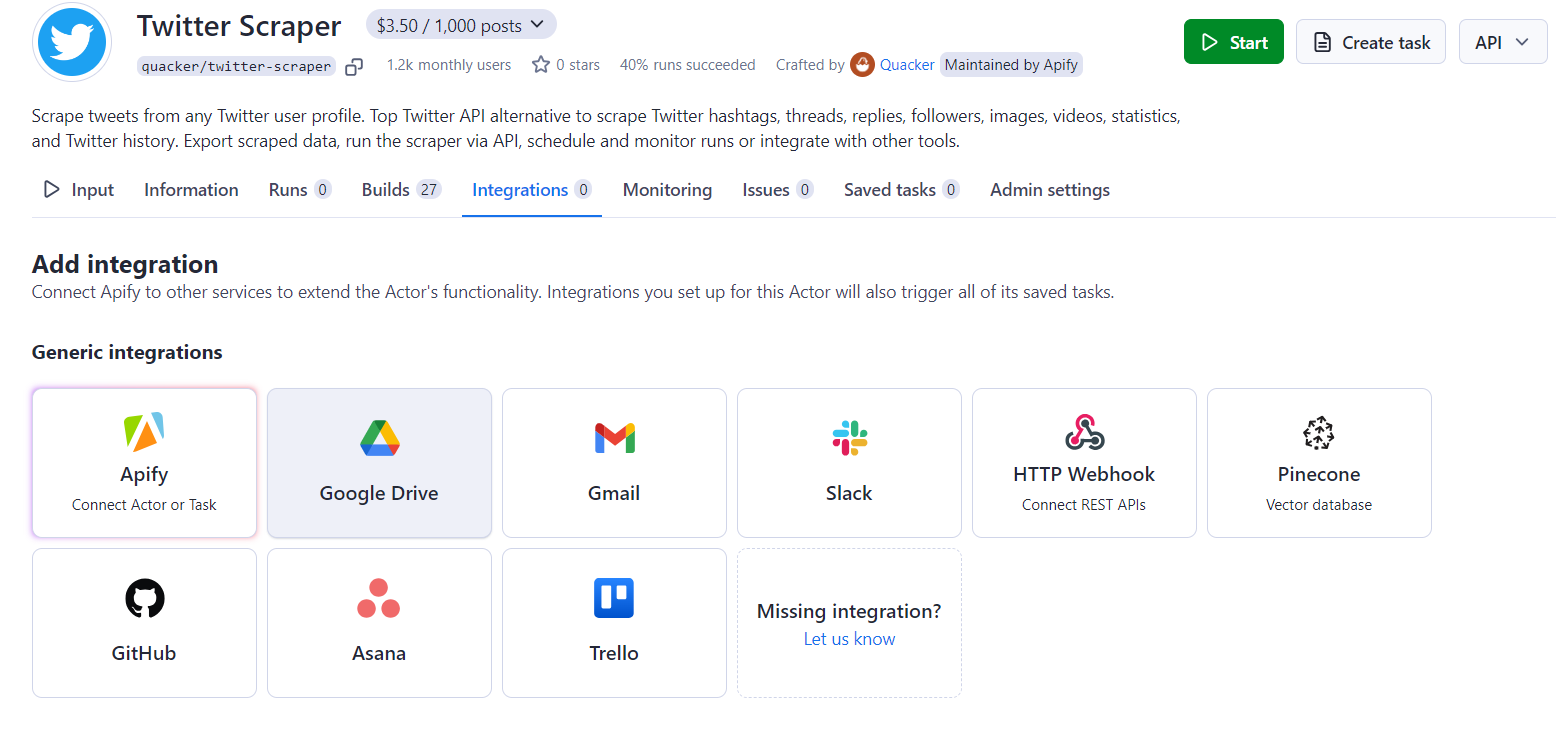

The last thing you need is to automate the downloads so you don’t have to do it manually. For this to work, you need to integrate the Actor with, let’s say, Google Drive or Gmail. The scraped datasets are uploaded there automatically every time the Actor run ends.

How do you do it? Click on the Integrations tab and select the integration you want to use.

Then, you’ll need to log in to your Google account to enable this feature. And that’s it.

It’s your turn to try it!

Conclusion

Now you know there are several ways to pull data from a website into Excel, and it’s not easy to say which one you should use. So, let’s briefly recap the options and their pros and cons.

- Manual copy-pasting is simple but time-consuming and impractical. This is not the right way, for sure.

- Excel web queries are good enough for structured data. Unfortunately, you won’t find a lot of it on the web. Besides, Excel web queries are limited to Windows and require some setup.

- Visual Basic for Applications (VBA) is a powerful and flexible tool, but you’ll need advanced programming skills, and it can be complex to maintain. Moreover, if you possess programming skills at this level, it might be easier to just write a web scraper of your own.

- Web scraping tools are highly flexible and easily automated, which makes them ideal for large and complex datasets. You only need to know how to run, schedule, and integrate them - a piece of cake for any person living in the 21st century.

So…which solution are you gonna use?