Why you need to improve AI models

Did you know that AI models degrade over time?

No? Hardly surprising. It's not something that often pops up in the deluge of content about the latest AI news, planned upgrades, and the next big step for deep learning.

So, sorry to burst that bubble. But it's a fact.

No matter how sophisticated the algorithm or how diverse the training dataset is, if you don't retrain and improve your AI models, not only will they not get better, they'll get worse.

Why is that? You're lucky I'm not busy. I'll explain it to you.

91% of machine learning models degrade over time

The problem of temporal quality degradation in AI

The quality degradation of AI models stems from the fact that they become dependent on the data as it was at the time of training. Data-producing environments often change over time, and their statistical properties change alongside them. In other words, as a model is tested on current datasets in quickly changing contexts, the model's predictive ability inevitably declines. This phenomenon, known as “concept drift”, “model drift”, or “AI aging”, can significantly impact the quality of AI models.
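To make the idea of shifting statistical properties a bit more concrete, here's a minimal sketch of one common way to measure it: the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it's distributed now. The function name, the bin count, and the sample data are my own assumptions for illustration, not something any particular tool prescribes.

```python
import math

def psi(reference, current, bins=10):
    """Population Stability Index between a training-time sample and a current one."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all reference values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref, cur = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))

# Hypothetical feature values: the "current" data has drifted upward by 3 units.
training_values = [i / 100 for i in range(1000)]
current_values = [v + 3 for v in training_values]
print(round(psi(training_values, current_values), 2))
```

A PSI near zero means the two samples look alike. A frequently quoted rule of thumb treats values above roughly 0.25 as a sign of significant drift, but that threshold is a convention rather than a guarantee, so treat it as a starting point.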

The problem of AI model collapse

Another form of AI degeneration is a phenomenon known as model collapse. It occurs when AI is trained on synthetic data. By synthetic data, we mean artificially generated information created to augment or replace real data to improve machine learning models.

This problem of model collapse has been exacerbated by people filling the internet with AI-generated content and then feeding that content to AI models.

Researchers from the UK and Canada have demonstrated that the use of model-generated content in training causes irreversible defects in the resulting models. This is because the models forget information about important but less common aspects of the underlying data distribution. As a result, they begin to produce increasingly similar outputs.

Model collapse is a degenerative process affecting generations of learned generative models, where generated data ends up polluting the training set of the next generation of models.
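The "forgetting the less common aspects" part is easier to see with a toy simulation. The sketch below is not the researchers' experiment. It's a deliberately simplified stand-in in which the "model" is just the empirical frequency of each token, and every generation is trained only on samples drawn from the previous one. The token names, counts, and sample size are hypothetical.

```python
import random
from collections import Counter

def next_generation(counts, sample_size, rng):
    """'Train' on the data (its empirical frequencies), then generate a new dataset from it."""
    tokens = list(counts)
    weights = [counts[t] for t in tokens]
    return Counter(rng.choices(tokens, weights=weights, k=sample_size))

def simulate(generations=20, sample_size=200, seed=0):
    rng = random.Random(seed)
    # Hypothetical human-written data: one common token and several rare ones.
    data = Counter({"common": 150, "rare_a": 20, "rare_b": 15, "rare_c": 10, "rare_d": 5})
    support = [len(data)]  # number of distinct tokens still present
    for _ in range(generations):
        data = next_generation(data, sample_size, rng)
        support.append(len(data))
    return support

print(simulate())
```

The list it prints is the number of distinct tokens left after each generation. That number can stay flat or drop, but it can never go back up: once a rare token fails to appear in one generation's output, no later generation can recover it.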

3 ways to improve AI models

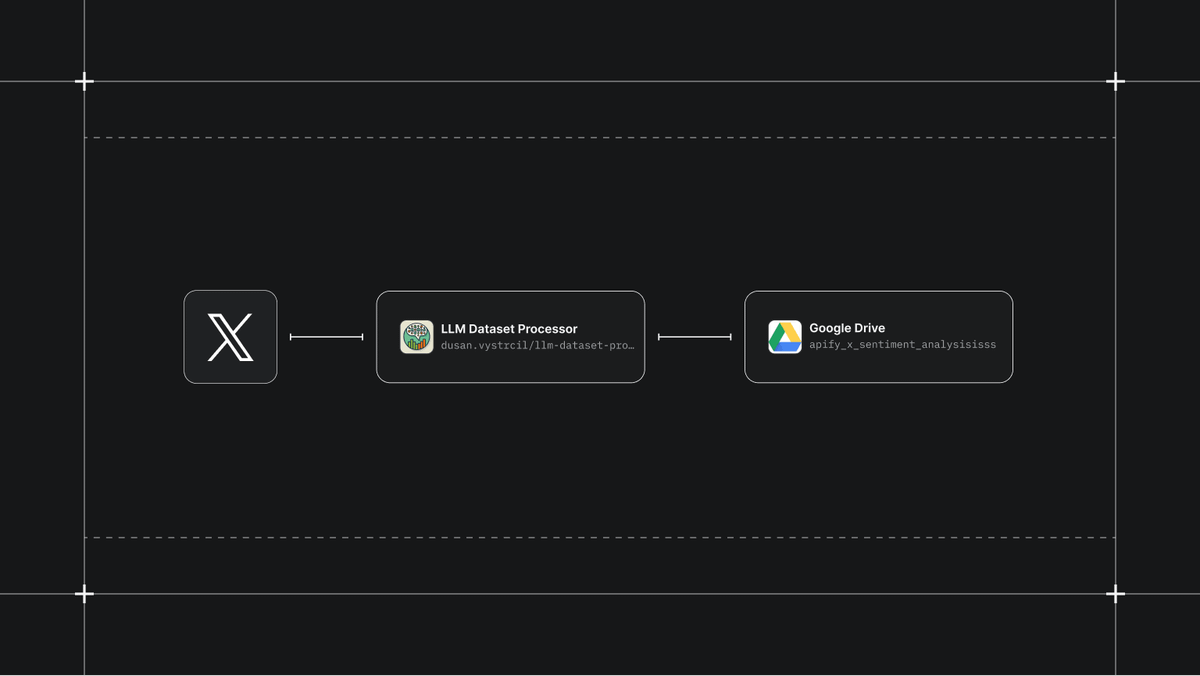

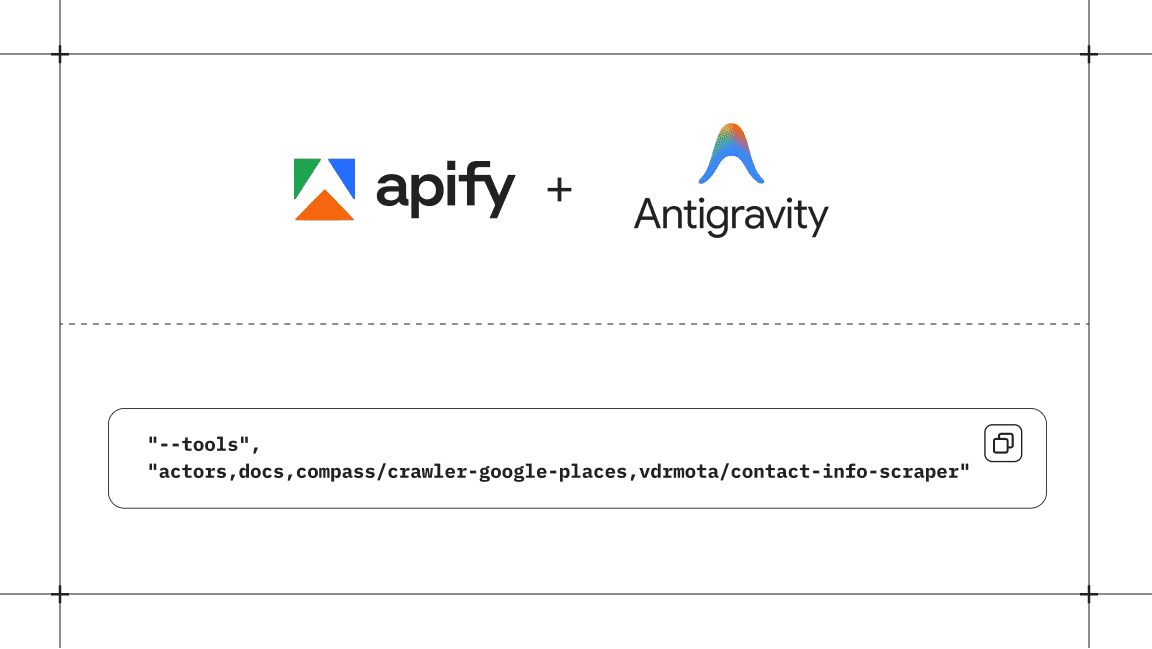

1. Web scraping to feed AI models with the right data

With their ever-growing user base, AI solutions continue to become more complex, and better and more diverse data is needed to develop them. Web scraping is the go-to solution for this problem. It's a method of harvesting data from websites and the most efficient way to collect web data to expand your training dataset and improve LLMs.

Web scraping is also used for customizing and fine-tuning generative AI. By adding information relevant to your use case (for example, feeding a language model data from your website to create an AI chatbot), you can make sure that the information the language model provides to customers is accurate.

If you're web scraping for large language models like ChatGPT or image models like Midjourney, you want to avoid extracting AI-generated content to get quality training datasets.

The problem is there's no mass labeling mechanism to differentiate between AI-generated and human-generated data. So unless you're extracting information that you're confident is human content (from before the time of ChatGPT, for example), there's no easy way to make the distinction and therefore be certain that you're introducing human-generated datasets back into the AI model's training.

A more reliable solution is to ensure that you retain a copy of an exclusively human-produced dataset to periodically refresh and retrain the model. But the problem of degradation means you can’t have one dataset of human-produced content forever. You’ll need reliable, up-to-date information as well, which means you’ll need new datasets of human-produced content to retrain the AI.
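One rough way to approximate the "from before the time of ChatGPT" filter is to split scraped records by publication date. The sketch below assumes each record is a dictionary with a `published` field in ISO format (`YYYY-MM-DD`). That schema is hypothetical, so adapt it to whatever your scraper actually returns. The cutoff is ChatGPT's public launch date, 30 November 2022.

```python
from datetime import date

CHATGPT_RELEASE = date(2022, 11, 30)  # public launch date of ChatGPT

def split_by_cutoff(records, cutoff=CHATGPT_RELEASE):
    """Separate records published before the cutoff from those published on or after it."""
    older, newer = [], []
    for record in records:
        published = date.fromisoformat(record["published"])
        (older if published < cutoff else newer).append(record)
    return older, newer

# Hypothetical scraped records.
records = [
    {"url": "https://example.com/a", "published": "2021-05-01"},
    {"url": "https://example.com/b", "published": "2023-01-15"},
]
likely_human, needs_review = split_by_cutoff(records)
```

This is a heuristic, not a guarantee. Publication dates can be wrong or edited, and machine-generated text existed before ChatGPT. It also does nothing for the newer content you'll still need, which is why the second list is labeled as needing review rather than thrown away.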

2. Enhancing data quality

Web scraping to expand your dataset isn't enough to improve generative AI. The extracted data needs to be processed before feeding it to the model. For example, if you use a web scraping tool like Website Content Crawler, you can simultaneously use it to remove duplicate text lines and HTML elements you don't want in the training dataset. This is useful for data annotation, data labeling, and improving model accuracy.
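If you're curious what that kind of cleanup involves, here's a minimal sketch using only Python's standard library. To be clear, this is not how Website Content Crawler works internally. It's a generic illustration of stripping markup, skipping `script` and `style` content, and dropping duplicate lines. The class and function names are my own.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collects visible text and ignores anything inside <script> or <style>."""
    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)

def clean(html):
    """Return the page text with tags removed, whitespace normalized, and duplicate lines dropped."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    seen, lines = set(), []
    for line in "".join(parser.parts).splitlines():
        line = " ".join(line.split())  # collapse runs of whitespace
        if line and line not in seen:
            seen.add(line)
            lines.append(line)
    return "\n".join(lines)

page = "<p>Hello  world</p>\n<script>var x = 1;</script>\n<p>Hello world</p>\n<p>Bye</p>"
print(clean(page))
```

Exact-match deduplication like this only catches identical lines. Near-duplicates (the same paragraph with a different date in it, say) need fuzzier matching, which is beyond this sketch.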

3. Augmenting data

Data augmentation, like synthetic data, aims to increase the size and diversity of the training data for machine learning models. A key difference is that synthetic data is generated from scratch, while data augmentation uses an existing training dataset to create new examples.

Synthetic data can introduce bias or lose realism, which is why it's the main cause of model collapse. Augmented data, however, stays closer to the quality and diversity of the original training dataset because it's derived from real examples. Combining the two carefully often achieves the best results in machine learning applications.

While data augmentation is most popular in the area of computer vision applications (for example, flipping, rotating, or scaling an image to create a new data entry), it's also one of many handy techniques for NLP (natural language processing). In the context of language models, augmenting data involves altering the text (for example, replacing words with synonyms without changing the meaning) to create a new data entry.
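Here's a minimal sketch of the synonym-replacement idea for text. The synonym table is a tiny, hand-written stand-in that I made up for the example. A real project would draw on a much larger lexical resource and would check that the replacement really does preserve the meaning in context.

```python
import random

# Hypothetical synonym table, for illustration only.
SYNONYMS = {
    "quick": ["fast", "rapid"],
    "help": ["assist", "support"],
    "big": ["large", "huge"],
}

def augment(sentence, rng, probability=0.5):
    """Create a new example by swapping some known words for a synonym."""
    words = []
    for word in sentence.split():
        options = SYNONYMS.get(word.lower())
        if options and rng.random() < probability:
            words.append(rng.choice(options))
        else:
            words.append(word)
    return " ".join(words)

rng = random.Random(42)  # seeded so runs are repeatable
original = "We can help with a quick reply"
variants = [augment(original, rng) for _ in range(3)]
```

Each variant keeps the original sentence structure and length, so the label attached to the original example (its intent or sentiment, for instance) can usually be reused for the new entry.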

Should you retrain your AI model?

Retraining AI regularly is the most obvious solution to AI degeneration. You should monitor how your model performs after deployment compared with how well it functioned during training. If you see a decline in performance, then it's time to retrain the model with additional sources of ground truth, manual data labeling, and large data volumes.
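In practice, that monitoring can start out very simple. The sketch below compares accuracy on a recent batch of labeled production data with the accuracy you recorded at training time, and flags the model if the drop exceeds a tolerance. The function names and the 0.05 tolerance are assumptions for illustration. The right metric and threshold depend on your use case.

```python
def accuracy(predictions, labels):
    """Share of predictions that match the ground-truth labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

def needs_retraining(baseline_accuracy, predictions, labels, tolerance=0.05):
    """Return (flag, live_accuracy); flag is True when live accuracy falls
    more than `tolerance` below the training-time baseline."""
    live = accuracy(predictions, labels)
    return live < baseline_accuracy - tolerance, live

# Hypothetical numbers: 0.90 accuracy at training time, a small recent labeled batch.
flag, live = needs_retraining(0.90, [1, 0, 1, 1], [1, 0, 0, 0])
```

Note that this only works if you keep collecting ground-truth labels after deployment, which is one more reason the manual data labeling mentioned above matters.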

The final word: AI needs human-generated datasets

I said earlier that the problem with collecting web data for retraining AI models is the data pollution caused by AI-generated content. While web scraping is definitely the best way to feed specific web data to LLMs (to customize AI chatbots, for example), it won't solve the problem of degradation and model collapse on its own.

You need to monitor your AI model, retrain it with fresh data, and make sure you have human-generated content for the retraining. For that, content produced by LLMs won't help. You'll need human writers like me! 😀

We've all heard about the AI arms race, but there'll soon be a scramble for human content, too. Only those companies and platforms with access to human-generated data will be able to create the best quality generative AI models.

Frequently asked questions about AI

How do I train an AI model?

Whether your AI is an LLM or an image model, the basic steps for training AI are the same:

- Prepare the training data.

- Create a dataset.

- Train the model.

- Evaluate and iterate on your model.

- Get predictions from your model.

- Interpret prediction results.

How can I make my AI more reliable?

To improve an AI model, you need to train and optimize it with appropriate and diverse datasets and algorithms. This will improve accuracy and efficiency and help to reduce variance.

How do I build a generative AI model?

The process of building generative AI models has three main steps: prototyping, development, and deployment. The prototyping stage begins with data collection and ends with result analysis. The development stage begins with data preparation and ends with model optimization. The deployment stage begins with pipelining and ends with scaling.

How are large language models trained?

LLMs like GPT and BERT are trained with a large dataset from various data sources scraped from the web. This is what enables large language models to generate output for a wide range of tasks.

What is web scraping?

Web scraping is a technique used to automatically extract data from websites and online sources. It involves using software tools or scripts to access web pages, parse the HTML content, and retrieve specific information.
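As a minimal sketch of the "parse the HTML content and retrieve specific information" part, the example below pulls every link out of an HTML string using Python's standard library. The class and function names are my own. Fetching the page is left out to keep the example self-contained, and the HTML string here is made up.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag encountered while parsing."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)

def extract_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links

# Hypothetical page content.
html = '<ul><li><a href="/pricing">Pricing</a></li><li><a href="/docs">Docs</a></li></ul>'
print(extract_links(html))
```

Real scrapers add a lot on top of this, such as handling JavaScript-rendered pages, retries, and rate limits, which is why dedicated scraping tools exist.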

Can AI do web scraping?

It's possible to combine AI algorithms with web scraping processes to automate some data extraction activities, such as transforming pages to JSON arrays. AI web scraping is more resilient to page changes than regular scraping as it doesn’t use CSS selectors. However, AI models are restricted by limited context memory.

What is data augmentation?

Data augmentation is a collection of techniques for automatically generating high-quality data on top of existing data. It is common in computer vision applications and sometimes used in natural language processing.

What is degradation in AI?

As a model is tested on current datasets in quickly changing contexts, the model's predictive ability inevitably declines. This change in accuracy leads to model degradation. This process of decreasing performance is also known as model drift.

What is AI model collapse?

Model collapse is a degenerative process affecting generations of learned generative models, where generated data ends up polluting the training set of the next generation of models.