We're Apify, a full-stack web scraping and browser automation platform that serves as a bridge between the web's vast data resources and sophisticated AI tools like Hugging Face.

With this article on computer vision and image classification, we bring our Hugging Face series to an end.

Transformers for vision tasks

So far in this series, we have applied Hugging Face only to NLP tasks. In 2021/22, when I was new to Hugging Face and transformers, I mistakenly believed they were limited to NLP, so it was surprising to find computer vision tasks on Hugging Face too. Luckily, we can use the same Hugging Face API for vision tasks, and this article is dedicated to them.

Vision transformers

It would be unfair to jump directly into the subject without saying a few words about the transformer model behind all these vision tasks. Personally, it took me some time to realize that we can use transformers for computer vision tasks.

In one of the iconic papers of the current decade (or perhaps the whole history of AI), the Google Research team presented Vision Transformers in 2021. Vision transformers (often abbreviated as ViTs) are a revelation: without using any convolutions, and given enough pre-training data, they match (and often surpass) the performance of traditional CNNs.

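To put it another way, CNNs slide small filters across the image, so a sense of locality and spatial structure is built into the architecture. Vision transformers instead cut the image into chunks (16 × 16 pixels in the original model), flatten each chunk, and feed it to the transformer as a token, much like a word in a sentence. Only learned position embeddings tell the model where each patch came from, and self-attention lets every patch attend to every other patch. Hence, they work with far fewer built-in assumptions about images than CNNs and depart from conventional, convolution-based computer vision.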
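To make the "patches as tokens" idea concrete, here is a minimal NumPy sketch of how a 224 × 224 RGB image becomes a sequence of tokens. It assumes the original ViT-Base setup (16 × 16 patches, 768-dimensional embeddings). The projection matrix, [CLS] token, and position embeddings are random stand-ins for weights that a real ViT learns, so this only illustrates the shapes involved; it is not Hugging Face's implementation.

```python
import numpy as np

PATCH = 16        # patch size of the original ViT (vit-base-patch16-224)
EMBED_DIM = 768   # hidden size of ViT-Base

def image_to_patches(image: np.ndarray, patch: int = PATCH) -> np.ndarray:
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    h, w, c = image.shape
    if h % patch or w % patch:
        raise ValueError("image height and width must be multiples of the patch size")
    # (rows, patch, cols, patch, C) -> (rows, cols, patch, patch, C)
    grid = image.reshape(h // patch, patch, w // patch, patch, c).transpose(0, 2, 1, 3, 4)
    # One row per patch, in row-major order: (num_patches, patch * patch * C)
    return grid.reshape(-1, patch * patch * c)

def embed_patches(patches: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Project patches to tokens, prepend a [CLS] token, add position embeddings.

    All weights here are random placeholders; in a trained ViT they are learned.
    """
    num_patches, patch_dim = patches.shape
    projection = rng.normal(scale=0.02, size=(patch_dim, EMBED_DIM))
    cls_token = rng.normal(scale=0.02, size=(1, EMBED_DIM))
    position = rng.normal(scale=0.02, size=(num_patches + 1, EMBED_DIM))
    tokens = patches @ projection                         # (num_patches, EMBED_DIM)
    tokens = np.concatenate([cls_token, tokens], axis=0)  # (num_patches + 1, EMBED_DIM)
    return tokens + position                              # each token learns "where it is"

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))   # stand-in for a normalized RGB image
patches = image_to_patches(image)
tokens = embed_patches(patches, rng)
print(patches.shape)  # (196, 768): 14 x 14 patches of 16 * 16 * 3 values
print(tokens.shape)   # (197, 768): 196 patch tokens + 1 [CLS] token
```

The 197 tokens are what the transformer layers then process with self-attention; nothing in this step looks at neighbouring pixels across patch borders, which is the contrast with convolutions.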

For the sake of brevity, I'll wrap up vision transformers here and move on to the meat of the topic: image classification.

Image classification

Image classification is a pretty straightforward task.

We have an image X and an ML model f. After processing the image, the model (already trained on some dataset(s)) returns y = f(X), the class of the image. The task has a wide range of applications: from visual search on search engines to our smartphones tagging gallery items, image classification is everywhere.
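In practice, a classifier like f usually outputs one raw score (logit) per class; a softmax turns these into probabilities, and the most probable classes are reported (the Hugging Face pipeline used below reports the top five by default). The pure-Python sketch below mimics only that last step, with made-up logits for three hypothetical classes. It is an illustration, not the code Hugging Face runs.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)                         # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(logits, labels, top_k=2):
    """Return the top_k classes as score/label dicts, highest score first."""
    probs = softmax(logits)
    ranked = sorted(zip(probs, labels), reverse=True)[:top_k]
    return [{"score": p, "label": label} for p, label in ranked]

# Hypothetical logits that a model f might output for one image X
labels = ["cat", "dog", "bird"]
logits = [2.0, 0.5, -1.0]
predictions = classify(logits, labels)
print([p["label"] for p in predictions])  # ['cat', 'dog']
y = predictions[0]["label"]               # y: the predicted class
```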

So enough preamble. Let’s try it out.

```python
# In a Jupyter/Colab notebook; drop the "!" when running pip in a terminal
!pip install transformers

from transformers import pipeline

imageClassifier = pipeline("image-classification")
```

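By default, the pipeline uses google/vit-base-patch16-224 by Google Research, one of the original ViT models from the paper that introduced ViTs. Its name describes its setup: it was trained on images of size 224 × 224, split into patches of size 16 × 16. It was pre-trained on ImageNet-21k and fine-tuned on ImageNet's 1,000 classes, so its predictions are always ImageNet labels.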

To give you an idea of how popular this model is, I'd like to share a little stat: in September 2023 alone, this model was used by Hugging Face users more than 1M times.

Since we have to fetch the images, we need to import the respective libraries:

```python
import requests
from PIL import Image
from io import BytesIO
```

I'm assuming that the Pillow library is already installed. Now, I'll take an image from WikiArt and try image classification on it.

```python
imageUrl = "https://uploads7.wikiart.org/images/vincent-van-gogh/still-life-vase-with-fifteen-sunflowers-1888-1.jpg!Large.jpg"

# Download the image and open it with Pillow
response = requests.get(imageUrl)
randomImage = Image.open(BytesIO(response.content))

# Run the classifier and display its predictions
y = imageClassifier(randomImage)
y

# Output:
# [{'score': 0.8306444883346558, 'label': 'daisy'},
#  {'score': 0.035788558423519135, 'label': 'vase'},
#  {'score': 0.024422869086265564, 'label': 'pot, flowerpot'},
#  {'score': 0.0025065126828849316, 'label': 'cardoon'},
#  {'score': 0.001841061981394887, 'label': 'hamper'}]
```

As you can see, it returns the five most probable classes along with their scores (probabilities). I'd like to try more images, so I'm wrapping these steps in a function.

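```python
def ClassifyImage(url):
    # Fetch the image from the URL and open it with Pillow
    response = requests.get(url)
    randomImage = Image.open(BytesIO(response.content))
    # Return the classifier's top predictions
    y = imageClassifier(randomImage)
    return y
```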
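Because the pipeline returns a plain list of dictionaries, post-processing needs no extra libraries. The helper below is a hypothetical addition (not part of transformers) that sorts such a list, drops predictions under an assumed 1% cut-off, and formats the rest as percentages. It is shown on the sunflower output from above, copied verbatim.

```python
def summarize(predictions, min_score=0.01):
    """Format pipeline-style [{'score': ..., 'label': ...}] output as readable lines.

    Predictions scoring below min_score (a hypothetical cut-off) are dropped.
    """
    lines = []
    for pred in sorted(predictions, key=lambda p: p["score"], reverse=True):
        if pred["score"] >= min_score:
            lines.append(f"{pred['label']}: {pred['score']:.1%}")
    return lines

# Output of imageClassifier(randomImage) for the sunflower painting
sunflowers = [
    {'score': 0.8306444883346558, 'label': 'daisy'},
    {'score': 0.035788558423519135, 'label': 'vase'},
    {'score': 0.024422869086265564, 'label': 'pot, flowerpot'},
    {'score': 0.0025065126828849316, 'label': 'cardoon'},
    {'score': 0.001841061981394887, 'label': 'hamper'},
]
for line in summarize(sunflowers):
    print(line)
# daisy: 83.1%
# vase: 3.6%
# pot, flowerpot: 2.4%
```

With a live pipeline, the same helper can be applied directly, e.g. `summarize(ClassifyImage(url))`.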

The image earlier was a straightforward one with flowers and a vase. Let’s try some more complex ones with even more objects, like “The Starry Night”.

```python
ClassifyImage("https://uploads4.wikiart.org/00142/images/vincent-van-gogh/the-starry-night.jpg!Large.jpg")

# [{'score': 0.8935281038284302,
#   'label': 'book jacket, dust cover, dust jacket, dust wrapper'},
#  {'score': 0.013123863376677036, 'label': 'comic book'},
#  {'score': 0.010687396861612797, 'label': 'prayer rug, prayer mat'},
#  {'score': 0.004971278831362724, 'label': 'jigsaw puzzle'},
#  {'score': 0.003436336060985923, 'label': 'binder, ring-binder'}]
```

As we can see, it got it horribly wrong. This image is understandably harder to interpret, but I feel that misclassifying such an iconic image (it has been used a lot in computer vision too, e.g., in the classic style transfer papers of the mid-2010s) is something Google Research won't be too proud of. Of course, one or a few examples can't evaluate a model. Let's try another:

```python
ClassifyImage("https://p.imgci.com/db/PICTURES/CMS/367700/367784.jpg")

# [{'score': 0.24710936844348907, 'label': 'ballplayer, baseball player'},
#  {'score': 0.10306838899850845, 'label': 'baseball'},
#  {'score': 0.0459364615380764, 'label': 'croquet ball'},
#  {'score': 0.028997069224715233, 'label': 'crutch'},
#  {'score': 0.02327670156955719, 'label': 'knee pad'}]
```

The photo is from a cricket match, and since cricket is pretty similar to baseball and croquet, this is a pretty good prediction. It also highlights an important issue: AI models are biased toward whatever is prevalent in their training datasets.

Other models

Instead of ransacking the default model, we can try out other ones. As of this writing (Oct 2023), there are 6,000+ image classification models available on Hugging Face, such as:

- MobileViT by Apple - similar to MobileNet, MobileViT is a small-sized model that combines convolutions with transformer blocks. It suits applications on relatively low-resource devices, like mobile phones.

- Age classifier - a fine-tuned ViT that takes an image of a person and predicts their age range.

While exploring models, I observed some things:

- The majority of models are still either purely convolutional (like ResNet-50 by Microsoft or ConvNeXt by Meta) or a combination of convolutional layers with transformers.

- PyTorch Image Models (timm) has contributed a huge number of models (1,000+). Exploring them can be pretty handy if you're looking for a specific model.

- The majority of transformer models are fine-tuned versions of the original ViT model.

Now, I'm curious to check the age classifier ViT, so let’s use it.

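```python
# Free the memory held by the default ViT (ClassifyImage() won't work after this)
del imageClassifier

# Load a ViT fine-tuned to predict age ranges
imageClassifierAge = pipeline("image-classification", model="nateraw/vit-age-classifier")
```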

I'll adapt the aforementioned ClassifyImage() here, swapping in the new pipeline:

```python
def PredictAge(url):
    # Same steps as ClassifyImage(), but with the age classifier
    response = requests.get(url)
    randomImage = Image.open(BytesIO(response.content))
    y = imageClassifierAge(randomImage)
    return y
```
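PredictAge() is ClassifyImage() with a different pipeline, so one way to avoid copying the function for every new model is to pass the pipeline in as an argument. The sketch below does that; `make_url_classifier` and `load_image_from_url` are hypothetical names, the timeout value is an assumption, and the image loader is injectable so the wrapping logic can be checked without network access. Note that the transformers image-classification pipeline can also take an image URL string directly, which would make the manual download step unnecessary.

```python
from io import BytesIO

def load_image_from_url(url, timeout=10):
    """Download an image and open it with Pillow.

    requests and Pillow are imported inside the function so that the wrapper
    below can be used (and tested) without them.
    """
    import requests
    from PIL import Image

    response = requests.get(url, timeout=timeout)
    response.raise_for_status()  # fail loudly on HTTP errors instead of passing bad bytes to Pillow
    return Image.open(BytesIO(response.content))

def make_url_classifier(classifier, load_image=load_image_from_url):
    """Wrap any image-classification callable so it can be called with a URL."""
    def classify_url(url):
        return classifier(load_image(url))
    return classify_url

# Usage with the age pipeline from this article (hypothetical replacement for PredictAge):
# PredictAge = make_url_classifier(imageClassifierAge)
```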

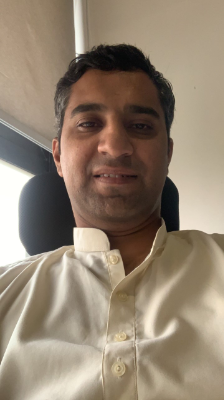

Charity begins at home. I tried my own picture, and boom: it’s pretty spot on.

```python
PredictAge("https://media.iclr.cc/Conferences/ICLR2023/img/talha-headshot.png")

# [{'score': 0.6846951842308044, 'label': '30-39'},
#  {'score': 0.1642615795135498, 'label': '20-29'},
#  {'score': 0.13719308376312256, 'label': '40-49'},
#  {'score': 0.007695643696933985, 'label': '50-59'},
#  {'score': 0.0058859908021986485, 'label': '10-19'}]
```

I tried it on a famous picture of Victor Hugo, too.

```python
PredictAge("https://www.les-crises.fr/wp-content/uploads/2015/12/Victor_Hugo_by_%C3%89tienne_Carjat_1876_-_full3.jpg")

# [{'score': 0.46074625849723816, 'label': '60-69'},
#  {'score': 0.21264179050922394, 'label': '50-59'},
#  {'score': 0.17364054918289185, 'label': 'more than 70'},
#  {'score': 0.0924532413482666, 'label': '40-49'},
#  {'score': 0.027529381215572357, 'label': '30-39'}]
```

The old boy was in his 70s when this photo was taken, so he should consider himself lucky to be judged a bit younger. My guess (just a hypothesis) is that his firm face, with fewer wrinkles than a typical person of his age, explains it.

I've thoroughly enjoyed these models, and I'll keep exploring, as I have heaps of tabs open in my browser. I'm sure you'll enjoy these models too. Ciao!