For 30 years, APIs have been how software talks to software. A human engineer reads the documentation, writes the integration code, and the API gets called when a user clicks a button. But now that AI agents are becoming a significant new class of consumer, that model is changing.

Agents are calling endpoints thousands of times per second and processing data at scales no human workflow could match. More than 30% of the increase in API demand is expected to come from AI tools and LLMs in 2026. The API economy is being repriced, redesigned, and repurposed for a world of non-human clients.

The problem with APIs built for humans

Most APIs were built with a developer in mind. Ambiguity was fine because humans could infer intent. Inconsistency was tolerable because developers could debug and adapt.

Agents can do neither. They need machine-readable schemas, predictable response structures, and explicit error handling. They can't infer intent from vague field names or navigate documentation built for human eyes.

The consequences are already visible. Agents are calling APIs at machine speed with perfect persistence, and a single misunderstood endpoint becomes a flood of broken calls. Yet only 24% of developers design APIs with AI agents in mind. The result is agents hallucinating nonexistent parameters, missing auth requirements, and generating code that fails on first execution.

Agent-readable API design

Because APIs were designed to be read by humans, every layer assumes a person is present. Specs assume someone will infer context from incomplete descriptions. Errors assume someone will debug. Documentation assumes someone will browse. Auth assumes someone will click. Designing APIs for agents means stripping that assumption out, layer by layer.

Specifications

When an AI coding tool integrates a payment API, the agent reads the spec, selects endpoints, writes the code, and handles errors - often before the developer has read a line of output. But specs rot: field names lose meaning without descriptions, enums accumulate undocumented values, and endpoints get renamed without their descriptions following. When an agent selects an endpoint, it performs something close to semantic search against those descriptions. "Gets the data" loses to "Returns a paginated list of invoices filtered by status and date range, sorted by created_at descending." Every description should give the agent something specific to match against.
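To make the selection point concrete, here is a toy sketch. It assumes plain keyword overlap as a crude stand-in for whatever matching a real agent performs, and the endpoint paths are hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens; a crude stand-in for real semantic matching."""
    return set(re.findall(r"[a-z_]+", text.lower()))


def score(query: str, description: str) -> float:
    """Fraction of the query's tokens that also appear in the description."""
    q = tokens(query)
    return len(q & tokens(description)) / len(q) if q else 0.0


# Hypothetical endpoints; the two descriptions are the ones quoted above.
ENDPOINTS = {
    "/v1/data": "Gets the data",
    "/v1/invoices": (
        "Returns a paginated list of invoices filtered by status and "
        "date range, sorted by created_at descending."
    ),
}


def select_endpoint(query: str) -> str:
    """Pick the endpoint whose description overlaps the query the most."""
    return max(ENDPOINTS, key=lambda path: score(query, ENDPOINTS[path]))
```

Asked to "list unpaid invoices by status and date", this picks `/v1/invoices`, because the vague description shares no words with the request at all.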

There’s a subtler context problem, too. APIs are typically documented for developers who already know the product: they understand the payment flow, know which endpoint to call first, and can infer what comes next. Agents have none of that context. A good description for an agent says what the endpoint does, which preconditions must hold before calling it, and what the logical next steps are, not just its inputs and outputs.
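One way to keep that workflow context from getting lost is to store it as structured fields and render the description from them. The sketch below is illustrative only: `EndpointDoc` and the payment-capture wording are hypothetical, not taken from any real API.

```python
from dataclasses import dataclass, field


@dataclass
class EndpointDoc:
    """Hypothetical container for an agent-facing endpoint description."""

    summary: str
    preconditions: list[str] = field(default_factory=list)
    next_steps: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join summary, preconditions, and next steps into one description."""
        parts = [self.summary]
        if self.preconditions:
            parts.append("Before calling: " + "; ".join(self.preconditions) + ".")
        if self.next_steps:
            parts.append("Next: " + "; ".join(self.next_steps) + ".")
        return " ".join(parts)


# Illustrative payment-capture endpoint.
capture = EndpointDoc(
    summary="Captures funds for a previously authorized payment.",
    preconditions=["the payment must be in the 'authorized' state"],
    next_steps=["poll the payment status until it is 'captured'"],
)
```

Calling `capture.render()` produces a single description string that could be placed in the spec's description field for that operation.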

Error responses

A response of {"error": "Model not found"} tells an agent nothing useful. A response that returns what was requested, a suggested correction, and a pointer to where valid values can be retrieved gives the agent everything it needs to retry without human intervention. Redesigning error responses around this principle can cut wasted token consumption by over 60%.
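A minimal sketch of such an error body follows. The model names, field names, and the `/v1/models` path are assumptions made for illustration; the suggestion comes from the standard library's `difflib`.

```python
import difflib

# Hypothetical catalogue of valid model names.
VALID_MODELS = ["small-v1", "medium-v1", "large-v1"]


def model_not_found(requested: str) -> dict:
    """Build an error body an agent can act on without human help."""
    matches = difflib.get_close_matches(requested, VALID_MODELS, n=1)
    return {
        "error": {
            "code": "model_not_found",
            "message": f"Model '{requested}' does not exist.",
            "requested": requested,                       # what was asked for
            "suggestion": matches[0] if matches else None,  # closest valid value
            "valid_values_url": "/v1/models",             # hypothetical lookup endpoint
        }
    }
```

An agent that asked for `large-v2` gets `large-v1` back as the suggestion and can retry immediately; when nothing is close, the pointer to the list of valid values is still there.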

Documentation format

Converting docs to token-efficient formats like Markdown and llms.txt can reduce AI token consumption by 90% or more compared to HTML. APIs legible to agents also rank better in AI search tools like Perplexity and ChatGPT. Writing for machine consumers and writing for answer engine visibility are the same discipline.
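As a rough sketch, an llms.txt index can be generated from data you already have. The layout below (a title, a one-line summary as a blockquote, then sections of Markdown links) follows the llms.txt proposal as commonly described; the function name and the example URL are hypothetical.

```python
def render_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render a small llms.txt-style index as Markdown.

    `sections` maps a heading to (title, url, note) entries.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical API and URL, for illustration only.
index = render_llms_txt(
    "Example API",
    "Invoicing API.",
    {"Docs": [("Auth", "https://example.com/auth.md", "How to get tokens")]},
)
```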

Authentication

Traditional two-factor flows are a complete blocker for autonomous agents. The solution isn’t just switching to machine-compatible auth, but adopting just-in-time authorization: issuing short-lived, narrowly scoped tokens that expire as soon as they’re no longer needed. That limits the damage a leaked or misused credential can do without blocking legitimate agent activity.
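The idea can be sketched with nothing but the standard library. This is not a production token format (a real deployment would more likely use an established standard such as OAuth 2.0 with signed tokens); the secret, the scope names, and both function names are placeholders.

```python
import base64
import hashlib
import hmac
import json
import time

SECRET = b"demo-secret"  # placeholder; load from a secret store in practice


def issue_token(agent_id, scopes, ttl_seconds=60, now=None):
    """Issue a signed token that carries limited scopes and a short expiry."""
    issued = time.time() if now is None else now
    payload = json.dumps(
        {"sub": agent_id, "scopes": sorted(scopes), "exp": issued + ttl_seconds}
    ).encode()
    sig = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return base64.urlsafe_b64encode(payload).decode() + "." + sig


def authorize(token, required_scope, now=None):
    """Accept only an untampered, unexpired token that holds the scope."""
    current = time.time() if now is None else now
    try:
        body, sig = token.split(".")
        payload = base64.urlsafe_b64decode(body)
    except ValueError:  # wrong number of parts or invalid base64
        return False
    expected = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    claims = json.loads(payload)
    return current < claims["exp"] and required_scope in claims["scopes"]
```

A token issued for `invoices:read` with a 60-second lifetime authorizes reads during that window and nothing else; once it expires, the agent requests a new one rather than holding a long-lived key.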

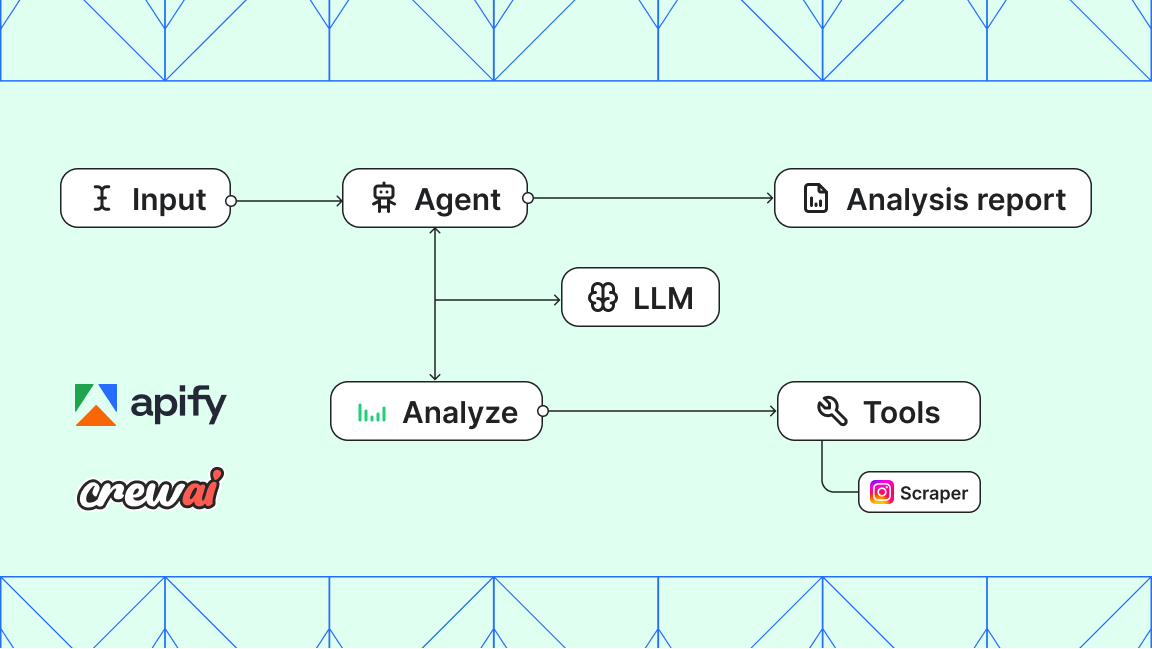

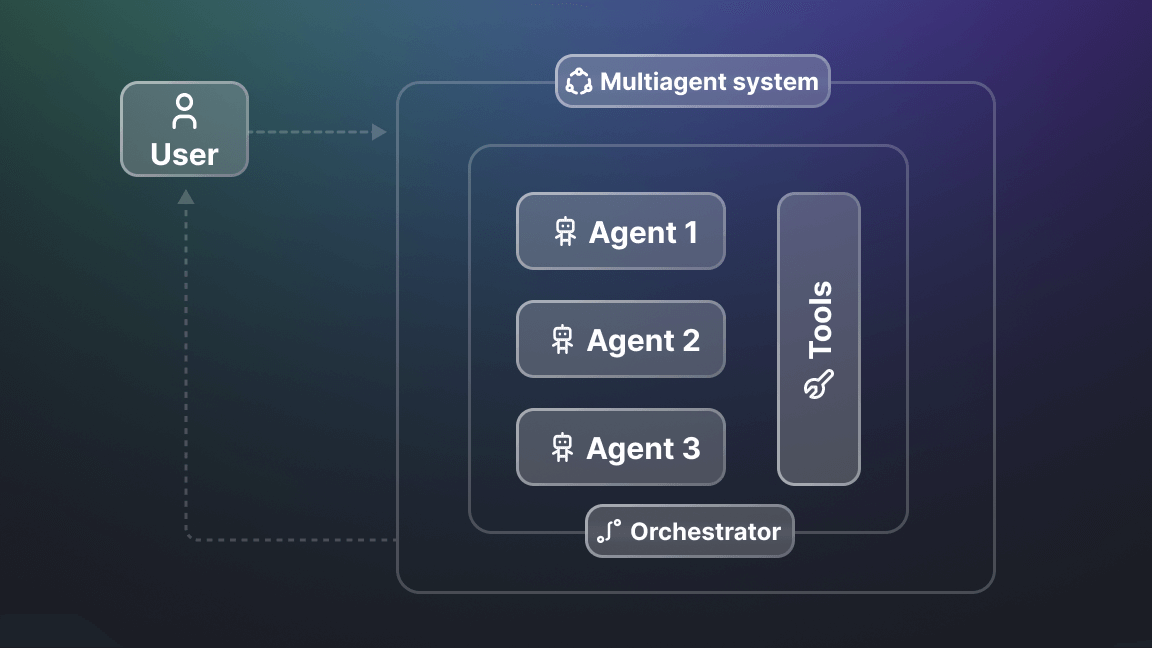

MCP servers

If you want agents to actually find and use your API, you need an MCP server. That's just the practical reality now. Anthropic's Model Context Protocol has become the interface layer between agents and external tools, with adoption across Google, Microsoft, and OpenAI's developer tooling.

Not having an MCP server is increasingly a distribution disadvantage: an API with an MCP server gets invoked by agents that were never specifically programmed to use it. But simply mapping every API endpoint to an MCP tool creates a poor agent experience: too many tools, unclear descriptions, and no workflow logic. The best MCP servers are designed around the tasks agents actually perform, consolidating multiple API calls into single, well-described operations that agents can select with confidence.
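Here is a sketch of what "designed around tasks" can mean, written as plain Python rather than against any particular MCP SDK. The `BillingApi` interface and its two methods are hypothetical; in a real server, a function like `summarize_overdue_invoices` would be registered as a single tool, with its docstring serving as the tool description.

```python
from typing import Protocol


class BillingApi(Protocol):
    """Hypothetical low-level client: one method per REST endpoint."""

    def find_customer(self, email: str) -> dict: ...
    def list_invoices(self, customer_id: str, status: str) -> list[dict]: ...


def summarize_overdue_invoices(api: BillingApi, email: str) -> dict:
    """Return the overdue invoice count and total for the customer with this email.

    Combines the customer lookup and the invoice listing so the agent
    selects one task-level operation instead of chaining two endpoints.
    """
    customer = api.find_customer(email)
    invoices = api.list_invoices(customer["id"], status="overdue")
    return {
        "customer_id": customer["id"],
        "overdue_count": len(invoices),
        "overdue_total": sum(inv["amount"] for inv in invoices),
    }


class FakeBillingApi:
    """In-memory stand-in so the sketch runs without a network."""

    def find_customer(self, email: str) -> dict:
        return {"id": "cus_1", "email": email}

    def list_invoices(self, customer_id: str, status: str) -> list[dict]:
        return [{"amount": 120}, {"amount": 80}]
```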

Pricing models

Another problem is that the usual subscription tier model doesn’t map well to how agents consume APIs. An agent may call dozens of endpoints in a single session at sub-cent cost per call, then sit idle for hours. A flat monthly fee is a poor proxy for value delivered.

The more appropriate model is event-based pricing: charging for specific actions rather than access. Apify's pay-per-event model is a working example. Actor creators define discrete chargeable events, like a scraped item or an external API call, and charge per occurrence. Users set a maximum cost per run, and the Actor checks whether another charge falls within that limit before proceeding. That’s a pricing model that makes agentic workflows commercially viable at scale.
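The budget check described above can be sketched in a few lines. This is not Apify's SDK: the `RunBudget` class, the event name, and the prices are all hypothetical, and only the check-before-charge logic is the point.

```python
from decimal import Decimal


class RunBudget:
    """Tracks per-event charges against a user-set maximum cost per run."""

    def __init__(self, max_cost: Decimal, prices: dict[str, Decimal]):
        self.max_cost = max_cost
        self.prices = prices  # price per chargeable event
        self.spent = Decimal("0")

    def try_charge(self, event: str) -> bool:
        """Charge for one event only if it still fits within the limit."""
        price = self.prices[event]
        if self.spent + price > self.max_cost:
            return False  # the caller should stop before doing the work
        self.spent += price
        return True


# Hypothetical event name and prices.
budget = RunBudget(Decimal("0.01"), {"item-scraped": Decimal("0.004")})
charges = [budget.try_charge("item-scraped") for _ in range(3)]
```

With a limit of 0.01 and a price of 0.004 per item, the first two charges succeed and the third is refused, so the run stops at 0.008 instead of overshooting. `Decimal` is used to avoid floating-point drift in the running total.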

How to prepare for the AI client base

By the end of 2026, 40% of enterprise applications are expected to be integrated with task-specific AI agents, up from less than 5% in 2025. The design assumptions built into most APIs today - that the consumer can read, infer, debug, and authenticate like a human - no longer hold. So, what needs to change?

Here's where to start:

- Audit existing specs for description quality, not just syntactic validity, and add workflow context, not just input/output.

- Check whether authentication flows work without browser interaction.

- Evaluate pricing against event-based alternatives.

- Publish an MCP server. Platforms that have done this, and have layered payment protocols like x402 and identity infrastructure like Skyfire on top, show what full agent-native access looks like in practice.
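The first item on that list is easy to automate in part. The sketch below assumes an OpenAPI-style document already loaded into a dict and flags operations whose description is missing or very short; the eight-word threshold is an arbitrary starting point, and word count is only a proxy for quality.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def audit_descriptions(spec: dict, min_words: int = 8) -> list[str]:
    """List operations whose description is missing or shorter than min_words."""
    findings = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            words = len(op.get("description", "").split())
            if words < min_words:
                findings.append(
                    f"{method.upper()} {path}: description has {words} words"
                )
    return findings


# Tiny illustrative spec fragment.
spec = {
    "paths": {
        "/data": {"get": {"description": "Gets the data"}},
        "/invoices": {
            "parameters": [],
            "get": {
                "description": "Returns a paginated list of invoices "
                "filtered by status and date range."
            },
        },
    }
}
findings = audit_descriptions(spec)
```

A check like this catches the empty and three-word descriptions; whether the longer ones carry real workflow context still needs a human or agent review.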

The providers that do this now are building for the agent client base that’s already arriving.