Two pricing models defined SaaS for most of its history: a flat monthly subscription, or a per-seat fee that scaled with headcount. Both worked because software usage was predictable. A team of fifty using a CRM next month looks a lot like a team of fifty using it this month. The cost of serving them is steady. The price can be steady too.

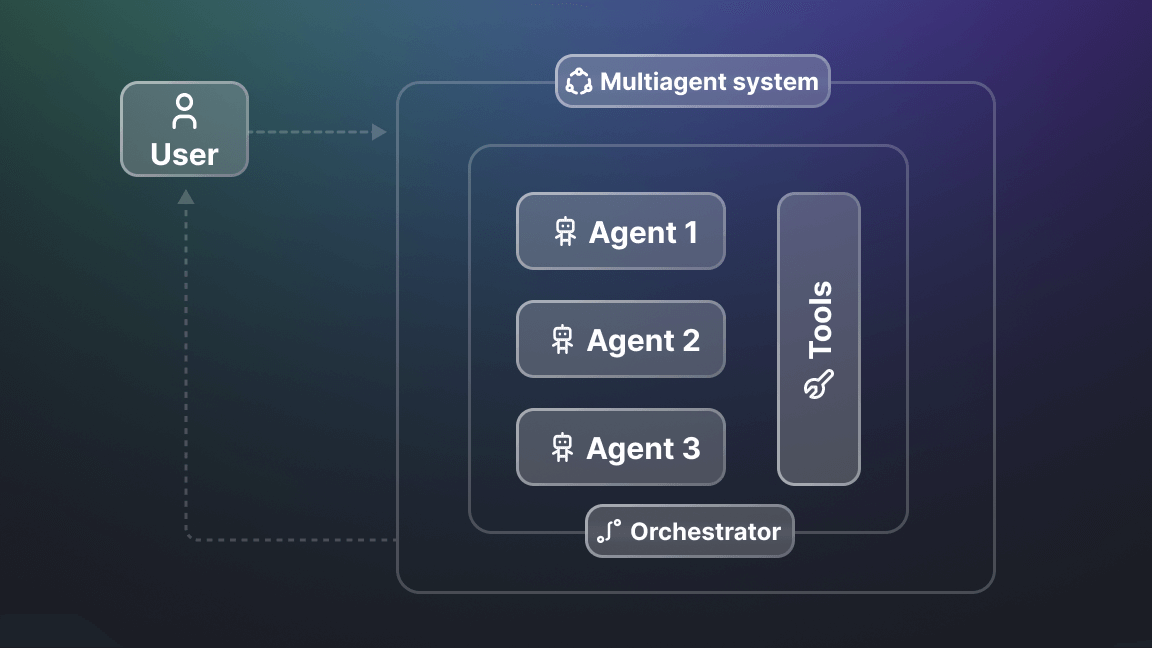

Those models break down with agents. An AI agent doesn't log in and click around at human pace; it triggers tool calls in bursts, branches based on what it finds, and can do nothing for three days, then run a hundred jobs in an hour. The cost of serving an agent isn't steady. Pricing it as if it were means overcharging customers whose agents barely run or undercharging the ones whose agents call your API ten thousand times a day.

That’s why software companies are leaning into hybrid subscription-plus-consumption models. OpenAI and Anthropic ship consumer subscriptions and per-token API pricing in the same product line. AWS has priced this way for nearly two decades, with GCP not far behind. Vercel, Modal, Apify, and most agent-adjacent infrastructure providers run hybrid models where a baseline subscription sits underneath metered usage.

But "subscription plus consumption" raises the question: which costs go on which layer? Get that wrong, and you end up with the worst of both: flat fees on variable things, and metering on costs that never change.

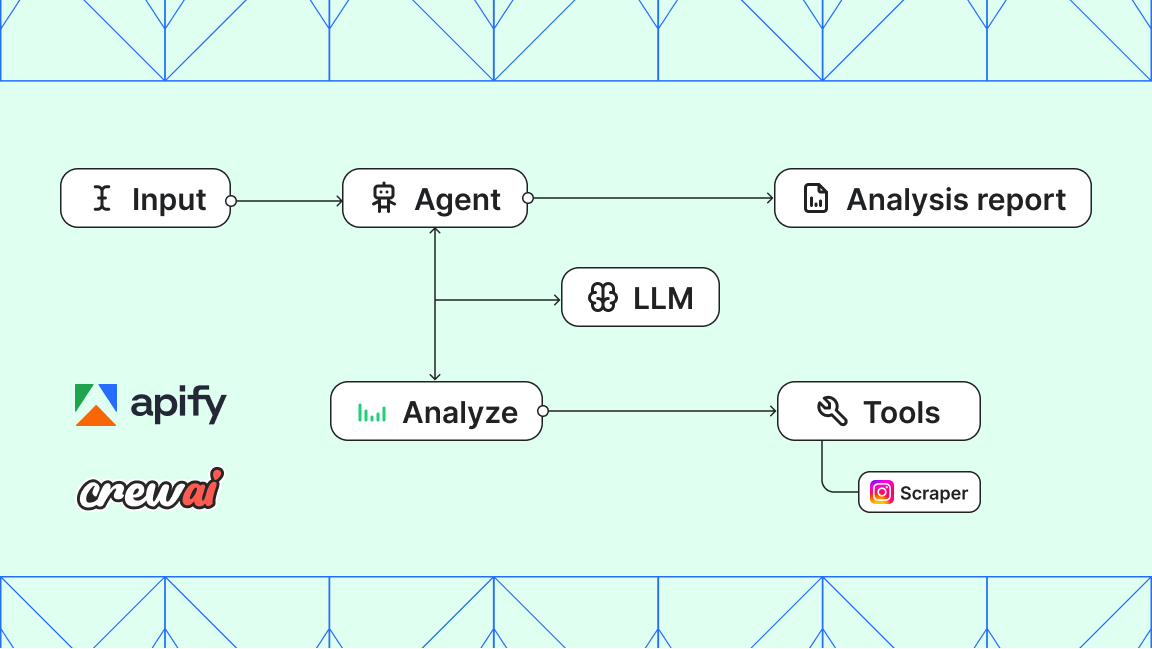

- 📖 Related reading: How to build and monetize an AI agent on Apify is a step-by-step guide to creating, publishing, and monetizing AI agents on the Apify platform using CrewAI and Python.

What gets metered, what doesn’t

The rule in a nutshell: predictable costs go on the subscription, and costs that vary with what the customer (or their agent) actually does go on the meter.

That maps cleanly onto the technical stack. Infrastructure availability (compute capacity reserved, storage allocated, concurrent runs supported) is predictable. The provider knows roughly what it costs to keep that capacity ready, and the customer knows roughly what they need. Subscription pricing matches that shape: a known fee for a known floor.

Per-action work is different. An LLM call, a scrape, a tool invocation, a request to an external API: the volume of these depends on what your agent decides to do, not what plan you’re on. Bundling them into a flat fee means the provider has to guess how much you’ll use. Guess low and the provider absorbs the overrun; guess high and you pay for capacity nobody used.
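To make the two layers concrete, here is a minimal sketch of a monthly bill that adds a flat subscription to metered per-action charges. The fee, the event names, and the unit prices are all hypothetical numbers chosen for illustration, not any provider's real rates.

```python
from decimal import Decimal

# Hypothetical figures, for illustration only.
SUBSCRIPTION_USD = Decimal("49.00")          # flat fee for reserved capacity
UNIT_PRICES_USD = {                          # metered per-action prices
    "llm-call": Decimal("0.002"),
    "page-scraped": Decimal("0.001"),
}


def monthly_bill(usage: dict[str, int]) -> dict[str, Decimal]:
    """Split a month's bill into its subscription and metered layers."""
    metered = sum(
        (UNIT_PRICES_USD[event] * count for event, count in usage.items()),
        Decimal("0"),
    )
    return {
        "subscription": SUBSCRIPTION_USD,
        "metered": metered,
        "total": SUBSCRIPTION_USD + metered,
    }


# The same customer in a quiet month and a busy month.
quiet_month = monthly_bill({"llm-call": 0, "page-scraped": 0})
busy_month = monthly_bill({"llm-call": 10_000, "page-scraped": 50_000})
```

The subscription line is identical in both months; only the metered line moves with what the agent actually did.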

- 📖 Related reading: Agentic commerce and the AI economy stack: Infrastructure for autonomous payments explains the key protocols shaping agentic commerce: MCP, ACP, x402, UCP, L402, and MPP.

Examples of the pricing split in practice

AWS: pay-as-you-go from the start

AWS set the template the rest of the industry now copies. EC2 is billed per second of compute, S3 per gigabyte stored and per request, and every other service on its own metered unit. There’s no flat subscription required to start. Reserved Instances and Enterprise Support sit on top for customers who want price predictability or volume discounts, but the underlying model has been "only pay for what you use" since 2006.

GCP: same shape, different defaults

Google Cloud Platform runs the same pay-as-you-go structure with slightly different ergonomics. Sustained-use discounts apply automatically once an instance runs long enough, without needing a contract, and per-second compute billing has been the default for years. Committed-use contracts layer in for customers who want to lock in lower rates. The split is the same as AWS: a known-rate meter underneath, optional commitments on top.

Vercel: bundled subscription, metered overages

Vercel puts the split in a slightly different place. The Pro plan runs $20 per seat per month, with each seat including 1 TB of bandwidth, 10 million edge requests, and a $20 usage credit that covers compute, function invocations, and build minutes. Anything past those caps is billed per unit consumed. Small projects get a predictable bill, big projects get a meter, and the subscription also buys you team features on top of the baseline.
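As a rough sketch of how an included credit interacts with overages, the function below uses the seat price and usage credit quoted above and treats all metered usage as a single dollar figure. That is a deliberate simplification: a real bill meters each resource separately, and the function name and the sample usage amounts are assumptions made up for this example.

```python
from decimal import Decimal

SEAT_PRICE_USD = Decimal("20")        # per seat per month, as quoted above
CREDIT_PER_SEAT_USD = Decimal("20")   # included usage credit, as quoted above


def pro_style_bill(seats: int, metered_usage_usd: Decimal) -> Decimal:
    """Seat fees plus whatever metered usage exceeds the included credit."""
    included_credit = CREDIT_PER_SEAT_USD * seats
    overage = max(metered_usage_usd - included_credit, Decimal("0"))
    return SEAT_PRICE_USD * seats + overage


# Hypothetical two-seat team: one month under the credit, one month over it.
small_month = pro_style_bill(seats=2, metered_usage_usd=Decimal("15"))
large_month = pro_style_bill(seats=2, metered_usage_usd=Decimal("100"))
```

Under the credit, the bill is just the seat fees. Past it, only the excess shows up as metered spend.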

Modal: tiered plans, per-second compute underneath

Modal’s Starter, Team, and Enterprise tiers handle support, team features, and concurrency limits, while compute is billed per second of CPU and per second of GPU at standardized rates. Compute is the real cost driver for any production workload, with the subscription mostly buying you a seat at the table. The shape is closer to AWS than to OpenAI’s API, but the hybrid pattern is the same.

OpenAI and Anthropic: pay-per-token with optional commitments

Both companies run this split on the API side. There’s no monthly subscription to start. You pay per token, and that’s where the cost actually sits for any serious workload. Enterprise tiers add commitments and rate guarantees on top (Anthropic, for instance, now structures its enterprise plans around pre-committed monthly consumption), but the underlying usage stays metered. The model handles the obvious case: a team running an agent that processes a million documents this month and zero next month should only pay for what it used.
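The million-documents-then-zero case is easy to work through. The sketch below assumes hypothetical per-million-token rates and hypothetical token counts per document; substitute the real figures for whichever model you use.

```python
from decimal import Decimal

# Hypothetical rates in USD per million tokens, for illustration only.
INPUT_USD_PER_MTOK = Decimal("3.00")
OUTPUT_USD_PER_MTOK = Decimal("15.00")
MILLION = Decimal(1_000_000)


def token_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Cost of a batch of calls under pure per-token pricing."""
    return (
        INPUT_USD_PER_MTOK * input_tokens + OUTPUT_USD_PER_MTOK * output_tokens
    ) / MILLION


def workload_cost(documents: int, in_per_doc: int, out_per_doc: int) -> Decimal:
    """Cost of processing `documents` documents of roughly uniform size."""
    return token_cost(documents * in_per_doc, documents * out_per_doc)


# Assumed document shape: 2,000 input tokens and 200 output tokens each.
this_month = workload_cost(1_000_000, 2_000, 200)
next_month = workload_cost(0, 2_000, 200)
```

With these assumed numbers, the busy month costs $9,000 and the idle month costs nothing, which is the behavior a flat fee cannot reproduce.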

The consumer subscriptions (ChatGPT Plus, Claude Pro, and the various Team and Enterprise tiers) sit on a different product line, but follow the same logic: a flat fee for predictable access, and per-token or per-message limits underneath.

Apify: subscription plus pay-per-event

Apify draws the same line one layer further down, closer to the tools your agent actually calls. The platform subscription covers compute credits, concurrent runs, storage, and proxies. These are predictable infrastructure costs that scale with the size of your operation, not with what it does. On top of that, individual tools (Actors) charge on a pay-per-event (PPE) basis. The developer of each Actor picks the events that count (names like page-scraped, api-call, or actor-start, depending on what the Actor does) and you’re billed only when those events trigger.

What’s worth noticing is what PPE actually fixes at the tool layer. Apify’s previous model priced individual Actors as monthly rentals - a flat fee for unlimited use. That worked for humans running things on a schedule, but it failed agents in two ways. You paid for tools your agent barely used. And there was no per-run spending cap, so an agent stuck in a loop just kept the meter running. PPE handles both. You only pay when an event triggers, and the tool itself enforces a per-run spending cap that you set - surfaced inside the Actor as the ACTOR_MAX_TOTAL_CHARGE_USD environment variable.
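The cap logic is simple enough to sketch in plain Python. This is not the Apify SDK: the RunBudget class, its methods, the fallback value, and the event prices are hypothetical names and numbers invented for illustration. Only the ACTOR_MAX_TOTAL_CHARGE_USD variable name comes from the text above.

```python
import os
from decimal import Decimal

# Hypothetical event prices; a real Actor's developer defines these.
EVENT_PRICES_USD = {
    "actor-start": Decimal("0.01"),
    "page-scraped": Decimal("0.002"),
}


class RunBudget:
    """Tracks spend for one run and refuses charges past the cap."""

    def __init__(self, max_total_charge_usd: Decimal):
        self.max_total = max_total_charge_usd
        self.spent = Decimal("0")

    @classmethod
    def from_env(cls, fallback_usd: str = "1.00") -> "RunBudget":
        # The fallback is an assumption for local runs without the variable.
        return cls(Decimal(os.environ.get("ACTOR_MAX_TOTAL_CHARGE_USD", fallback_usd)))

    def try_charge(self, event: str) -> bool:
        """Charge one event if it fits under the cap; otherwise charge nothing."""
        price = EVENT_PRICES_USD[event]
        if self.spent + price > self.max_total:
            return False
        self.spent += price
        return True


def scrape(urls: list[str], budget: RunBudget) -> list[str]:
    """Process URLs until the list or the budget runs out, then exit cleanly."""
    scraped: list[str] = []
    if not budget.try_charge("actor-start"):
        return scraped
    for url in urls:
        if not budget.try_charge("page-scraped"):
            break  # cap reached: stop instead of looping on
        scraped.append(url)  # the real scraping work would happen here
    return scraped


# A caller asks for 1,000 pages but caps the run at five cents.
budget = RunBudget(Decimal("0.05"))
pages = scrape([f"https://example.com/p/{i}" for i in range(1000)], budget)
```

With a $0.05 cap, the run stops after 20 pages no matter how many URLs the caller passes in. In a deployed tool the budget would come from `RunBudget.from_env()` instead of a hard-coded value.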

- 📖 Related reading: How to build an AI agent that pays for Apify Actors with Skyfire. Let your agents autonomously discover, pay for, and run web scraping tools without human intervention.

What good consumption pricing needs

Whatever the specific model (per-token, per-event, per-second of compute), consumption pricing for agents has to do three things to actually work in production.

First, it has to map to discrete actions, not opaque resource units. You should be able to look at an invoice line and figure out what it represents. "1,200 product detail extractions" is legible. "47 compute units" usually isn't.
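One way to picture a legible invoice is to roll raw billable events up into lines a human can read. The event names, labels, and prices below are hypothetical, and the line format is only one possible layout.

```python
from collections import Counter
from decimal import Decimal

# Hypothetical catalog: event name -> (human-readable label, unit price in USD).
EVENT_CATALOG = {
    "product-detail-extracted": ("product detail extractions", Decimal("0.005")),
    "search-page-scraped": ("search pages scraped", Decimal("0.001")),
}


def invoice_lines(events: list[str]) -> list[str]:
    """Aggregate raw event names into one readable line per event type."""
    counts = Counter(events)
    lines = []
    for event in sorted(counts):
        label, unit_price = EVENT_CATALOG[event]
        quantity = counts[event]
        lines.append(
            f"{quantity:,} {label} @ ${unit_price} = ${quantity * unit_price:.2f}"
        )
    return lines


# A month of raw events as a tool might log them.
events = ["product-detail-extracted"] * 1200 + ["search-page-scraped"] * 300
lines = invoice_lines(events)
```

Each line names the action, the quantity, and the unit price, so the total can be checked by hand.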

Second, spending controls have to live inside the tool, not in your orchestration layer. If you have to build cost logic into your own agent code, the pricing model has externalized its risk onto you. Token-based APIs handle this with max_tokens parameters and budget alerts; Apify does it with the per-run spending cap and an SDK flag (eventChargeLimitReached) that lets an Actor exit cleanly when it hits the budget. Either way, the tool enforces the limit, not the agent.

Third, prices need to be comparable. If two tools both claim to scrape LinkedIn profiles, but one charges per profile and the other per compute-second, you can’t build a sensible cost model around them. Agents have to pick between tools based on price, and that only works if the prices are in the same units. Standardizing on a common metering primitive is what makes a tool ecosystem usable for them.
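The comparability problem shows up as soon as you try to rank two tools in code. In this sketch, both tools and all prices are hypothetical. The per-second tool can only be compared after someone supplies an estimate of seconds per result, and the ranking flips when that estimate changes.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PerResultTool:
    name: str
    usd_per_result: Decimal

    def cost_per_result(self) -> Decimal:
        return self.usd_per_result


@dataclass(frozen=True)
class PerSecondTool:
    name: str
    usd_per_compute_second: Decimal
    est_seconds_per_result: Decimal  # an estimate the buyer has to supply

    def cost_per_result(self) -> Decimal:
        return self.usd_per_compute_second * self.est_seconds_per_result


def cheapest(tools):
    """Pick the tool with the lowest cost once prices share a unit."""
    return min(tools, key=lambda tool: tool.cost_per_result())


per_profile = PerResultTool("tool-a", Decimal("0.004"))
fast_guess = PerSecondTool("tool-b", Decimal("0.0005"), Decimal("6"))
slow_guess = PerSecondTool("tool-b", Decimal("0.0005"), Decimal("10"))

winner_if_fast = cheapest([per_profile, fast_guess]).name
winner_if_slow = cheapest([per_profile, slow_guess]).name
```

The per-result price needs no estimate at all, which is the practical argument for a shared, action-level unit.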

Where this is heading

The shift to subscription-plus-consumption isn’t really about pricing strategy. It’s about acknowledging that agents change the cost structure of software. Once a system can call your API on its own schedule and at its own volume, a flat fee stops describing the relationship.

The two-layer model (predictable infrastructure on a subscription, variable behavior on a meter) is what you get when you take that seriously. The platforms that get this right will be the ones that draw the line in the right place: subscription for what’s reserved, consumption for what’s used, and tooling that makes both legible to the people building on top.