Scraping web data from Yahoo Finance can be challenging for three reasons:

- It has a complex HTML structure

- It's updated frequently

- It requires precise CSS or XPath selectors

This guide will show you how to overcome these challenges using Python, step by step. You'll find this tutorial easy to follow, and by the end, you'll have fully functional code ready to extract the financial data you need from Yahoo Finance.

Does Yahoo Finance allow scraping?

You can generally scrape Yahoo Finance, and most of the data available on the website is publicly accessible. However, if you want to practice ethical web scraping, it's important to respect their terms of service and avoid overwhelming their servers.

How to scrape Yahoo Finance using Python

Follow this step-by-step tutorial to learn how to create a web scraper for Yahoo Finance using Python.

1. Setup and prerequisites

Before you start scraping Yahoo Finance with Python, make sure your development environment is ready:

- Install Python: Download and install the latest version of Python from the official Python website.

- Choose an IDE: Use an IDE like PyCharm, Visual Studio Code, or Jupyter Notebook for your development work.

- Basic knowledge: Make sure you understand CSS selectors and are comfortable using browser DevTools to inspect page elements.

Next, install Poetry if you don't have it yet, and use it to create a new project:

poetry new yahoo-finance-scraper

This command will generate the following project structure:

yahoo-finance-scraper/
├── pyproject.toml
├── README.md
├── yahoo_finance_scraper/
│   └── __init__.py
└── tests/
    └── __init__.py

Navigate into the project directory and install Playwright:

cd yahoo-finance-scraper
poetry add playwright
poetry run playwright install

Yahoo Finance uses JavaScript to load content dynamically. Playwright can render JavaScript, making it suitable for scraping dynamic content from Yahoo Finance.

Open the pyproject.toml file to check your project's dependencies, which should include:

[tool.poetry.dependencies]
python = "^3.12"
playwright = "^1.46.0"

Finally, create a main.py file within the yahoo_finance_scraper folder to write your scraping logic.

Your updated project structure should look like this:

yahoo-finance-scraper/
├── pyproject.toml
├── README.md
├── yahoo_finance_scraper/
│   ├── __init__.py
│   └── main.py
└── tests/
    └── __init__.py

Your environment is now set up, and you're ready to start writing the Python Playwright code to scrape Yahoo Finance.

Note: If you prefer not to set up all this on your local machine, you can deploy your code directly on Apify. Later in this tutorial, I'll show you how to deploy and run your scraper on Apify.

2. Connect to the target Yahoo Finance page

To begin, let's launch a Chromium browser instance using Playwright. While Playwright supports various browser engines, we'll use Chromium for this tutorial:

import asyncio

from playwright.async_api import async_playwright, Playwright

async def main():
    async with async_playwright() as playwright:
        browser = await playwright.chromium.launch(headless=False)  # Launch a Chromium browser
        context = await browser.new_context()
        page = await context.new_page()

if __name__ == "__main__":
    asyncio.run(main())

Because main() is a coroutine, the script runs it in an event loop with asyncio.run() inside the if __name__ == "__main__": block at the end.

Next, navigate to the Yahoo Finance page for the stock you want to scrape. The URL for a Yahoo Finance stock page looks like this:

https://finance.yahoo.com/quote/{ticker_symbol}

A ticker symbol is a unique code that identifies a publicly traded company on a stock exchange, such as AAPL for Apple Inc. or TSLA for Tesla, Inc. When the ticker symbol changes, the URL also changes. Therefore, you should replace {ticker_symbol} with the specific stock ticker you want to scrape.
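If you build URLs for many tickers, it can help to normalize the symbol first. The sketch below is a hypothetical helper, build_quote_url, not part of Playwright or the original script. It assumes that uppercasing symbols is harmless, and it percent-encodes characters such as the ^ used in index symbols (for example, ^GSPC) so the symbol always stays inside a single URL path segment.

```python
from urllib.parse import quote

BASE_QUOTE_URL = "https://finance.yahoo.com/quote/"

def build_quote_url(ticker_symbol: str) -> str:
    """Build a Yahoo Finance quote URL for a ticker symbol (hypothetical helper)."""
    # Assumption: Yahoo Finance symbols are uppercase, so normalizing is safe
    symbol = ticker_symbol.strip().upper()
    if not symbol:
        raise ValueError("ticker_symbol must not be empty")
    # safe="" also encodes "/", so a symbol can never change the URL path
    return BASE_QUOTE_URL + quote(symbol, safe="")
```

With this helper, the navigation step becomes await page.goto(build_quote_url(ticker_symbol)).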

import asyncio

from playwright.async_api import async_playwright, Playwright

async def main():
    async with async_playwright() as playwright:
        # ...
        ticker_symbol = "AAPL"  # Replace with the desired ticker symbol
        yahoo_finance_url = f"https://finance.yahoo.com/quote/{ticker_symbol}"
        await page.goto(yahoo_finance_url)  # Navigate to the Yahoo Finance page

if __name__ == "__main__":
    asyncio.run(main())

Here's the complete script so far:

import asyncio

from playwright.async_api import async_playwright, Playwright

async def main():
    async with async_playwright() as playwright:
        # Launch a Chromium browser
        browser = await playwright.chromium.launch(headless=False)
        context = await browser.new_context()
        page = await context.new_page()

        ticker_symbol = "AAPL"  # Replace with the desired ticker symbol
        yahoo_finance_url = f"https://finance.yahoo.com/quote/{ticker_symbol}"
        await page.goto(yahoo_finance_url)  # Navigate to the Yahoo Finance page

        # Wait for a few seconds
        await asyncio.sleep(3)

        # Close the browser
        await browser.close()

if __name__ == "__main__":
    asyncio.run(main())

When you run this script, it opens the Yahoo Finance page, waits three seconds, and then closes the browser.

Great! Now, you just have to change the ticker symbol to scrape data for any stock of your choice.

Note: launching the browser with the UI (headless=False) is perfect for testing and debugging. If you want to save resources and run the browser in the background, switch to headless mode:

browser = await playwright.chromium.launch(headless=True)

3. Bypass cookies modal

When accessing Yahoo Finance from a European IP address, you may encounter a cookies consent modal that needs to be addressed before you can proceed with scraping.

To continue to the desired page, you'll need to interact with the modal by clicking "Accept all" or "Reject all." To do this, right-click on the "Accept All" button and select "Inspect" to open your browser's DevTools:

In the DevTools, you can see that the button can be selected using the following CSS selector:

button.accept-all

To automate clicking this button in Playwright, you can use the following script:

import asyncio

from playwright.async_api import async_playwright, Playwright

async def main():
    async with async_playwright() as playwright:
        browser = await playwright.chromium.launch(headless=False)
        context = await browser.new_context()
        page = await context.new_page()

        ticker_symbol = "AAPL"
        url = f"https://finance.yahoo.com/quote/{ticker_symbol}"
        await page.goto(url)

        try:
            # Click the "Accept All" button to bypass the modal.
            # Give up after 5 seconds instead of the default 30 if no modal appears.
            await page.locator("button.accept-all").click(timeout=5000)
        except Exception:
            pass

        await browser.close()

# Run the main function
if __name__ == "__main__":
    asyncio.run(main())

This script will attempt to click the "Accept All" button in case the cookies consent modal shows up. This lets you continue scraping without interruption.

4. Inspect the page to select elements to scrape

To effectively scrape data, you first need to understand the DOM structure of the webpage. Suppose you want to extract the regular market price (224.72), change (+3.00), and change percent (+1.35%). These values are all contained within a div element. Inside this div, you'll find three fin-streamer elements, each representing the market price, change, and percent, respectively.

To target these elements precisely, you can use the following CSS selectors:

[data-testid="qsp-price"]

[data-testid="qsp-price-change"]

[data-testid="qsp-price-change-percent"]
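These elements return their values as text, for example "+3.00" or "+1.35%" (Yahoo Finance may also wrap the percentage in parentheses). If you plan to compute with them, a small conversion helper is useful. The parse_number function below is a sketch, not part of Playwright or the original script. It assumes commas are thousands separators and that abbreviated figures, such as market cap, use the suffixes K, M, B, and T.

```python
import re
from typing import Optional

# Assumed multipliers for abbreviated values such as "3.45T"
_SUFFIXES = {"K": 1e3, "M": 1e6, "B": 1e9, "T": 1e12}

def parse_number(text: Optional[str]) -> Optional[float]:
    """Convert scraped text like "+3.00", "(+1.35%)", or "1,234,567" to a float.

    Returns None for missing or placeholder values such as "--" or "N/A".
    """
    if text is None:
        return None
    # Drop surrounding parentheses, thousands separators, and a trailing "%"
    cleaned = text.strip().strip("()").replace(",", "").rstrip("%")
    match = re.fullmatch(r"([+-]?\d+(?:\.\d+)?)([KMBT]?)", cleaned)
    if match is None:
        return None
    number, suffix = match.groups()
    return float(number) * _SUFFIXES.get(suffix, 1)
```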

Great! Next, let's look at how to extract the market close time, which is displayed as "4 PM EDT" on the page.

To select the market close time, use this CSS selector:

div[slot="marketTimeNotice"] > span

Next, let's extract key company data, such as market cap, previous close, and volume, from the summary table.

Although this section looks like a table, it is built from multiple li elements, one per field, starting with "Previous Close" and ending with "1y Target Est".

To extract specific fields like "Previous Close" and "Open", you can use the data-field attribute, which uniquely identifies each element:

[data-field="regularMarketPreviousClose"]

[data-field="regularMarketOpen"]

The data-field attribute provides a straightforward way to select the elements. However, some fields don't have such an attribute. For instance, the "Bid" value lacks a data-field attribute or any other unique identifier. In this case, we'll first locate the "Bid" label by its text content and then move to the next sibling element to extract the corresponding value.

Here's a combined selector you can use. :has-text() is a Playwright-specific pseudo-class that matches elements containing the given text, and + selects the element that immediately follows:

span:has-text('Bid') + span.value
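Some of these fields pack two numbers into one string. "Day's Range" and "52 Week Range" show a low and a high separated by a dash. "Bid" and "Ask" typically show a price and a size separated by an "x", but that format is an assumption, so confirm it in DevTools. The hypothetical parse_pair helper below splits such strings after they are scraped.

```python
from typing import Optional, Tuple

def parse_pair(text: Optional[str], separator: str) -> Optional[Tuple[float, float]]:
    """Split strings like "221.55 - 225.99" (a range) or "224.50 x 100"
    (assumed bid/ask "price x size" format) into two floats.

    Returns None when the text is missing or not numeric on both sides.
    """
    if not text or separator not in text:
        return None
    left, right = text.split(separator, 1)
    try:
        # float() ignores surrounding whitespace; commas are thousands separators
        return float(left.replace(",", "")), float(right.replace(",", ""))
    except ValueError:
        return None
```

For example, parse_pair(data["Day's Range"], " - ") returns the day's low and high as numbers.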

5. Scrape the stock data

Now that you've identified the elements you need, it's time to write the Playwright script to extract the data from Yahoo Finance.

Let’s define a new function named scrape_data that will handle the scraping process. This function takes a ticker symbol, navigates to the Yahoo Finance page, and returns a dictionary containing the extracted financial data.

Here's how it works:

import asyncio

from playwright.async_api import async_playwright, Playwright

async def scrape_data(playwright: Playwright, ticker: str) -> dict:
    # Launch the browser in headless mode. This happens before the try block
    # so the cleanup in `finally` never refers to objects that weren't created.
    browser = await playwright.chromium.launch(headless=True)
    context = await browser.new_context()
    page = await context.new_page()

    try:
        url = f"https://finance.yahoo.com/quote/{ticker}"
        await page.goto(url, wait_until="domcontentloaded")

        try:
            # Click the "Accept All" button if present (wait at most 5 seconds)
            await page.locator("button.accept-all").click(timeout=5000)
        except Exception:
            pass  # If the button is not found, continue without any action

        data = {"Ticker": ticker}

        # Extract regular market values
        data["Regular Market Price"] = await page.locator(
            '[data-testid="qsp-price"]'
        ).text_content()
        data["Regular Market Price Change"] = await page.locator(
            '[data-testid="qsp-price-change"]'
        ).text_content()
        data["Regular Market Price Change Percent"] = await page.locator(
            '[data-testid="qsp-price-change-percent"]'
        ).text_content()

        # Extract market close time
        market_close_time = await page.locator(
            'div[slot="marketTimeNotice"] > span'
        ).first.text_content()
        data["Market Close Time"] = market_close_time.replace("At close: ", "")

        # Extract other financial metrics
        data["Previous Close"] = await page.locator(
            '[data-field="regularMarketPreviousClose"]'
        ).text_content()
        data["Open Price"] = await page.locator(
            '[data-field="regularMarketOpen"]'
        ).text_content()
        data["Bid"] = await page.locator(
            "span:has-text('Bid') + span.value"
        ).text_content()
        data["Ask"] = await page.locator(
            "span:has-text('Ask') + span.value"
        ).text_content()
        data["Day's Range"] = await page.locator(
            '[data-field="regularMarketDayRange"]'
        ).text_content()
        data["52 Week Range"] = await page.locator(
            '[data-field="fiftyTwoWeekRange"]'
        ).text_content()
        data["Volume"] = await page.locator(
            '[data-field="regularMarketVolume"]'
        ).text_content()
        data["Avg. Volume"] = await page.locator(
            '[data-field="averageVolume"]'
        ).text_content()
        data["Market Cap"] = await page.locator(
            '[data-field="marketCap"]'
        ).text_content()
        data["Beta"] = await page.locator(
            "span:has-text('Beta (5Y Monthly)') + span.value"
        ).text_content()
        data["PE Ratio"] = await page.locator(
            "span:has-text('PE Ratio (TTM)') + span.value"
        ).text_content()
        data["EPS"] = await page.locator(
            "span:has-text('EPS (TTM)') + span.value"
        ).text_content()
        data["Earnings Date"] = await page.locator(
            "span:has-text('Earnings Date') + span.value"
        ).text_content()
        data["Dividend & Yield"] = await page.locator(
            "span:has-text('Forward Dividend & Yield') + span.value"
        ).text_content()
        data["Ex-Dividend Date"] = await page.locator(
            "span:has-text('Ex-Dividend Date') + span.value"
        ).text_content()
        data["1y Target Est"] = await page.locator(
            '[data-field="targetMeanPrice"]'
        ).text_content()

        return data

    except Exception as e:
        print(f"An error occurred while processing {ticker}: {e}")
        return {"Ticker": ticker, "Error": str(e)}

    finally:
        await context.close()
        await browser.close()

The code extracts data using the identified CSS selectors, utilizes the locator method to fetch each element, and then applies the text_content() method to retrieve the text from these elements. The extracted metrics are stored in a dictionary, where each key represents a financial metric, and the corresponding value is the extracted text.
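Every field follows the same locate-then-read pattern, so you could drive the extraction from a dictionary of selectors instead of repeating the call for each field. The sketch below shows one way to do that; it is not the script's actual structure. FIELD_SELECTORS and extract_fields are hypothetical names, and only a few of the selectors are included. A missing element is recorded as None after a 5-second timeout instead of failing the whole ticker. The helper relies on Playwright's Locator.first property and the timeout argument of text_content().

```python
from typing import Any, Dict, Optional

# Hypothetical subset of the selectors identified in step 4; extend as needed
FIELD_SELECTORS: Dict[str, str] = {
    "Regular Market Price": '[data-testid="qsp-price"]',
    "Previous Close": '[data-field="regularMarketPreviousClose"]',
    "Bid": "span:has-text('Bid') + span.value",
}

async def extract_fields(
    page: Any, selectors: Dict[str, str], timeout_ms: float = 5000
) -> Dict[str, Optional[str]]:
    """Read the text of the first element matching each selector.

    A field whose element is missing (or times out) becomes None
    instead of aborting the whole scrape.
    """
    results: Dict[str, Optional[str]] = {}
    for label, selector in selectors.items():
        try:
            text = await page.locator(selector).first.text_content(timeout=timeout_ms)
        except Exception:
            text = None
        results[label] = text.strip() if text else None
    return results
```

Inside scrape_data, you could replace the individual lookups with data.update(await extract_fields(page, FIELD_SELECTORS)).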

Finally, define the main function that runs the scraper for a ticker and prints the collected data:

async def main():
    # Define the ticker symbol
    ticker = "AAPL"

    async with async_playwright() as playwright:
        # Collect data for the ticker
        data = await scrape_data(playwright, ticker)
        print(data)

# Run the main function
if __name__ == "__main__":
    asyncio.run(main())

At the end of the scraping process, the extracted data is printed to the console as a dictionary.

6. Scrape historical stock data

After getting real-time data, let's look at Yahoo Finance's historical stock information. This data shows how a stock performed in the past, which helps with investment choices. You can get daily, weekly, or monthly data for different periods — last month, last year, or the stock's entire history.

To access historical stock data on Yahoo Finance, you need to customize the URL by modifying specific parameters:

- frequency: Specifies the data interval, such as daily (1d), weekly (1wk), or monthly (1mo).

- period1 and period2: These parameters set the start and end dates for the data in Unix timestamp format.

For example, the below URL retrieves weekly historical data for Amazon (AMZN) from August 16, 2023, to August 16, 2024:

https://finance.yahoo.com/quote/AMZN/history/?frequency=1wk&period1=1692172771&period2=1723766400
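You can assemble this URL from its parts with Python's urllib.parse.urlencode, which also catches parameter typos early. The build_history_url function below is a hypothetical sketch. It accepts only the three frequencies listed above and assumes period1 must be earlier than period2.

```python
from urllib.parse import quote, urlencode

# Frequencies described above; other values are rejected by assumption
ALLOWED_FREQUENCIES = {"1d", "1wk", "1mo"}

def build_history_url(ticker: str, frequency: str, period1: int, period2: int) -> str:
    """Build a Yahoo Finance historical-data URL (hypothetical helper)."""
    if frequency not in ALLOWED_FREQUENCIES:
        raise ValueError(f"frequency must be one of {sorted(ALLOWED_FREQUENCIES)}")
    if period1 >= period2:
        raise ValueError("period1 (start) must be earlier than period2 (end)")
    # urlencode keeps the insertion order: frequency, period1, period2
    query = urlencode({"frequency": frequency, "period1": period1, "period2": period2})
    return f"https://finance.yahoo.com/quote/{quote(ticker, safe='')}/history/?{query}"
```

Calling build_history_url("AMZN", "1wk", 1692172771, 1723766400) reproduces the example URL above.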

When you navigate to this URL, you’ll see a table containing the historical data. In our case, the data shown is for the last year with a weekly interval.

To extract this data, use Playwright's query_selector_all method with the CSS selector .table tbody tr:

rows = await page.query_selector_all(".table tbody tr")

Each row contains multiple cells (td elements) that hold the data. Here's how to extract the text content from each cell:

for row in rows:
    cells = await row.query_selector_all("td")
    # Skip rows without all seven columns (such as dividend or split entries)
    if len(cells) < 7:
        continue
    date = await cells[0].text_content()
    open_price = await cells[1].text_content()
    high_price = await cells[2].text_content()
    low_price = await cells[3].text_content()
    close_price = await cells[4].text_content()
    adj_close = await cells[5].text_content()
    volume = await cells[6].text_content()
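The cell texts are still strings. The hypothetical parse_history_row helper sketched below converts one row's texts (date through volume, collected into a list) into numbers. It assumes the seven-column order used in the loop. Any row with a different cell count, such as a dividend or split entry, is treated as a row to skip.

```python
from typing import Dict, List, Optional, Union

# Column order of the historical table, as read by the loop above
COLUMNS = ["date", "open", "high", "low", "close", "adj_close", "volume"]

Value = Union[str, float, int, None]

def _to_float(text: str) -> Optional[float]:
    try:
        return float(text.replace(",", ""))
    except ValueError:
        return None  # placeholders such as "-"

def parse_history_row(cells: List[str]) -> Optional[Dict[str, Value]]:
    """Turn the seven cell texts of one table row into typed values.

    Returns None for rows with a different number of cells, which are
    assumed to be dividend or split entries rather than prices.
    """
    if len(cells) != len(COLUMNS):
        return None
    row: Dict[str, Value] = {"date": cells[0].strip()}
    for name, text in zip(COLUMNS[1:6], cells[1:6]):
        row[name] = _to_float(text)
    volume_text = cells[6].strip().replace(",", "")
    row["volume"] = int(volume_text) if volume_text.isdigit() else None
    return row
```

Inside the loop, you could call it as parse_history_row([await cell.text_content() or "" for cell in cells]).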

Next, create a function to generate Unix timestamps, which we'll use to define the start (period1) and end (period2) dates for the data:

import time

def get_unix_timestamp(
    years_back: int = 0,
    months_back: int = 0,
    days_back: int = 0
) -> int:
    """Get a Unix timestamp for a specified number of years, months, or days back from today."""
    current_time = time.time()
    seconds_in_day = 86400
    # Approximation: a year is 365 days and a month is 30 days
    return int(
        current_time
        - (years_back * 365 + months_back * 30 + days_back) * seconds_in_day
    )
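get_unix_timestamp approximates a year as 365 days and a month as 30 days, so its start dates can drift by a day or more around leap years and months of other lengths. If you need exact calendar boundaries, a sketch using Python's datetime module could look like this. The function names are hypothetical, and the sketch assumes midnight UTC is the boundary you want.

```python
from datetime import date, datetime, timezone

def to_unix_timestamp(day: date) -> int:
    """Unix timestamp for midnight UTC at the start of the given calendar day."""
    return int(datetime(day.year, day.month, day.day, tzinfo=timezone.utc).timestamp())

def shift_years(day: date, years: int) -> date:
    """The same calendar day `years` earlier; February 29 falls back to February 28."""
    try:
        return day.replace(year=day.year - years)
    except ValueError:
        return day.replace(year=day.year - years, day=28)
```

For exactly one year of data ending today, use period1 = to_unix_timestamp(shift_years(date.today(), 1)) and period2 = to_unix_timestamp(date.today()).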

Now, let's write a function to scrape historical data:

from playwright.async_api import async_playwright, Playwright

async def scrape_historical_data(
    playwright: Playwright,
    ticker: str,
    frequency: str,
    period1: int,
    period2: int
):
    url = f"https://finance.yahoo.com/quote/{ticker}/history?frequency={frequency}&period1={period1}&period2={period2}"

    browser = await playwright.chromium.launch(headless=True)
    context = await browser.new_context()
    page = await context.new_page()
    await page.goto(url, wait_until="domcontentloaded")

    try:
        # Dismiss the cookies modal if it appears (wait at most 5 seconds)
        await page.locator("button.accept-all").click(timeout=5000)
    except Exception:
        pass

    # Wait for the table to load
    await page.wait_for_selector(".table-container")

    # Extract table rows
    rows = await page.query_selector_all(".table tbody tr")

    # Prepare data storage
    data = []

    for row in rows:
        cells = await row.query_selector_all("td")
        # Skip rows without all seven columns (such as dividend or split entries)
        if len(cells) < 7:
            continue
        date = await cells[0].text_content()
        open_price = await cells[1].text_content()
        high_price = await cells[2].text_content()
        low_price = await cells[3].text_content()
        close_price = await cells[4].text_content()
        adj_close = await cells[5].text_content()
        volume = await cells[6].text_content()

        # Add row data to list
        data.append(
            [date, open_price, high_price, low_price, close_price, adj_close, volume]
        )

    print(data)

    await context.close()
    await browser.close()

    return data

The scrape_historical_data function constructs the Yahoo Finance URL using the given parameters, navigates to the page while managing any cookie prompts, waits for the historical data table to load fully, and then extracts and prints the relevant data to the console.

Finally, here's how to run the script with different configurations:

async def main():
    async with async_playwright() as playwright:
        ticker = "TSLA"

        # Weekly data for last year
        period1 = get_unix_timestamp(years_back=1)
        period2 = get_unix_timestamp()
        weekly_data = await scrape_historical_data(
            playwright, ticker, "1wk", period1, period2
        )

# Run the main function
if __name__ == "__main__":
    asyncio.run(main())

Customize the data period and frequency by adjusting the parameters:

# Inside main(), after defining ticker:

# Daily data for the last month
period1 = get_unix_timestamp(months_back=1)
period2 = get_unix_timestamp()
await scrape_historical_data(playwright, ticker, "1d", period1, period2)

# Monthly data for the stock's lifetime
period1 = 1
period2 = 999999999999
await scrape_historical_data(playwright, ticker, "1mo", period1, period2)

Here’s the complete script so far to scrape the historical data from Yahoo Finance:

import asyncio
import time

from playwright.async_api import async_playwright, Playwright

def get_unix_timestamp(
    years_back: int = 0, months_back: int = 0, days_back: int = 0
) -> int:
    """Get a Unix timestamp for a specified number of years, months, or days back from today."""
    current_time = time.time()
    seconds_in_day = 86400
    return int(
        current_time
        - (years_back * 365 + months_back * 30 + days_back) * seconds_in_day
    )

async def scrape_historical_data(
    playwright: Playwright, ticker: str, frequency: str, period1: int, period2: int
):
    url = f"https://finance.yahoo.com/quote/{ticker}/history?frequency={frequency}&period1={period1}&period2={period2}"

    browser = await playwright.chromium.launch(headless=True)
    context = await browser.new_context()
    page = await context.new_page()
    await page.goto(url, wait_until="domcontentloaded")

    try:
        # Dismiss the cookies modal if it appears (wait at most 5 seconds)
        await page.locator("button.accept-all").click(timeout=5000)
    except Exception:
        pass

    # Wait for the table to load
    await page.wait_for_selector(".table-container")

    # Extract table rows
    rows = await page.query_selector_all(".table tbody tr")

    # Prepare data storage
    data = []

    for row in rows:
        cells = await row.query_selector_all("td")
        # Skip rows without all seven columns (such as dividend or split entries)
        if len(cells) < 7:
            continue
        date = await cells[0].text_content()
        open_price = await cells[1].text_content()
        high_price = await cells[2].text_content()
        low_price = await cells[3].text_content()
        close_price = await cells[4].text_content()
        adj_close = await cells[5].text_content()
        volume = await cells[6].text_content()

        # Add row data to list
        data.append(
            [date, open_price, high_price, low_price, close_price, adj_close, volume]
        )

    print(data)

    await context.close()
    await browser.close()

    return data

async def main() -> None:
    async with async_playwright() as playwright:
        ticker = "TSLA"

        # Weekly data for the last year
        period1 = get_unix_timestamp(years_back=1)
        period2 = get_unix_timestamp()
        weekly_data = await scrape_historical_data(
            playwright, ticker, "1wk", period1, period2
        )

if __name__ == "__main__":
    asyncio.run(main())

Run this script to print all the historical stock data to the console based on your specified parameters.

7. Scrape multiple stocks

So far, we've scraped data for a single stock. To gather data for multiple stocks at once, we can modify the script to accept ticker symbols as command-line arguments and process each one.

import asyncio
import sys

from playwright.async_api import async_playwright

# scrape_data() is defined as in step 5

async def main() -> None:
    if len(sys.argv) < 2:
        print("Please provide at least one ticker symbol as a command-line argument.")
        return

    tickers = sys.argv[1:]

    async with async_playwright() as playwright:
        # Collect data for all tickers
        all_data = []
        for ticker in tickers:
            data = await scrape_data(playwright, ticker)
            all_data.append(data)

        print(all_data)

# Run the main function
if __name__ == "__main__":
    asyncio.run(main())

To run the script, pass the ticker symbols as arguments:

poetry run python yahoo_finance_scraper/main.py AAPL MSFT TSLA

This will scrape and display data for Apple Inc. (AAPL), Microsoft Corporation (MSFT), and Tesla Inc. (TSLA).
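Reading sys.argv directly works, but Python's standard argparse module also prints a usage message and exits with an error when no ticker is passed. The parse_tickers function below is a hypothetical replacement for the sys.argv check. It also assumes you want symbols uppercased and duplicates removed.

```python
import argparse
from typing import List, Optional, Sequence

def parse_tickers(argv: Optional[Sequence[str]] = None) -> List[str]:
    """Parse ticker symbols from the command line (sys.argv[1:] when argv is None)."""
    parser = argparse.ArgumentParser(description="Scrape Yahoo Finance quote pages.")
    parser.add_argument("tickers", nargs="+", help="One or more ticker symbols, e.g. AAPL MSFT")
    args = parser.parse_args(argv)
    # Uppercase and drop duplicates while keeping the original order
    return list(dict.fromkeys(t.strip().upper() for t in args.tickers))
```

In main(), tickers = parse_tickers() would then replace the len(sys.argv) check and the sys.argv[1:] slice.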

8. Avoid getting blocked

Websites often detect and block automated scraping using rate limits, IP blocks, and analysis of browsing patterns. Here are some effective ways to stay undetected when web scraping:

1. Random Intervals Between Requests

Adding random delays between requests is a simple way to avoid detection. This basic method can make your scraping less obvious to websites.

Here's how to add random delays in your Playwright script:

import asyncio
import random

from playwright.async_api import Playwright, async_playwright

async def scrape_data(playwright: Playwright, ticker: str):
    browser = await playwright.chromium.launch()
    context = await browser.new_context()
    page = await context.new_page()

    url = f"https://example.com/{ticker}"  # Example URL
    await page.goto(url)

    # Random delay to mimic human-like behavior
    await asyncio.sleep(random.uniform(2, 5))

    # Your scraping logic here...

    await context.close()
    await browser.close()

async def main():
    async with async_playwright() as playwright:
        await scrape_data(playwright, "AAPL")  # Example ticker

if __name__ == "__main__":
    asyncio.run(main())

This script waits a random 2 to 5 seconds after loading the page. When the function runs once per ticker, these pauses space out consecutive requests unpredictably and reduce the likelihood of being flagged as a bot.
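When you scrape several tickers in a row, you can also put the randomness between the requests themselves. Below is a sketch of a hypothetical run_with_random_delays helper. It awaits a scraping coroutine for each ticker and sleeps a random interval between calls, but not before the first one. Passing your own random.Random instance is optional and makes the delays reproducible.

```python
import asyncio
import random
from typing import Awaitable, Callable, Iterable, List, Optional, TypeVar

R = TypeVar("R")

async def run_with_random_delays(
    tickers: Iterable[str],
    worker: Callable[[str], Awaitable[R]],
    min_delay: float = 2.0,
    max_delay: float = 5.0,
    rng: Optional[random.Random] = None,
) -> List[R]:
    """Await worker(ticker) for each ticker, sleeping a random interval between calls."""
    rng = rng or random.Random()
    results: List[R] = []
    for index, ticker in enumerate(tickers):
        if index > 0:
            # No pause before the first request, a random one before each later request
            await asyncio.sleep(rng.uniform(min_delay, max_delay))
        results.append(await worker(ticker))
    return results
```

Inside the async with block, all_data = await run_with_random_delays(tickers, lambda t: scrape_data(playwright, t)) would replace the plain for loop.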

2. Setting and Switching User-Agents

Websites often use User-Agent strings to identify the browser and device behind each request. By rotating User-Agent strings, you can make your scraping requests appear to come from different browsers and devices, helping you avoid detection.

Here's how to implement User-Agent rotation in Playwright:

import asyncio
import random

from playwright.async_api import Playwright, async_playwright

async def scrape_data(playwright: Playwright, ticker: str) -> None:
    browser = await playwright.chromium.launch(headless=True)

    # List of user-agents
    user_agents = [
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36",
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:91.0) Gecko/20100101 Firefox/91.0",
    ]

    # Select a random user-agent from the list to rotate between requests
    user_agent = random.choice(user_agents)

    # Set the chosen user-agent when creating the browser context
    context = await browser.new_context(user_agent=user_agent)
    page = await context.new_page()

    url = f"https://example.com/{ticker}"  # Example URL with ticker
    await page.goto(url)

    # Your scraping logic goes here...

    await context.close()
    await browser.close()

async def main():
    async with async_playwright() as playwright:
        await scrape_data(playwright, "AAPL")  # Example ticker

if __name__ == "__main__":
    asyncio.run(main())

This method uses a list of User-Agent strings and randomly selects one for each request. This technique helps mask your scraper's identity and reduces the likelihood of being blocked.

Note: You can refer to websites like useragentstring.com to get a comprehensive list of User-Agent strings.
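random.choice can pick the same User-Agent several times in a row. If you'd rather spread requests evenly, one option is round-robin rotation with itertools.cycle. This is a sketch: user_agent_rotation is a hypothetical name, and the strings are the same examples used above. Pass the next value to browser.new_context(user_agent=...) each time you create a context.

```python
import itertools
from typing import Iterator, List

USER_AGENTS: List[str] = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/91.0.4472.124 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:91.0) Gecko/20100101 Firefox/91.0",
]

def user_agent_rotation(agents: List[str]) -> Iterator[str]:
    """Return an endless iterator over user-agent strings in round-robin order."""
    if not agents:
        raise ValueError("agents must not be empty")
    return itertools.cycle(agents)
```

Usage: create rotation = user_agent_rotation(USER_AGENTS) once, then call await browser.new_context(user_agent=next(rotation)) for each ticker.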

3. Using Playwright-Stealth

To further reduce the chance of detection, you can use the playwright-stealth library, which applies various evasion techniques to make your automated browser look more like one operated by a real user.

First, install playwright-stealth:

poetry add playwright-stealth

Then, modify your script:

import asyncio

from playwright.async_api import Playwright, async_playwright
from playwright_stealth import stealth_async

async def scrape_data(playwright: Playwright, ticker: str) -> None:
    browser = await playwright.chromium.launch(headless=True)
    context = await browser.new_context()
    page = await context.new_page()

    # Apply stealth techniques to the page before navigating
    await stealth_async(page)

    url = f"https://finance.yahoo.com/quote/{ticker}"
    await page.goto(url)

    # Your scraping logic here...

    await context.close()
    await browser.close()

async def main():
    async with async_playwright() as playwright:
        await scrape_data(playwright, "AAPL")  # Example ticker

if __name__ == "__main__":
    asyncio.run(main())

These techniques can help avoid blocking, but you might still face issues. If so, try more advanced methods like using proxies, rotating IP addresses, or implementing CAPTCHA solvers. You can check out the detailed guide 21 tips to crawl websites without getting blocked. It’s your go-to guide on choosing proxies wisely, fighting Cloudflare, solving CAPTCHAs, avoiding honeytraps, and more.


9. Export scraped stock data to CSV

After scraping the desired stock data, the next step is to export it into a CSV file to make it easy to analyze, share with others, or import into other data processing tools.

Here's how you can save the extracted data to a CSV file:

# ...
import csv

async def main() -> None:
    # ...

    async with async_playwright() as playwright:
        # Collect data for all tickers
        all_data = []
        for ticker in tickers:
            data = await scrape_data(playwright, ticker)
            all_data.append(data)

    # Define the CSV file name
    csv_file = "stock_data.csv"

    # Build the header from every key seen, so a ticker that failed (and only
    # has "Ticker" and "Error") doesn't break the writer; gaps are left empty
    fieldnames = list(dict.fromkeys(key for row in all_data for key in row))

    # Write the data to a CSV file
    with open(csv_file, mode="w", newline="", encoding="utf-8") as file:
        writer = csv.DictWriter(file, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(all_data)

if __name__ == "__main__":
    asyncio.run(main())

The code first gathers data for each ticker symbol. It then creates a CSV file named stock_data.csv and uses Python's csv.DictWriter class to write the data: the writeheader() method writes the column headers, and the writerows() method adds each row of data.

10. Putting everything together

Let’s pull everything together into a single script. This final code snippet includes all the steps from scraping data from Yahoo Finance to exporting it to a CSV file.

import asyncio
import csv
import sys

from playwright.async_api import async_playwright, Playwright

async def scrape_data(playwright: Playwright, ticker: str) -> dict:
    """
    Extracts financial data from Yahoo Finance for a given stock ticker.

    Args:
        playwright (Playwright): The Playwright instance used to control the browser.
        ticker (str): The stock ticker symbol to retrieve data for.

    Returns:
        dict: A dictionary containing the extracted financial data for the given ticker.
    """
    # Launch a headless browser before the try block so the cleanup in
    # `finally` never refers to objects that weren't created
    browser = await playwright.chromium.launch(headless=True)
    context = await browser.new_context()
    page = await context.new_page()

    try:
        # Form the URL using the ticker symbol
        url = f"https://finance.yahoo.com/quote/{ticker}"

        # Navigate to the page and wait for the DOM content to load
        await page.goto(url, wait_until="domcontentloaded")

        # Try to click the "Accept All" button for cookies, if it exists
        try:
            await page.locator("button.accept-all").click(timeout=5000)
        except Exception:
            pass  # If the button is not found, continue without any action

        # Dictionary to store the extracted data
        data = {"Ticker": ticker}

        # Extract regular market values
        data["Regular Market Price"] = await page.locator(
            '[data-testid="qsp-price"]'
        ).text_content()
        data["Regular Market Price Change"] = await page.locator(
            '[data-testid="qsp-price-change"]'
        ).text_content()
        data["Regular Market Price Change Percent"] = await page.locator(
            '[data-testid="qsp-price-change-percent"]'
        ).text_content()

        # Extract market close time
        market_close_time = await page.locator(
            'div[slot="marketTimeNotice"] > span'
        ).first.text_content()
        data["Market Close Time"] = market_close_time.replace("At close: ", "")

        # Extract other financial metrics
        data["Previous Close"] = await page.locator(
            '[data-field="regularMarketPreviousClose"]'
        ).text_content()
        data["Open Price"] = await page.locator(
            '[data-field="regularMarketOpen"]'
        ).text_content()
        data["Bid"] = await page.locator(
            "span:has-text('Bid') + span.value"
        ).text_content()
        data["Ask"] = await page.locator(
            "span:has-text('Ask') + span.value"
        ).text_content()
        data["Day's Range"] = await page.locator(
            '[data-field="regularMarketDayRange"]'
        ).text_content()
        data["52 Week Range"] = await page.locator(
            '[data-field="fiftyTwoWeekRange"]'
        ).text_content()
        data["Volume"] = await page.locator(
            '[data-field="regularMarketVolume"]'
        ).text_content()
        data["Avg. Volume"] = await page.locator(
            '[data-field="averageVolume"]'
        ).text_content()
        data["Market Cap"] = await page.locator(
            '[data-field="marketCap"]'
        ).text_content()
        data["Beta"] = await page.locator(
            "span:has-text('Beta (5Y Monthly)') + span.value"
        ).text_content()
        data["PE Ratio"] = await page.locator(
            "span:has-text('PE Ratio (TTM)') + span.value"
        ).text_content()
        data["EPS"] = await page.locator(
            "span:has-text('EPS (TTM)') + span.value"
        ).text_content()
        data["Earnings Date"] = await page.locator(
            "span:has-text('Earnings Date') + span.value"
        ).text_content()
        data["Dividend & Yield"] = await page.locator(
            "span:has-text('Forward Dividend & Yield') + span.value"
        ).text_content()
        data["Ex-Dividend Date"] = await page.locator(
            "span:has-text('Ex-Dividend Date') + span.value"
        ).text_content()
        data["1y Target Est"] = await page.locator(
            '[data-field="targetMeanPrice"]'
        ).text_content()

        return data

    except Exception as e:
        # Handle any exceptions and return an error message
        print(f"An error occurred while processing {ticker}: {e}")
        return {"Ticker": ticker, "Error": str(e)}

    finally:
        # Ensure the browser is closed even if an error occurs
        await context.close()
        await browser.close()

async def main() -> None:
    """
    Main function to run the Yahoo Finance data extraction for multiple tickers.

    Reads ticker symbols from command-line arguments, extracts data for each,
    and saves the results to a CSV file.
    """
    if len(sys.argv) < 2:
        print("Please provide at least one ticker symbol as a command-line argument.")
        return

    tickers = sys.argv[1:]

    # Use async_playwright context to handle browser automation
    async with async_playwright() as playwright:
        # List to store data for all tickers
        all_data = []
        for ticker in tickers:
            # Extract data for each ticker and add it to the list
            data = await scrape_data(playwright, ticker)
            all_data.append(data)

    # Define the CSV file name
    csv_file = "stock_data.csv"

    # Build the header from every key seen, so a ticker that failed (and only
    # has "Ticker" and "Error") doesn't break the writer; gaps are left empty
    fieldnames = list(dict.fromkeys(key for row in all_data for key in row))

    # Write the extracted data to a CSV file
    with open(csv_file, mode="w", newline="", encoding="utf-8") as file:
        writer = csv.DictWriter(file, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(all_data)

    print(f"Data for tickers {', '.join(tickers)} has been saved to {csv_file}")

# Run the main function using asyncio
if __name__ == "__main__":
    asyncio.run(main())

You can run the script from the terminal by providing one or more stock ticker symbols as command-line arguments.

poetry run python yahoo_finance_scraper/main.py AAPL GOOG TSLA AMZN META

After the script finishes, a CSV file named stock_data.csv is created in the directory you ran it from. This file contains all the data in an organized way. The CSV file will look like this:

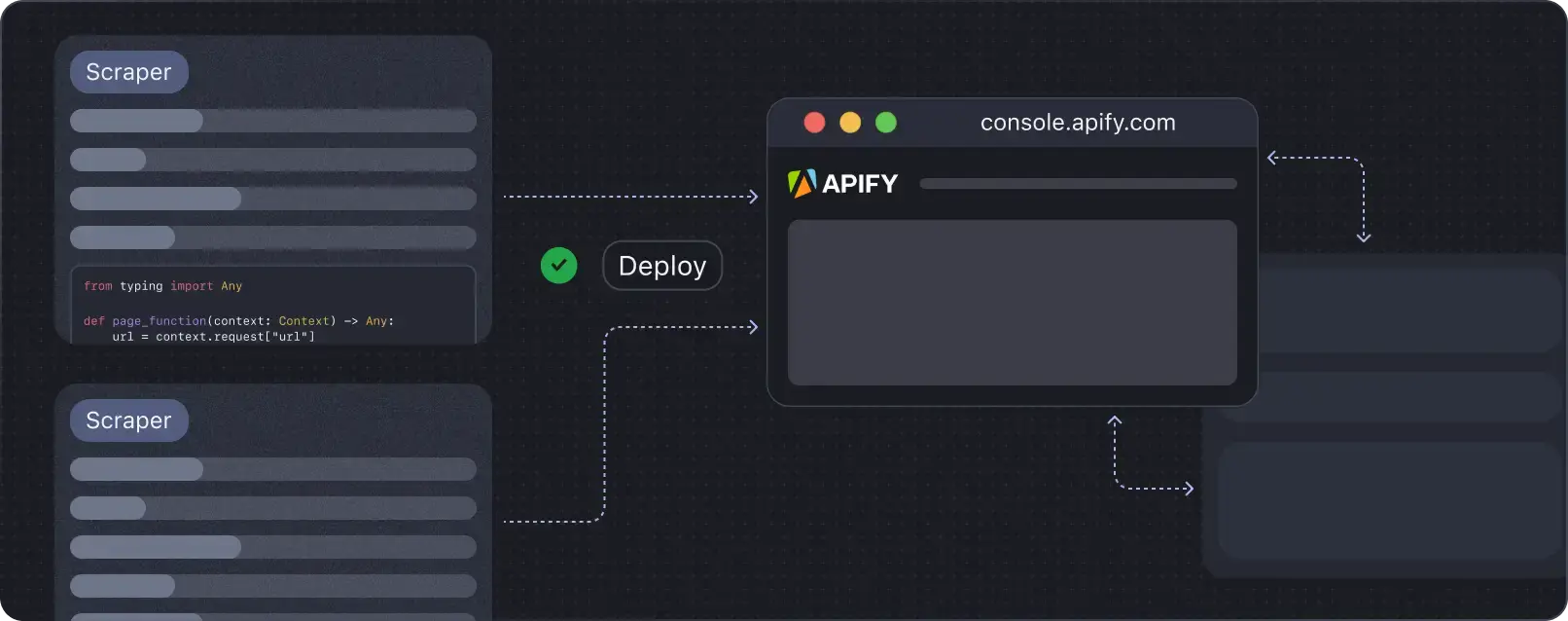

11. Deploying the code to Apify

With your scraper ready, it’s time to deploy it to the cloud using Apify. This will allow you to run your scraper on a schedule and utilize Apify’s powerful features. For this task, we’ll use the Python Playwright template for a quick setup. On Apify, scrapers are called Actors.

We'll start from the Playwright + Chrome template in Apify's Python template collection. To create a project from it, first install the Apify CLI, which you'll use to create, run, and deploy your Actor. On macOS or Linux, you can install it with Homebrew:

```shell
brew install apify/tap/apify-cli
```

Or, via npm:

```shell
npm install -g apify-cli
```

With the CLI installed, create a new Actor from the Python Playwright + Chrome template:

```shell
apify create yf-scraper -t python-playwright
```

This command sets up a project named yf-scraper in the current directory, installs the necessary dependencies, and adds some boilerplate code to get you started.

Navigate to your new project folder and open it in your favorite code editor. In this example, I’m using VS Code:

```shell
cd yf-scraper
code .
```

The template comes with a fully functional scraper. You can test it by running the command apify run to see it in action. The results will be saved in storage/datasets.

Next, modify the code in src/main.py to tailor it for scraping Yahoo Finance.

Here’s the modified code:

```python
from playwright.async_api import async_playwright

from apify import Actor


async def extract_stock_data(page, ticker):
    data = {"Ticker": ticker}

    data["Regular Market Price"] = await page.locator(
        '[data-testid="qsp-price"]'
    ).text_content()
    data["Regular Market Price Change"] = await page.locator(
        '[data-testid="qsp-price-change"]'
    ).text_content()
    data["Regular Market Price Change Percent"] = await page.locator(
        '[data-testid="qsp-price-change-percent"]'
    ).text_content()
    data["Previous Close"] = await page.locator(
        '[data-field="regularMarketPreviousClose"]'
    ).text_content()
    data["Open Price"] = await page.locator(
        '[data-field="regularMarketOpen"]'
    ).text_content()
    data["Bid"] = await page.locator("span:has-text('Bid') + span.value").text_content()
    data["Ask"] = await page.locator("span:has-text('Ask') + span.value").text_content()
    data["Day's Range"] = await page.locator(
        '[data-field="regularMarketDayRange"]'
    ).text_content()
    data["52 Week Range"] = await page.locator(
        '[data-field="fiftyTwoWeekRange"]'
    ).text_content()
    data["Volume"] = await page.locator(
        '[data-field="regularMarketVolume"]'
    ).text_content()
    data["Avg. Volume"] = await page.locator(
        '[data-field="averageVolume"]'
    ).text_content()
    data["Market Cap"] = await page.locator('[data-field="marketCap"]').text_content()
    data["Beta"] = await page.locator(
        "span:has-text('Beta (5Y Monthly)') + span.value"
    ).text_content()
    data["PE Ratio"] = await page.locator(
        "span:has-text('PE Ratio (TTM)') + span.value"
    ).text_content()
    data["EPS"] = await page.locator(
        "span:has-text('EPS (TTM)') + span.value"
    ).text_content()
    data["Earnings Date"] = await page.locator(
        "span:has-text('Earnings Date') + span.value"
    ).text_content()
    data["Dividend & Yield"] = await page.locator(
        "span:has-text('Forward Dividend & Yield') + span.value"
    ).text_content()
    data["Ex-Dividend Date"] = await page.locator(
        "span:has-text('Ex-Dividend Date') + span.value"
    ).text_content()
    data["1y Target Est"] = await page.locator(
        '[data-field="targetMeanPrice"]'
    ).text_content()

    return data


async def main() -> None:
    """
    Main function to run the Apify Actor and extract stock data using Playwright.
    Reads input configuration from the Actor, enqueues URLs for scraping,
    launches Playwright to process requests, and extracts stock data.
    """
    async with Actor:
        # Retrieve input parameters
        actor_input = await Actor.get_input() or {}
        start_urls = actor_input.get("start_urls", [])
        tickers = actor_input.get("tickers", [])

        if not start_urls:
            Actor.log.info("No start URLs specified in actor input. Exiting...")
            await Actor.exit()

        base_url = start_urls[0].get("url", "")

        # Enqueue requests for each ticker
        default_queue = await Actor.open_request_queue()
        for ticker in tickers:
            url = f"{base_url}{ticker}"
            await default_queue.add_request({"url": url, "userData": {"depth": 0}})

        # Launch Playwright and open a new browser context
        Actor.log.info("Launching Playwright...")
        async with async_playwright() as playwright:
            browser = await playwright.chromium.launch(headless=Actor.config.headless)
            context = await browser.new_context()

            # Process requests from the queue
            while request := await default_queue.fetch_next_request():
                url = request["url"]
                Actor.log.info(f"Scraping {url} ...")
                page = None
                try:
                    # Open the URL in a new Playwright page
                    page = await context.new_page()
                    await page.goto(url, wait_until="domcontentloaded")

                    # Extract the ticker symbol from the URL
                    ticker = url.rsplit("/", 1)[-1]
                    data = await extract_stock_data(page, ticker)

                    # Push the extracted data to Apify
                    await Actor.push_data(data)
                except Exception as e:
                    Actor.log.exception(f"Error extracting data from {url}: {e}")
                finally:
                    # Close the page if it was opened, and mark the request as handled
                    if page is not None:
                        await page.close()
                    await default_queue.mark_request_as_handled(request)

            # Clean up once the queue is empty
            await context.close()
            await browser.close()
```
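The Actor builds each quote URL by appending the ticker to the base URL from the input, and later recovers the ticker with url.rsplit("/", 1)[-1]. That works for the prefilled base URL, which ends in a slash, but a base URL entered without the trailing slash, or a symbol containing special characters (Yahoo Finance index symbols such as ^GSPC start with a caret), could produce a wrong URL. Here is a hedged sketch of two hypothetical helpers, build_quote_url and ticker_from_url, that handle both cases with urllib.parse.

```python
from urllib.parse import quote, unquote


def build_quote_url(base_url: str, ticker: str) -> str:
    """Join the base URL and a ticker, adding the slash if it is missing.

    quote(..., safe="") percent-encodes characters such as '^' ('^GSPC' -> '%5EGSPC').
    """
    if not base_url.endswith("/"):
        base_url += "/"
    return base_url + quote(ticker, safe="")


def ticker_from_url(url: str) -> str:
    """Recover the ticker from a quote URL, tolerating a trailing slash."""
    return unquote(url.rstrip("/").rsplit("/", 1)[-1])
```

In main(), you would swap `f"{base_url}{ticker}"` for `build_quote_url(base_url, ticker)` and the rsplit call for `ticker_from_url(url)`.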

Before running the code, update the input_schema.json file in the .actor/ directory to include the Yahoo Finance quote page URL and also add a tickers field.

Here's the updated input_schema.json file:

```json
{
  "title": "Python Playwright Scraper",
  "type": "object",
  "schemaVersion": 1,
  "properties": {
    "start_urls": {
      "title": "Start URLs",
      "type": "array",
      "description": "URLs to start with",
      "prefill": [
        { "url": "https://finance.yahoo.com/quote/" }
      ],
      "editor": "requestListSources"
    },
    "tickers": {
      "title": "Tickers",
      "type": "array",
      "description": "List of stock ticker symbols to scrape data for",
      "items": {
        "type": "string"
      },
      "prefill": ["AAPL", "GOOGL", "AMZN"],
      "editor": "stringList"
    },
    "max_depth": {
      "title": "Maximum depth",
      "type": "integer",
      "description": "Depth to which to scrape",
      "default": 1
    }
  },
  "required": ["start_urls", "tickers"]
}
```

Also, update the input.json file so that its URL points to the Yahoo Finance quote page, or simply delete the file, to prevent conflicts during execution.
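If you keep the local input file rather than deleting it, its contents need to match the input schema: a start_urls array of objects with a url key, plus a tickers array of strings. Below is a minimal sketch that writes such a file. The write_actor_input helper is hypothetical, and the storage/key_value_stores/default/INPUT.json path is an assumption about where your template keeps its local input, so adjust it to match your project.

```python
import json
from pathlib import Path
from typing import Dict, List

# Assumed location of the local input file; change it to match your project
DEFAULT_INPUT_PATH = Path("storage/key_value_stores/default/INPUT.json")


def write_actor_input(
    tickers: List[str],
    base_url: str = "https://finance.yahoo.com/quote/",
    path: Path = DEFAULT_INPUT_PATH,
) -> Dict:
    """Write a local input file shaped like the input schema and return its contents."""
    actor_input = {
        "start_urls": [{"url": base_url}],
        "tickers": tickers,
        "max_depth": 1,
    }
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(actor_input, indent=2), encoding="utf-8")
    return actor_input
```

Calling `write_actor_input(["AAPL", "MSFT"])` from the project root would then give `apify run` two tickers to scrape.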

To run your Actor, run this command in your terminal:

```shell
apify run
```

The scraped results will be saved in storage/datasets, where each ticker gets its own JSON file.
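Because each ticker ends up in its own JSON file, it can be handy to combine them into a single list, for example to compare the Actor's output with the CSV from the standalone script. This is a minimal sketch that assumes the default dataset lives in storage/datasets/default and that each item file holds one JSON object. The load_local_dataset helper is hypothetical, and it skips files whose names start with two underscores in case your SDK version keeps a metadata file in the same folder.

```python
import json
from pathlib import Path
from typing import Dict, List


def load_local_dataset(dataset_dir: str = "storage/datasets/default") -> List[Dict]:
    """Read every item file in a local dataset directory, in file-name order."""
    items = []
    for path in sorted(Path(dataset_dir).glob("*.json")):
        if path.name.startswith("__"):
            # Skip bookkeeping files rather than scraped items
            continue
        items.append(json.loads(path.read_text(encoding="utf-8")))
    return items
```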

To deploy your Actor, first create an Apify account if you don’t already have one. Then copy your API token from Apify Console under Settings → Integrations and log in with it using the following command:

```shell
apify login -t YOUR_APIFY_TOKEN
```

Finally, push your Actor to Apify with:

```shell
apify push
```

After a few moments, your Actor should appear in the Apify Console under Actors → My actors.

Your scraper is now ready to run on the Apify platform. Click the "Start" button to begin. Once the run is complete, you can preview and download your data in various formats from the "Storage" tab.
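Besides downloading from the Storage tab, you can fetch a run's results programmatically through the Apify API's dataset items endpoint (GET https://api.apify.com/v2/datasets/{datasetId}/items), which takes a format query parameter such as json or csv. The sketch below uses only the standard library. The helper names are hypothetical, and the dataset ID and token are placeholders you would copy from Apify Console.

```python
import urllib.request
from urllib.parse import urlencode

API_BASE = "https://api.apify.com/v2"


def dataset_items_url(dataset_id: str, fmt: str = "json") -> str:
    """Build the URL for downloading a dataset's items in the given format."""
    return f"{API_BASE}/datasets/{dataset_id}/items?{urlencode({'format': fmt})}"


def download_dataset(dataset_id: str, token: str, fmt: str = "csv") -> bytes:
    """Download dataset items, authenticating with a Bearer token header."""
    request = urllib.request.Request(
        dataset_items_url(dataset_id, fmt),
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request) as response:
        return response.read()
```

For example, `download_dataset("YOUR_DATASET_ID", "YOUR_APIFY_TOKEN")` returns the items as CSV bytes that you can write straight to a file.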

Bonus: A key advantage of running your scrapers on Apify is the option to save different configurations for the same Actor and set up automatic scheduling. Let's set this up for our Playwright Actor.

On the Actor page, click on Create empty task.

Next, click on Actions and then Schedule.

Finally, select how often you want the Actor to run and click Create.

Perfect! Your Actor is now set to run automatically at the time you specified. You can view and manage all your scheduled runs in the "Schedules" tab of the Apify platform.

To begin scraping with Python on the Apify platform, you can use Python code templates. These templates are available for popular libraries such as Requests, Beautiful Soup, Scrapy, Playwright, and Selenium, and they let you quickly build scrapers for a variety of web scraping tasks.

Does Yahoo Finance have an API?

Yahoo Finance no longer offers an official public API: Yahoo discontinued it in 2017. Data such as stock quotes, historical market data, and news is still reachable through unofficial endpoints used by the website itself and through community libraries such as yfinance, but these are undocumented and can change or stop working without notice.

Yahoo Finance is open for business

You've built a practical system to extract financial data from Yahoo Finance using Playwright. This code handles multiple ticker symbols and saves the results to a CSV file. You've learned how to navigate around blocking mechanisms, keeping your scrapers up and running.

The Apify platform and its Actor framework let you scale your web scraping efforts, and you can schedule your scraper to run whenever it's most useful for you. We hope these tools help you put market data to work and deepen your understanding of the markets.
