Your very own Python web crawler, that's what we're going to build today. If you have no experience, that's fine. Everything will be explained and shown step-by-step. So don't worry, and let's get into it!

What is a web crawler in Python?

A Python web crawler is an automated program coded in the Python language that systematically browses websites to find and index their content. Search engines such as Google, Yahoo, and Bing rely heavily on web crawling to understand the web and provide relevant search results to users.

A web crawler starts with a list of URLs to visit, called seeds. These seeds serve as the entry point for any web crawler. For each URL, the crawler makes HTTP requests and downloads the HTML content from the page. This raw data is then parsed to extract valuable data, such as links to other pages. The new links will be added to a queue for future exploration, and the other data will be stored to be processed in a separate pipeline.

The crawler will then make GET requests to these new links to repeat the same process as it did with the seed URL. This recursive process enables the script to visit every URL on the domain and gather all the available information.
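The loop described above can be sketched without touching the network. This is a minimal illustration, assuming a made-up in-memory site: FAKE_SITE and crawl are hypothetical names, not part of any library. Each key is a page URL and each value is the list of links found on that page.

```python
from collections import deque

# Hypothetical stand-in for the web: page URL -> links found on that page.
FAKE_SITE = {
    "https://example.com/": ["https://example.com/a", "https://example.com/b"],
    "https://example.com/a": ["https://example.com/b", "https://example.com/c"],
    "https://example.com/b": ["https://example.com/"],
    "https://example.com/c": [],
}


def crawl(seeds):
    """Visit every page reachable from the seeds, breadth-first."""
    queue = deque(seeds)  # URLs waiting to be visited
    seen = set(seeds)     # URLs already queued or visited
    order = []            # visiting order, kept only for illustration
    while queue:
        url = queue.popleft()
        order.append(url)
        # "Downloading and parsing" is just a dictionary lookup here.
        for link in FAKE_SITE.get(url, []):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return order


print(crawl(["https://example.com/"]))
```

Because every link is checked against the seen set before it is queued, each page is visited once, even though pages link back to each other.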

Python is a highly popular programming language for web crawling tasks due to its simplicity and rich ecosystem. It offers a vast range of libraries and frameworks specifically designed for web crawling and data extraction, including popular ones like Requests, BeautifulSoup, Scrapy, and Selenium.

Building a Python web crawler

Building a basic web crawler in Python requires two libraries: one to download the HTML content from a URL and another to parse it and extract links.

In my experience, the combination of Requests and BeautifulSoup is an excellent choice for this task. Requests, an HTTP library, simplifies sending HTTP requests and fetching web pages. BeautifulSoup then parses the content retrieved by Requests.

Python also has Scrapy, a complete web crawling framework for building scalable and efficient crawlers. We'll look at it in a later section.

Let's build a Python web crawler using Requests and BeautifulSoup. For this tutorial, we'll use the Books to Scrape as a target website to perform crawling.

What you need to begin

Before you start, make sure you meet all the following requirements:

- Download the latest version of Python from the official website. For this tutorial, we’re using Python 3.12.2.

- Choose a code editor like Visual Studio Code or PyCharm, or you can use an interactive environment such as Jupyter Notebook.

Let’s start by creating a virtual environment using the venv module.

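python -m venv myenv

myenv\Scripts\activate

The activation command above is for Windows. On macOS or Linux, run source myenv/bin/activate instead.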

Install the following libraries:

pip install requests beautifulsoup4 lxml

Crawling script

Create a new Python file named main.py and import the project dependencies:

from urllib.parse import urljoin

import requests

from bs4 import BeautifulSoup

We're using urljoin from the urllib.parse library to join the base URL and crawled URL to form an absolute URL for further crawling. As it's part of the Python standard library, there's no need for installation. We’ll look at the base URL and crawled URL later in this section.

Use the requests library to download the first page.

class MyWebCrawler:
    def __init__(self):
        # Initialize necessary variables
        pass

    def navigate(self):
        html_content = requests.get("https://books.toscrape.com/").text

The variable html_content contains the HTML data that was retrieved from the server. You can parse the data using BeautifulSoup, with the lxml argument specifying the parser library to use. The parsed document is stored in the soup variable.

class MyWebCrawler:
    # ...

    def navigate(self, url):
        # ...
        soup = BeautifulSoup(html_content, "lxml")

Now, find all anchor tags in the parsed data with an href attribute and iterate through them to extract other relevant URLs within the page.

class MyWebCrawler:
    # ...

    def navigate(self, url):
        # ...
        for anchor_tag in soup.find_all("a", href=True):
            link = urljoin(url, anchor_tag["href"])

The urljoin function combines the base URL with the relative URL found in the href attribute, resulting in the full, absolute URL for the linked webpage.

For example, (https://books.toscrape.com/, catalogue/category/books_1/index.html) would be combined to form an absolute URL: https://books.toscrape.com/catalogue/category/books_1/index.html.
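Here is a small sketch of how urljoin behaves in the cases a crawler typically meets. The paths are illustrative examples; only the function itself comes from the standard library.

```python
from urllib.parse import urljoin

base_url = "https://books.toscrape.com/"

# A relative path is appended to the base URL.
joined = urljoin(base_url, "catalogue/category/books_1/index.html")
print(joined)

# "../" segments are resolved against the directory of the current page.
page_url = "https://books.toscrape.com/catalogue/category/books_1/index.html"
resolved = urljoin(page_url, "../books/travel_2/index.html")
print(resolved)

# An href that is already absolute is returned unchanged.
absolute = urljoin(base_url, "https://example.com/other")
print(absolute)
```

This is why the crawler passes the URL of the page it is currently on as the first argument: relative links only make sense relative to the page they were found on.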

The extracted link should not be present in the visited_urls list, which stores all the links previously visited by the crawler. It should also be absent from the urls_to_visit queue, which contains URLs scheduled for future crawling. Once valid links are extracted from a page, they are added to the urls_to_visit queue.

class MyWebCrawler:
    # ...

    def navigate(self, url):
        # ...
            if link not in self.visited_urls and link not in self.urls_to_visit:
                self.urls_to_visit.append(link)

The while loop continues processing URLs in the urls_to_visit queue. Here's what happens for each URL:

- Dequeue the URL from the urls_to_visit list.

- Call navigate() to fetch the page and enqueue new links to urls_to_visit.

- Mark the dequeued URL as visited by adding it to visited_urls.

class MyWebCrawler:
    # ...

    def start(self):
        while self.urls_to_visit:
            current_url = self.urls_to_visit.pop(0)
            self.navigate(current_url)
            self.visited_urls.append(current_url)

Here’s the complete code:

from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup


class MyWebCrawler:
    def __init__(self, initial_urls=None):
        self.visited_urls = []
        # Copy the seeds so a shared default list is never mutated
        self.urls_to_visit = list(initial_urls or [])

    def navigate(self, url):
        try:
            html_content = requests.get(url).text
            soup = BeautifulSoup(html_content, "lxml")
            for anchor_tag in soup.find_all("a", href=True):
                # Resolve relative hrefs against the current page URL
                link = urljoin(url, anchor_tag["href"])
                if link not in self.visited_urls and link not in self.urls_to_visit:
                    self.urls_to_visit.append(link)
        except Exception as e:
            print(f"Failed to navigate: {url}. Error: {e}")

    def start(self):
        while self.urls_to_visit:
            current_url = self.urls_to_visit.pop(0)
            print(f"Crawling: {current_url}")
            self.navigate(current_url)
            self.visited_urls.append(current_url)


if __name__ == "__main__":
    MyWebCrawler(initial_urls=["https://books.toscrape.com/"]).start()

The result is:

Our crawler visits all the URLs on the page step-by-step.

As shown on the page below, it will first visit the "Home" page, then click on the "Books" section, and then visit all the categories like Travel, Mystery, and so on.

Finally, it will visit every book.

Extract data

You can extend this logic to perform web scraping. This will allow you to extract product data while crawling and save each book as a dictionary in a list. By matching tags and their CSS classes, you can extract the book title, price, and availability.

self.book_details = []

book_containers = soup.find_all("article", class_="product_pod")
for container in book_containers:
    title = container.find("h3").find("a")["title"]
    price = container.find("p", class_="price_color").text
    availability = container.find("p", class_="instock availability").text.strip()
    self.book_details.append(
        {"title": title, "price": price, "availability": availability}
    )

The data will be extracted and stored in a list of dictionaries. You can then export this scraped data to a CSV file. To do this, import the csv module and use the following logic for export.

def save_to_csv(self, filename="books.csv"):
    with open(filename, "w", newline="") as csvfile:
        fieldnames = ["title", "price", "availability"]
        writer = csv.DictWriter(csvfile, fieldnames=fieldnames)
        writer.writeheader()
        for book in self.book_details:
            writer.writerow(book)

Here's the complete Python script for the web crawler.

To demonstrate the functionality, I have set a limit that extracts only 25 books. However, you can remove the limit if you want to extract all the books on the website.

Please note that the process may take some time.

import csv
from urllib.parse import urljoin

import requests
from bs4 import BeautifulSoup


class MyWebCrawler:
    def __init__(self, initial_urls=None):
        self.visited_links = []
        self.book_details = []
        # Copy the seeds so a shared default list is never mutated
        self.links_to_visit = list(initial_urls or [])

    def navigate(self, url):
        try:
            html_content = requests.get(url).content
            soup = BeautifulSoup(html_content, "lxml")

            # Scrape every book listed on the current page
            book_containers = soup.find_all("article", class_="product_pod")
            for container in book_containers:
                # Stop once the demo limit of 25 books is reached
                if len(self.book_details) >= 25:
                    return
                title = container.find("h3").find("a")["title"]
                price = container.find("p", class_="price_color").text
                availability = container.find(
                    "p", class_="instock availability"
                ).text.strip()
                self.book_details.append(
                    {"title": title, "price": price, "availability": availability}
                )

            # Queue links that have not been seen yet
            for anchor_tag in soup.find_all("a", href=True):
                link = urljoin(url, anchor_tag["href"])
                if link not in self.visited_links and link not in self.links_to_visit:
                    self.links_to_visit.append(link)
        except Exception as e:
            print(f"Failed to navigate: {url}. Error: {e}")

    def start(self):
        while self.links_to_visit:
            if len(self.book_details) >= 25:
                break
            current_link = self.links_to_visit.pop(0)
            self.navigate(current_link)
            self.visited_links.append(current_link)

    def save_to_csv(self, filename="books.csv"):
        with open(filename, "w", newline="") as csvfile:
            fieldnames = ["title", "price", "availability"]
            writer = csv.DictWriter(csvfile, fieldnames=fieldnames)
            writer.writeheader()
            for book in self.book_details:
                writer.writerow(book)


if __name__ == "__main__":
    crawler = MyWebCrawler(initial_urls=["https://books.toscrape.com/"])
    crawler.start()
    crawler.save_to_csv()

Run the script. Once finished, you'll find a new file named books.csv in your project folder.

Congratulations, you've just learned how to build a basic web crawler!

Yet, there are certain limitations and potential disadvantages to this code:

- The crawler revisits the same pages multiple times, causing unnecessary network requests and processing overhead.

- It lacks proper error handling and doesn't address specific HTTP errors, connection timeouts, or other potential issues. Additionally, there's no retry mechanism in place.

- The URL queue is simply a list, making it inefficient for handling a large number of URLs.

- By ignoring the robots.txt file, the crawler can overwhelm the target server and get its IP blocked. The robots.txt file often specifies a crawl-delay instruction that should be respected to avoid overloading the server.
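To illustrate the last point, here is a minimal sketch of checking robots.txt rules with the standard library's urllib.robotparser. The robots.txt content and the my-crawler user agent are made-up examples; a real crawler would load the file from the target site (for instance with set_url() and read()) instead of parsing a hard-coded string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used here instead of a real download.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 2
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(SAMPLE_ROBOTS_TXT.splitlines())

user_agent = "my-crawler"  # assumed name for our crawler

# Ask whether a given URL may be fetched by this user agent.
allowed = parser.can_fetch(user_agent, "https://example.com/catalogue/index.html")
blocked = parser.can_fetch(user_agent, "https://example.com/private/data.html")

# Seconds to wait between requests, or None if the file does not say.
delay = parser.crawl_delay(user_agent)

print(allowed, blocked, delay)
```

A crawler could call can_fetch() before each request and sleep for the returned delay between requests.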

You can solve the above issues with custom code, implementing parallelism, retry mechanisms, and error handling separately.
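As a rough idea of what that custom code involves, here is a minimal sketch of two of the fixes: a queue with constant-time de-duplication and a retry wrapper with exponential backoff. Frontier, fetch_with_retries, and the fetch argument are hypothetical names introduced for illustration. In a real crawler, fetch would wrap a call such as requests.get(url, timeout=10).

```python
import time
from collections import deque


class Frontier:
    """URL queue that never hands out the same URL twice."""

    def __init__(self, seeds=()):
        self._queue = deque()
        self._seen = set()
        for url in seeds:
            self.add(url)

    def add(self, url):
        # Set membership is O(1), unlike scanning a list.
        if url not in self._seen:
            self._seen.add(url)
            self._queue.append(url)

    def pop(self):
        # popleft() on a deque is O(1); list.pop(0) shifts every element.
        return self._queue.popleft()

    def __len__(self):
        return len(self._queue)


def fetch_with_retries(fetch, url, attempts=3, backoff=0.5):
    """Call fetch(url), retrying with exponential backoff on any exception."""
    for attempt in range(attempts):
        try:
            return fetch(url)
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: let the caller decide
            time.sleep(backoff * 2 ** attempt)
```

Even this small sketch leaves out parallelism, per-status-code handling, and politeness delays, which shows how quickly a hand-rolled crawler grows.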

However, this approach can become overly complex.

Thankfully, Python offers Scrapy, an all-in-one web crawling framework.

In the next section, we'll create a web crawler using Scrapy to address these limitations. We'll explore how Scrapy provides a set of functionalities and simplifies building custom crawlers.

Python web crawling with Scrapy

Beyond basic libraries like Requests and Beautiful Soup, Python offers powerful tools for tackling complex web crawling challenges.

Libraries like Playwright and Selenium are headless browser automation tools that allow you to control a headless browser to interact with web pages similarly to how a real user would do. These tools are ideal for scraping complex, JavaScript-heavy websites where you need to mimic user actions like clicking buttons or filling out forms.

However, when it comes to efficiently crawling large websites and extracting structured data, Scrapy is the go-to framework in Python.

Scrapy is the most popular web scraping and crawling Python framework with nearly 51k stars on GitHub. It utilizes asynchronous scheduling and handling of requests, allowing you to define custom spiders that navigate websites, extract data, and store it in various formats.

Scrapy provides a robust suite of tools: the Scheduler manages the URL queue, the Downloader retrieves web content, Spiders parse the downloaded content and extract data, and Item Pipelines clean and store the extracted data. This makes it well-suited for a variety of web crawling tasks.

1. Installing Scrapy

To start web crawling using Python, install the Scrapy framework on your system. Open your terminal and run the following command:

pip install scrapy

2. Creating a Scrapy project

Once Scrapy is installed, use the following command to create a new project structure:

scrapy startproject satyamcrawler

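Choose any name for your project, for example, “satyamcrawler”. Once you execute the command successfully, a directory structure containing various Python files will be created. This is what the typical directory structure looks like:

satyamcrawler/
    scrapy.cfg
    satyamcrawler/
        __init__.py
        items.py
        middlewares.py
        pipelines.py
        settings.py
        spiders/
            __init__.py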

The core directory for Scrapy is the spiders directory. This is where Scrapy looks for Python files containing the code that defines how to crawl websites.

To start your crawling process, create a new Python file (bookcrawler.py in our case) inside the spiders directory and write the following code:

from scrapy.spiders import CrawlSpider, Rule
from scrapy.linkextractors import LinkExtractor


class BookCrawler(CrawlSpider):
    name = "satyamspider"
    start_urls = [
        "https://books.toscrape.com/",
    ]
    allowed_domains = ["books.toscrape.com"]

    rules = (Rule(LinkExtractor(allow="/catalogue/category/books/")),)

The code defines a BookCrawler class that subclasses Scrapy's built-in CrawlSpider.

CrawlSpider is designed to efficiently navigate and extract data from websites that have a hierarchical structure, where pages are interconnected through links.

The Rule class defines how the spider processes the links it encounters during crawling. Each rule wraps a LinkExtractor instance that specifies which links to extract from a page.

In our code, only one rule is defined for now.

The allow parameter of the LinkExtractor is set to /catalogue/category/books/. It is treated as a regular expression, so the spider only follows links whose URL matches this pattern.

The start_urls list contains the URLs from where the spider will begin crawling. In this case, we have only one URL, which is the homepage URL of the website.

The allowed_domains specifies the domains that the spider is allowed to crawl. Here, it's set to "books.toscrape.com". This means the spider will only follow links that belong to this domain.

Let's run the crawler and see what happens. Open your terminal and navigate to the satyamcrawler/ directory before running the following command:

scrapy crawl satyamspider

As you fire the above command in the terminal, Scrapy initializes the BookCrawler spider class, creates requests for each URL in start_urls, and sends them to the scheduler.

Before a request is downloaded, Scrapy's offsite middleware checks whether its domain is allowed by the spider's allowed_domains attribute (if specified). If allowed, the request goes to the downloader, which fetches the response from the server.

Scrapy then uses the rules to extract matching links from the response. It follows these links recursively, creating new requests to continue crawling the website.

This process continues until there are no more links to follow or Scrapy's limits are reached (depth limits, maximum pages, etc.).

At this point, you’ll see every URL your spider has crawled in your output window.

As shown in the above result, only URLs are extracted according to our defined rules.

However, if you need to extract specific data, like book category, title, and price, you need to define a parse_item function within a crawler class (e.g., BookCrawler).

This function receives the response from each request made by the crawler and returns the necessary data extracted from the response.

You can use various methods, such as CSS selectors and XPaths, to extract data.

In the next section, we’ll focus on using CSS selectors to extract data from web page crawl responses.

3. Extracting data using Scrapy

Let's see how to extract data using Scrapy.

Our rules define that URLs must contain "catalogue/category/books".

This string is present in the URLs of the categories listed on the left side of the books.toscrape.com homepage.

As a result, Scrapy will visit each category page and extract the relevant data.

As we discussed, you need CSS selectors to extract data.

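To extract the title, you can use h3 a::text. On this site the link text is shortened for long titles, so use h3 a::attr(title) if you need the full title.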

For the price, use p.price_color::text.

For book availability, use p.availability::text.

Here, the ::text pseudo-element is used to select the text content.

Here's the complete code. Note that the parse_item function is only called once you pass it as the callback argument of your Rule.

from scrapy.spiders import CrawlSpider, Rule
from scrapy.linkextractors import LinkExtractor


class BookCrawler(CrawlSpider):
    name = "satyamspider"
    start_urls = [
        "https://books.toscrape.com/",
    ]
    allowed_domains = ["books.toscrape.com"]

    rules = (
        Rule(LinkExtractor(allow="/catalogue/category/books/"), callback="parse_item"),
    )

    def parse_item(self, response):
        category = response.css("h1::text").get()
        books = []
        for book in response.css("article.product_pod"):
            title = book.css("h3 a::text").get()
            price = book.css("p.price_color::text").get()
            availability = book.css("p.availability::text")[1].get().strip()
            books.append({"title": title, "price": price, "availability": availability})
        yield {"category": category, "books": books}

In short, here's what the code does:

- It extracts the category of books from the page using the CSS selector h1::text.

- Then, it iterates over each book on the page using article.product_pod.

- For each book, it extracts the title, price, and availability using CSS selectors.

- It appends this information to a list of books.

- Finally, it yields a dictionary containing the category and the list of books.

You’ve created a spider that crawls a website and retrieves data. Run the spider and see the magic.

scrapy crawl satyamspider

The output in the console window shows that the data is extracted successfully and returned in the form of a dictionary.

Now, one last thing to discuss:

What if you want to exclude some categories?

For this, you can use the deny parameter of the LinkExtractor and pass a regular expression (or a list of them) that matches the category URLs you want to skip (e.g., Travel, Mystery, Historical Fiction, and Sequential Art). Note that rules must be an iterable, so the Rule is wrapped in a tuple.

rules = (
    Rule(
        LinkExtractor(
            allow="/catalogue/category/books/",
            deny=r"(travel_2|mystery_3|historical-fiction_4|sequential-art_5)",
        ),
        callback="parse_item",
    ),
)

Now, when you run your code, all categories except for these 4 will be crawled.
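To get a feel for how the allow and deny patterns interact, the filtering can be approximated with the standard re module. This is only an illustrative sketch, not Scrapy's implementation: should_follow is a hypothetical helper, and it assumes a link is followed when its URL matches allow and does not match deny. The example URLs are assumptions about the site's URL scheme.

```python
import re

ALLOW = re.compile(r"/catalogue/category/books/")
DENY = re.compile(r"(travel_2|mystery_3|historical-fiction_4|sequential-art_5)")


def should_follow(url):
    """Approximate the rule: the URL must match ALLOW and must not match DENY."""
    return bool(ALLOW.search(url)) and not DENY.search(url)


base = "https://books.toscrape.com/catalogue/category/books/"

# Denied category: matches ALLOW but also matches DENY.
print(should_follow(base + "travel_2/index.html"))

# Any other category: matches ALLOW only.
print(should_follow(base + "classics_6/index.html"))

# Not a category page at all: does not match ALLOW.
print(should_follow("https://books.toscrape.com/catalogue/page-2.html"))
```

Since both parameters are regular expressions, a short pattern such as travel_2 is enough to exclude every URL that contains it.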

4. Saving data to JSON

To save the crawled data as a JSON file, run the following command in your terminal:

scrapy crawl satyamspider -o data.json

When you run the command, the crawled pages and their corresponding data are displayed in the console.

By using the -o flag, Scrapy will store all of the retrieved data in a JSON file called data.json.

Once the crawl is complete, a new file named data.json will be created in the project directory.

This file will contain all of the book-related data retrieved by the crawler.

Here’s the result:

Best practices for web crawling

If you're using Scrapy to crawl large websites like Amazon or eBay with millions of pages, you need to crawl responsibly by adjusting some settings.

Scrapy settings allow you to customize the behavior of all its components, including the core, extensions, pipelines, and spiders.

Some of the settings are:

- USER_AGENT: Allows you to specify the user agent. The default user agent is "Scrapy/VERSION (+https://scrapy.org)".

- DOWNLOAD_DELAY: Use it to throttle your crawling speed and avoid overwhelming servers. This setting specifies the minimum number of seconds to wait between two consecutive requests to the same domain.

- DOWNLOAD_TIMEOUT: The amount of time (in seconds) that the downloader will wait before timing out. The default is 180.

- CONCURRENT_REQUESTS_PER_DOMAIN: The maximum number of concurrent (i.e. simultaneous) requests that will be performed to any single domain. The default is 8.

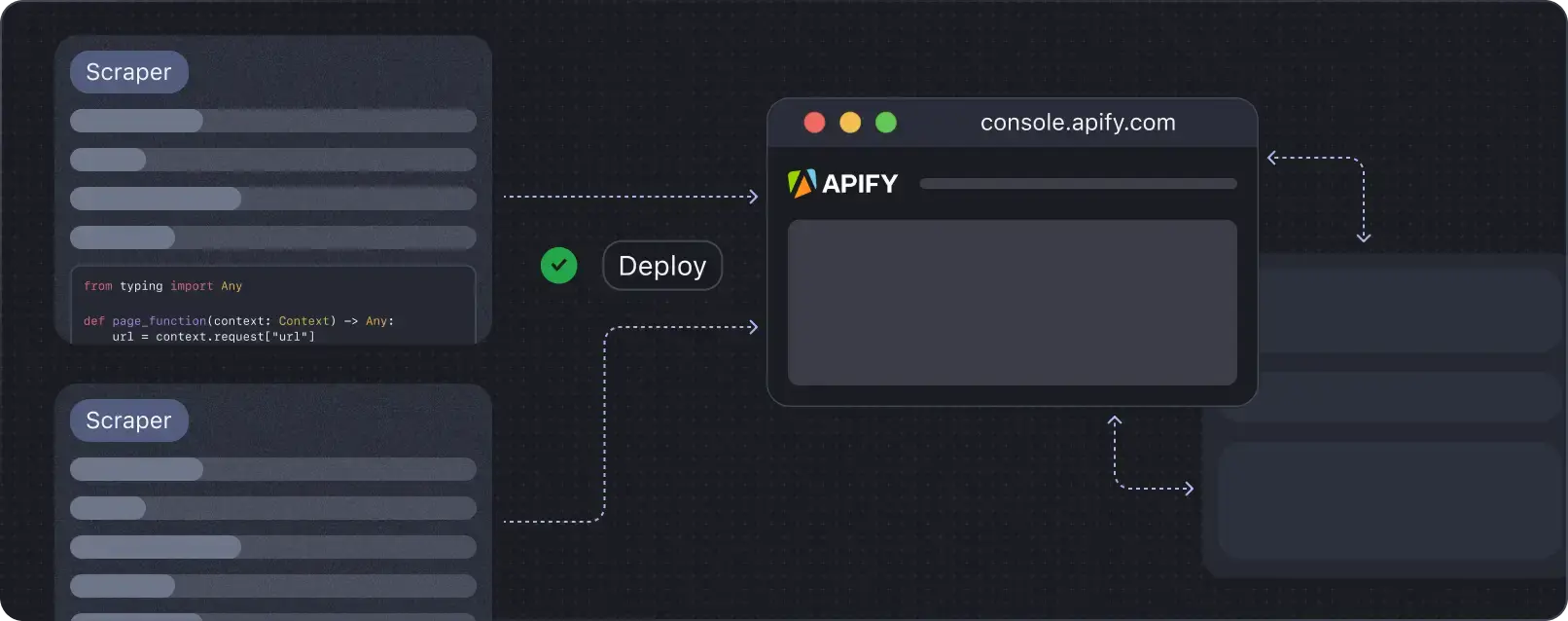

Run your Scrapy spiders on Apify

Run, monitor, schedule, and scale your spiders in the cloud.

Scrapy crawls are optimized for a single domain by default.

If you intend to crawl across multiple domains, you'll need to adjust these settings for broad crawls.

You can limit the total number of pages crawled using the CLOSESPIDER_PAGECOUNT setting of the close spider extension. If the spider exceeds this limit, it will be stopped with the reason closespider_pagecount.

Similarly, when crawling websites with millions of pages, tune these settings so the crawl stays both fast and polite.
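As a starting point, the settings mentioned above could be collected in the project's settings.py. The values below are illustrative assumptions rather than recommendations, and the user agent string is a made-up placeholder. ROBOTSTXT_OBEY is an additional Scrapy setting included here because it controls whether robots.txt rules are respected.

```python
# settings.py (fragment), example values only

# Identify the crawler; the name and URL here are placeholders.
USER_AGENT = "my-crawler (+https://example.com/contact)"

# Respect the rules published in each site's robots.txt.
ROBOTSTXT_OBEY = True

# Wait at least this many seconds between requests to the same domain.
DOWNLOAD_DELAY = 1.0

# Give up on a response after this many seconds (Scrapy's default is 180).
DOWNLOAD_TIMEOUT = 30

# Limit simultaneous requests per domain (Scrapy's default is 8).
CONCURRENT_REQUESTS_PER_DOMAIN = 4

# Stop the spider once this many responses have been crawled.
CLOSESPIDER_PAGECOUNT = 1000
```

Lower delays and higher concurrency speed up the crawl but put more load on the target server, so it is usually safer to start conservatively and loosen the limits gradually.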

Conclusion

I've guided you through building a web crawler and then scraping data using Requests and Scrapy. You can use what you've learned here to start crawling basic websites like the one in this tutorial. Once you've built the crawler and it's working as expected, consider deploying your Scrapy code to the cloud to optimize your crawler's performance.
