LlamaIndex and LangChain. You must have heard of them by now!

These tools have emerged as prominent players in the AI arena in a very short time, but if you're a developer who's a little confused about when to use one or the other, you're not alone.

That's why we're here to explain what LlamaIndex and LangChain each do, walk you through their uses, and point out the scenarios where one might be preferred over the other.

Finally, we'll explore how Apify's integrations with these tools can help you with your data handling and AI-driven applications.

What is LlamaIndex?

LlamaIndex serves as a conduit between your custom data and large language models like GPT-4. It streamlines the process of integrating diverse data sources — APIs, databases, PDFs — into conversation with AI systems. This integration not only makes your data more accessible but also transforms it into a powerful tool for developing custom applications and workflows.

Key functionalities of LlamaIndex include:

- Data ingestion: Bringing data from its original source into the system.

- Data structuring: Organizing data in an AI-understandable format.

- Data retrieval: Fetching the right pieces of data as needed.

- Integration: Simplifying the melding of your data with various application frameworks.
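To make these stages concrete, here is a minimal conceptual sketch in plain Python. It does not use LlamaIndex itself: the `Node` class and the `ingest`, `build_index`, and `retrieve` functions are hypothetical names chosen for illustration, and simple keyword overlap stands in for the embedding-based retrieval a real index would typically use.

```python
from collections import defaultdict
from dataclasses import dataclass
import re


@dataclass
class Node:
    """A chunk of source text plus where it came from (hypothetical structure)."""
    text: str
    source: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def ingest(raw_sources: dict[str, str], chunk_size: int = 12) -> list[Node]:
    """Data ingestion: split each source into fixed-size word chunks."""
    nodes = []
    for source, text in raw_sources.items():
        words = text.split()
        for start in range(0, len(words), chunk_size):
            nodes.append(Node(" ".join(words[start:start + chunk_size]), source))
    return nodes


def build_index(nodes: list[Node]) -> dict[str, set[int]]:
    """Data structuring: map each token to the positions of the nodes containing it."""
    index = defaultdict(set)
    for position, node in enumerate(nodes):
        for token in tokenize(node.text):
            index[token].add(position)
    return index


def retrieve(query: str, nodes: list[Node], index: dict[str, set[int]], top_k: int = 1) -> list[Node]:
    """Data retrieval: rank nodes by how many query tokens they share."""
    scores = defaultdict(int)
    for token in tokenize(query):
        for position in index.get(token, ()):
            scores[position] += 1
    ranked = sorted(scores, key=lambda p: (-scores[p], p))
    return [nodes[p] for p in ranked[:top_k]]


# Two made-up sources standing in for a PDF and an API response.
sources = {
    "faq.pdf": "Refunds are processed within five business days.",
    "api-docs": "The orders endpoint returns the shipping status of each order.",
}
nodes = ingest(sources)
index = build_index(nodes)
best = retrieve("When are refunds processed?", nodes, index)[0]

# Integration: the retrieved chunk would be placed into the prompt sent to an LLM.
print(best.source, "->", best.text)
```

The point of the sketch is the separation of stages: each one can be swapped out (a different chunker, a vector index instead of a keyword index) without touching the others.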

What is LangChain?

LangChain allows for the modular construction of applications using language models. These modules can either stand alone or be composed together for more complex use cases. Its essential modules include Model I/O, Retrieval, Agents, Chains, and Memory, each targeting specific development needs and making LangChain a comprehensive toolkit for advanced language model applications.

The core functionalities of LangChain include:

- Model I/O: Efficiently managing interactions with various language models.

- Retrieval: Accessing application-specific data critical for dynamic data utilization.

- Agents: Enhancing decision-making capabilities in applications.

- Chains and Memory: Serving as building blocks for application development and maintaining application state across executions.
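The idea of composable modules can be illustrated with a small sketch in plain Python. This is not LangChain's API: `chain`, `ConversationMemory`, and `fake_model` are hypothetical stand-ins that only mimic the roles of Chains, Memory, and a language model.

```python
from typing import Callable

Step = Callable[[str], str]


def chain(*steps: Step) -> Step:
    """Compose steps left to right so the output of one feeds the next."""
    def run(value: str) -> str:
        for step in steps:
            value = step(value)
        return value
    return run


class ConversationMemory:
    """Keeps earlier question/answer pairs so later prompts can include them."""

    def __init__(self) -> None:
        self.turns: list[tuple[str, str]] = []

    def as_context(self) -> str:
        return "\n".join(f"User: {q}\nAI: {a}" for q, a in self.turns)

    def save(self, question: str, answer: str) -> None:
        self.turns.append((question, answer))


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call: reports how many prompt lines it received."""
    return f"  (answer based on {len(prompt.splitlines())} prompt lines)  "


memory = ConversationMemory()


def build_prompt(question: str) -> str:
    """Model input: prepend the remembered conversation to the new question."""
    return f"{memory.as_context()}\nUser: {question}".strip()


# Prompt building -> model call -> output clean-up, composed into one callable.
qa_chain = chain(build_prompt, fake_model, str.strip)

for question in ["What is Apify?", "How does it relate to LangChain?"]:
    answer = qa_chain(question)
    memory.save(question, answer)
    print(answer)
```

Because the second prompt includes the first exchange, the stand-in model sees more lines on the second call, which is the effect memory is meant to have.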

When to choose LlamaIndex and when to choose LangChain

What is LlamaIndex used for?

LlamaIndex is designed for building search and retrieval applications. It provides a simple interface for querying LLMs and retrieving relevant documents. LlamaIndex is also better suited for processing large amounts of data.

What is LangChain used for?

LangChain is a more general-purpose framework used for a wide variety of applications. It provides tools for interacting with LLMs, as well as for loading, processing, and indexing data. LangChain essentially acts as an interface between different language models, vector databases, and a range of different libraries.

These features make both LlamaIndex and LangChain ideal for building retrieval-augmented generation (RAG) applications and other LLM solutions. So, let's continue to explore the differences.

LlamaIndex for custom data-driven AI applications

LlamaIndex is fantastic when you need to bridge your custom data with large language models. It's particularly effective for:

- Data diversity: When you're dealing with a variety of data sources like APIs, databases, or PDFs and need a unified approach to make this data conversational.

- Efficient data management: When you require smooth data ingestion, structuring, retrieval, and integration into diverse application frameworks.

- Custom application development: When your aim is to create sophisticated QA systems, chatbots, or intelligent agents powered by tailored data (the same can be said for LangChain).

LangChain for modular AI application development

LangChain, with its modular approach, is the go-to choice when you need:

- Flexible integration: When you're working with various language models and need an efficient, consistent way to manage those interactions through Model I/O.

- Dynamic data utilization: If your application demands access to specific, real-time data, the Retrieval module of LangChain is invaluable.

- Complex use cases: When you're building applications that require the orchestration of different modules like Agents and Memory for advanced decision-making and maintaining application state.

- Custom application development: When your aim is to create sophisticated QA systems, chatbots, or intelligent agents powered by tailored data.

LlamaIndex excels in data indexing and language model enhancement. Choose LlamaIndex to build a search and retrieval application that needs to be efficient and simple.

LangChain stands out for its versatility and adaptability. Choose LangChain to build a general-purpose application that needs to be flexible and extensible.

Integrating Apify with LlamaIndex and LangChain

What is Apify?

Apify is a full-stack web scraping and browser automation platform. As a result, it serves as a bridge between the web's vast data resources and sophisticated AI tools like LlamaIndex and LangChain.

Reasons to use Apify

- Access to diverse and dynamic data

The web is an ever-evolving source of information. Apify's web scraping and automation capabilities allow you to access a wide range of data to ensure your AI models are fed with the most current and relevant information.

- Customizable data extraction

Different AI applications require different types of data. Apify provides the flexibility to target specific websites, formats, and content. This allows for tailored data extraction that aligns with your unique requirements.

- Enhancing AI models

LlamaIndex and LangChain, though powerful, are limited by the data they can access. Integrating web data via Apify expands their capabilities, as it enables them to process and analyze information that isn't readily available in standard datasets.

- Scalability and efficiency

Manual data collection is time-consuming and prone to errors. Apify automates this process, offering scalable solutions that save time and resources while ensuring high-quality data for your AI models.

By integrating with LlamaIndex and LangChain, Apify not only enhances the data input for these AI tools but also expands their potential applications.

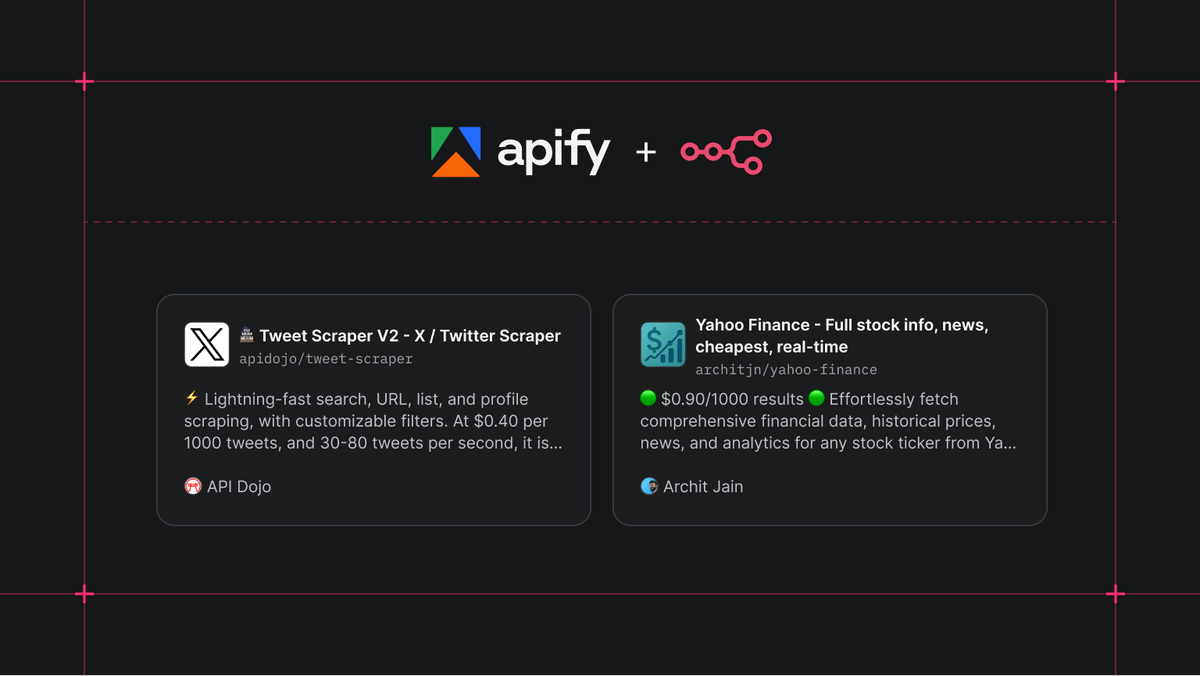

Apify's integration with LlamaIndex

Apify's integration with LlamaIndex empowers you to feed vector databases and LLMs with web-crawled data via serverless cloud programs called Actors.

There are two components that you can integrate with LlamaIndex:

- An Apify dataset

- An Apify Actor

To get started:

- Install the necessary dependencies (`pip install apify-client llama-index`).

- After successfully installing all dependencies, you can start writing Python code. To use the Apify Actor loader, import `download_loader` and `Document`.

- Import the loader using the `download_loader` method.
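The steps above could look roughly like the following sketch. It assumes the legacy llama-index package layout (before version 0.10), where `download_loader` and `Document` are importable from `llama_index`; newer releases organize these differently. The Actor ID `apify/website-content-crawler`, the `text` and `url` item keys, and the helper names `to_llamaindex_fields` and `load_documents` are assumptions for illustration, not something the steps above prescribe.

```python
def to_llamaindex_fields(item: dict) -> dict:
    """Pick the fields of one crawled page that should end up in a Document.

    Assumes dataset items with "text" and "url" keys (hypothetical item shape).
    """
    return {
        "text": item.get("text") or "",
        "extra_info": {"url": item.get("url")},
    }


def load_documents(apify_api_token: str, start_url: str):
    """Run an Actor and load its dataset items as LlamaIndex documents."""
    # Imported here so the mapping helper above works without llama-index installed.
    # Assumption: legacy (pre-0.10) llama-index, where both names live in `llama_index`.
    from llama_index import Document, download_loader

    # Fetch the Apify Actor loader class by name.
    ApifyActor = download_loader("ApifyActor")
    reader = ApifyActor(apify_api_token)

    # Runs the Actor, waits for it to finish, and maps each dataset item to a Document.
    return reader.load_data(
        actor_id="apify/website-content-crawler",  # assumed Actor for this example
        run_input={"startUrls": [{"url": start_url}]},
        dataset_mapping_function=lambda item: Document(**to_llamaindex_fields(item)),
    )


# The mapping step can be checked locally, without a token or a network call.
sample_item = {"url": "https://example.com", "text": "Hello", "html": "<p>Hello</p>"}
print(to_llamaindex_fields(sample_item))
```

Calling `load_documents("<your Apify API token>", "<start URL>")` requires a valid token and network access. Keeping the mapping logic in a separate function makes it easy to decide which fields of a crawled page reach the index.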

Apify's integration with LangChain

The integration of Apify with LangChain allows for the enrichment of vector databases and LLMs with data scraped from the web via Apify Actors. This is ideal for applications that benefit from dynamic and up-to-date web content.

- Set up the environment and install the necessary packages (`pip install apify-client langchain openai chromadb`).

- Import `os`, `Document`, `VectorstoreIndexCreator`, and `ApifyWrapper` into your source code.

- Find your Apify API token and OpenAI API key and set them as environment variables.

- Run the Actor, wait for it to finish, and fetch its results from the Apify dataset into a LangChain document loader.

- Initialize the vector index from the crawled documents and query it.
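Put together, the steps above might look like the sketch below. It assumes an older LangChain release in which `ApifyWrapper` lives in `langchain.utilities` and `VectorstoreIndexCreator` works without further arguments; newer versions have moved these pieces. The Actor ID, the `text` and `url` item keys, and the helper names `to_langchain_fields` and `crawl_and_query` are assumptions for illustration.

```python
import os


def to_langchain_fields(item: dict) -> dict:
    """Pick the fields of one crawled page that should end up in a Document.

    Assumes dataset items with "text" and "url" keys (hypothetical item shape).
    """
    return {
        "page_content": item.get("text") or "",
        "metadata": {"source": item.get("url")},
    }


def crawl_and_query(start_url: str, question: str):
    """Crawl a site with an Apify Actor, index the pages, and ask a question."""
    # Imported here so the mapping helper above works without langchain installed.
    # Assumption: a legacy LangChain layout with these import paths.
    from langchain.document_loaders.base import Document
    from langchain.indexes import VectorstoreIndexCreator
    from langchain.utilities import ApifyWrapper

    # Both credentials are expected to be set as environment variables.
    for name in ("APIFY_API_TOKEN", "OPENAI_API_KEY"):
        if not os.environ.get(name):
            raise RuntimeError(f"Set the {name} environment variable first")

    # Run the Actor, wait for it to finish, and wrap its dataset in a document loader.
    apify = ApifyWrapper()
    loader = apify.call_actor(
        actor_id="apify/website-content-crawler",  # assumed Actor for this example
        run_input={"startUrls": [{"url": start_url}]},
        dataset_mapping_function=lambda item: Document(**to_langchain_fields(item)),
    )

    # Build the vector index from the crawled documents and query it.
    index = VectorstoreIndexCreator().from_loaders([loader])
    return index.query_with_sources(question)


# The mapping step can be checked locally, without credentials or a network call.
sample_item = {"url": "https://example.com", "text": "Hello"}
print(to_langchain_fields(sample_item))
```

Calling `crawl_and_query` requires both credentials and network access. Storing the page URL under `source` in the metadata is what would let a query with sources point back to the pages an answer came from.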

Take your first steps

So, now what? Well, here are some next steps for you!

- Visit the Apify website to explore the LlamaIndex and LangChain integrations. You can start by checking out the documentation or reading our blog posts on LangChain and other AI-related topics.

- Sign up for a free Apify account to start integrating web data into your AI projects and begin transforming your data processing and application development.