Imagine you're in a bar with a couple of your fellow programming buddies.

You're huddled together in the corner, geeking out over programming stuff. Over in the other corner is a group of loud, thuggish football fans, annoying everyone with their obnoxious behavior. Meanwhile, all the pretty girls are strutting their stuff on the dance floor, having a good time.

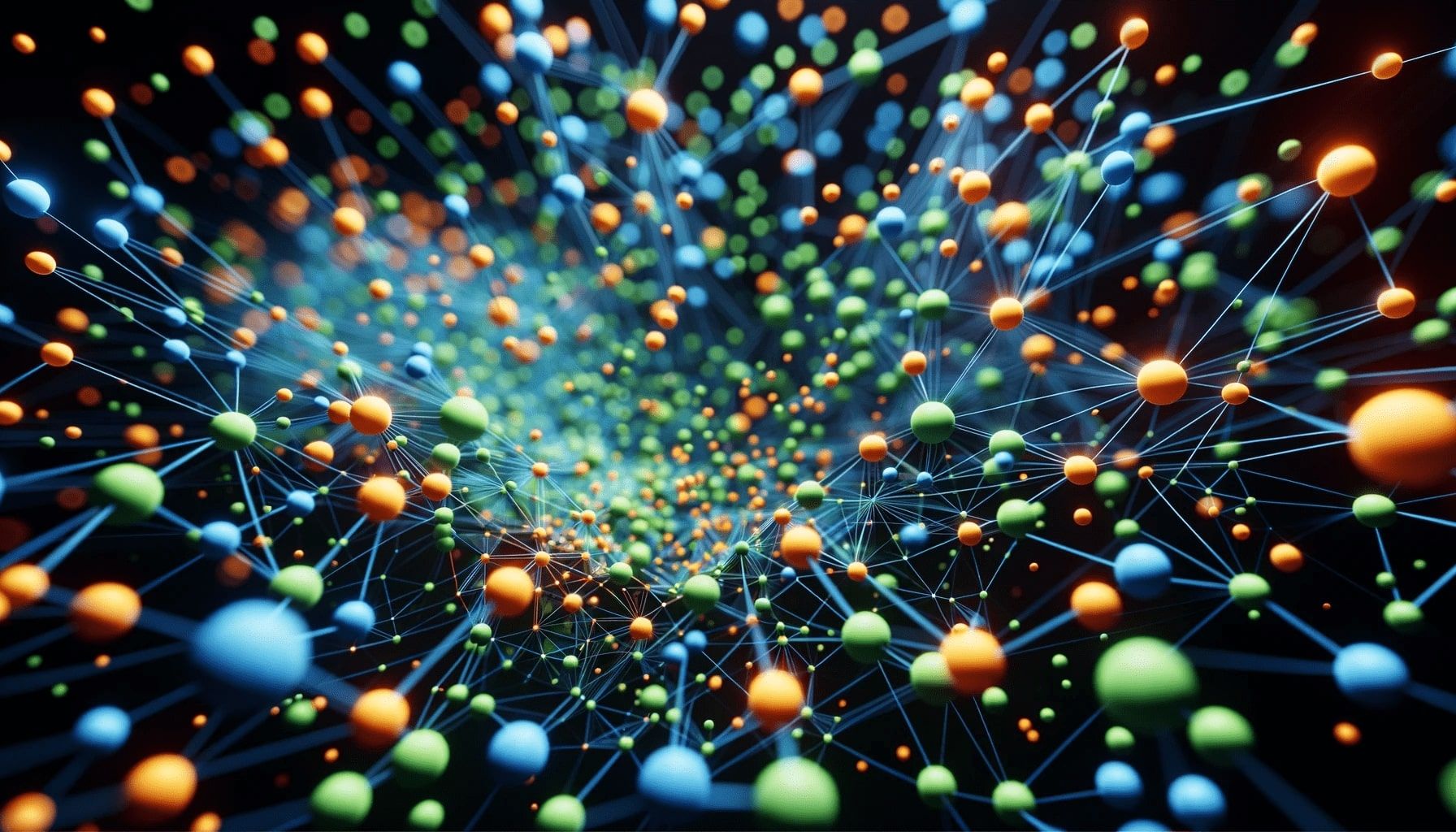

Notice how different people in this one space are grouped together based on similarities and shared interests.

In AI, embeddings work the same way: they group similar things together, and they can do it for virtually any type of data.

Embeddings represent data points (like words or even users) as vectors: lists of numbers that act as coordinates in a multi-dimensional mathematical space. They are generated so that similar items end up close together and dissimilar items end up far apart, much like the groups in the bar.
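To make "close together" concrete, here is a minimal sketch that measures closeness with cosine similarity, one common choice of measure. The three-dimensional vectors are invented for illustration. Real embeddings are learned from data and usually have hundreds of dimensions.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings, invented for illustration only
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.85, 0.75, 0.2],
    "apple": [0.1, 0.2, 0.9],
}

print(cosine_similarity(embeddings["king"], embeddings["queen"]))  # close to 1: similar
print(cosine_similarity(embeddings["king"], embeddings["apple"]))  # much lower: dissimilar
```

Cosine similarity compares only the direction of two vectors and ignores their length, which is why it is a popular default for embeddings. Euclidean distance and the plain dot product are also widely used.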

Applications of embeddings

Recommendation systems

You've probably watched a few movies on a streaming platform at some point in your life. Whenever you do this, the system tries to understand your taste by placing you in a certain position within its "taste space." Based on your position (and the position of movies you liked), it recommends other movies that are close by in this space.
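Here is a toy version of that idea. It assumes a hypothetical three-dimensional taste space where each axis loosely stands for a genre, although real embedding dimensions are rarely this interpretable. The user is placed at the average of the movies they liked, and the unwatched movies closest to that point are recommended. Averaging liked items is only one simple way to build a user vector. Production systems often learn user and item embeddings jointly.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical movie embeddings; axes loosely mean [sci-fi, romance, comedy]
movies = {
    "Space Thriller 1": [0.9, 0.1, 0.0],
    "Space Thriller 2": [0.8, 0.2, 0.1],
    "Space Thriller 3": [0.7, 0.1, 0.2],
    "Rom-Com 1": [0.1, 0.9, 0.3],
    "Rom-Com 2": [0.0, 0.8, 0.5],
}

def taste_vector(liked, movie_vectors):
    """Place the user at the average position of the movies they liked."""
    dims = len(movie_vectors[liked[0]])
    return [sum(movie_vectors[m][i] for m in liked) / len(liked) for i in range(dims)]

def recommend(liked, movie_vectors, k=2):
    user = taste_vector(liked, movie_vectors)
    unseen = [m for m in movie_vectors if m not in liked]
    # Rank unseen movies by how close they sit to the user's position
    unseen.sort(key=lambda m: cosine_similarity(user, movie_vectors[m]), reverse=True)
    return unseen[:k]

print(recommend(["Space Thriller 1", "Space Thriller 2"], movies))
# ['Space Thriller 3', 'Rom-Com 1']
```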

Search engines

When you type a query, the search engine translates it into a vector in its embedding space and fetches documents whose vectors are closest to it. The closer the semantic relationship between your query and a document, the more likely that document is to be relevant to your search.

Text generation

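Embeddings also help models capture the context of words. If a language model wants to continue the sentence "The king and the...", it converts each word into an embedding, processes those embeddings through many layers that mix in the surrounding context, and then assigns a probability to every word in its vocabulary. Words that relate to "king" in the embedding space, like "queen", tend to score higher. That, in a nutshell, is how LLMs like ChatGPT generate text.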
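Here is a heavily simplified sketch of the final scoring step. The context vector below is made up and stands in for what a real model computes from "The king and the" using many transformer layers. Each vocabulary word is scored by the dot product of that vector with the word's (also invented) embedding, and a softmax turns the scores into probabilities. Many models do score their vocabulary with a learned matrix in roughly this way, sometimes reusing the input embedding matrix. Everything upstream of the context vector is left out.

```python
import math

def softmax(scores):
    # Subtract the max before exponentiating for numerical stability
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical vocabulary embeddings (invented numbers)
vocab = {
    "queen": [0.8, 0.9, 0.1],
    "prince": [0.7, 0.8, 0.2],
    "banana": [0.0, 0.1, 0.9],
    "car": [0.1, 0.0, 0.8],
}

# Hypothetical context vector standing in for what a real model computes from "The king and the"
context = [0.9, 0.8, 0.1]

words = list(vocab)
# Score each candidate word by its dot product with the context vector
scores = [sum(c * w for c, w in zip(context, vocab[word])) for word in words]
probs = softmax(scores)

for word, p in sorted(zip(words, probs), key=lambda pair: pair[1], reverse=True):
    print(f"{word}: {p:.2f}")
```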

Word embeddings: why are they important for LLMs?

Despite the widespread (mis)use of the term 'AI' for large language models, LLMs are not intelligent. Machines, by their nature, don't understand text. They understand numbers (yet LLMs suck at math: go figure!). An NLP task, whether it's sentiment analysis, machine translation, or document classification, needs numerical data.

Word embeddings convert words into numbers, but not just any numbers. These numbers (or vectors) capture the semantics, or the meaning, of a word in relation to other words in a given dataset. A well-trained set of word embeddings will place words with similar meanings or contexts close to each other in this multi-dimensional space. The word "king", for example, might be close to "queen" and "monarch", but farther from "apple" or "car". These are what we call semantic relationships.


Semantic relationships

When we say "semantic relationships," we mean the connections between words that have similar meanings or associations.

Let's continue with the monarch example: consider the words "king," "man," "queen," and "woman." These words have relationships based on gender and monarchy. Good word embeddings can detect these relationships. They can tell us that the difference between "king" and "man" is similar to the difference between "queen" and "woman." In other words, the vector that takes you from "man" to "king" (roughly, "royalty") is about the same as the one that takes you from "woman" to "queen."
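The analogy can be checked with simple vector arithmetic. In this sketch the vectors are hypothetical and hand-picked so that the axes loosely mean "royalty", "female", and "fruit". Learned embeddings only approximate this kind of structure. The function computes "king" - "man" + "woman" and returns the nearest remaining word by cosine similarity, excluding the input words, as common analogy tools do.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical hand-picked vectors: [royalty, female, fruit]
emb = {
    "king": [0.9, 0.1, 0.0],
    "queen": [0.9, 0.9, 0.0],
    "man": [0.1, 0.1, 0.0],
    "woman": [0.1, 0.9, 0.0],
    "apple": [0.0, 0.2, 0.9],
}

def analogy(a, b, c, emb):
    """Answer 'a is to b as c is to ?' by finding the word nearest to b - a + c."""
    target = [vb - va + vc for va, vb, vc in zip(emb[a], emb[b], emb[c])]
    candidates = [w for w in emb if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine_similarity(target, emb[w]))

# "man is to king as woman is to ?", i.e. king - man + woman
print(analogy("man", "king", "woman", emb))  # queen
```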

This is super useful in various language tasks because it allows computers to recognize not just individual words but also how words relate to each other in meaning.

Dimensionality

While a word can be represented as a one-hot encoded vector the size of the entire vocabulary, embeddings compress this representation into a dense vector with far fewer dimensions.

Picture each word as a huge switchboard with as many switches as there are words in the vocabulary, where only the one switch belonging to that word is turned on. It's gigantic! If we have 100,000 words in our vocabulary, that's 100,000 switches for each word. This is what we call a "one-hot encoded vector".

Now, think about the mess and inefficiency of handling these giant "switchboards". They are not only impractical but also computationally heavy, and since every word's switchboard is equally different from every other word's, they say nothing about which words are similar. This is where lower-dimensional embeddings come into play: they make things far more manageable.

Word embeddings are like smart compressors. They take each word's "switchboard" and squeeze it down into a much smaller size, typically between 50 and 300 "switches" (dimensions) instead of tens of thousands. Transformer models tend to use more; BERT-base, for example, uses 768.
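Under the hood, the "compression" is a lookup table. Multiplying a one-hot vector by an embedding matrix picks out exactly one row of that matrix. The sketch below uses a hypothetical four-word vocabulary with made-up two-dimensional vectors and shows that both routes give the same result. Embedding layers such as Keras's Embedding essentially store the matrix and index into it directly, skipping the multiplication.

```python
vocab = ["king", "queen", "apple", "car"]  # hypothetical 4-word vocabulary
index = {word: i for i, word in enumerate(vocab)}

def one_hot(word):
    """A vector as long as the vocabulary with a single 1 at the word's index."""
    vec = [0] * len(vocab)
    vec[index[word]] = 1
    return vec

# Hypothetical embedding matrix: one row of 2 numbers per word (learned in practice)
embedding_matrix = [
    [0.9, 0.1],  # king
    [0.8, 0.3],  # queen
    [0.1, 0.9],  # apple
    [0.2, 0.7],  # car
]

def embed_via_matmul(word):
    """one_hot(word) multiplied by embedding_matrix, written out by hand."""
    oh = one_hot(word)
    dims = len(embedding_matrix[0])
    return [sum(oh[r] * embedding_matrix[r][c] for r in range(len(vocab))) for c in range(dims)]

def embed_via_lookup(word):
    # Only one entry of the one-hot vector is 1, so the product just selects a row
    return embedding_matrix[index[word]]

print(one_hot("queen"))           # [0, 1, 0, 0]
print(embed_via_matmul("queen"))  # [0.8, 0.3]
print(embed_via_lookup("queen"))  # [0.8, 0.3]
```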

Contextual information

Words don't live in isolation; they depend on the words around them to convey their full meaning.

Modern word embeddings, especially contextual ones, can grasp the meaning of a word based on the words that surround it in a sentence or a paragraph.

For instance, if you see the word "bank" in a sentence like "I deposited money in the bank," a contextual embedding knows it's talking about a financial institution. But if you see "I sat by the bank," it understands that it's referring to the side of a river. This ability to consider context makes embeddings incredibly powerful for tasks like language comprehension, translation, and text generation because they capture the subtleties and nuances of meaning that words carry in different situations.

These contextual embeddings are a big part of why LLMs can handle the subtleties of language and interpret the same word differently in different sentences.

How to create embeddings (tools and methods)

Now that we've established the necessity of embeddings, the question naturally arises: how on earth do you generate them?

Fortunately, there's a range of tools, methods, libraries, and platforms out there for creating embeddings. Here are some of the most popular, together with some of the features they offer:

Word2Vec

Word2Vec is a popular method developed by Google that uses neural networks to learn word representations. It can capture semantic relationships between words.

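- Continuous Bag of Words (CBOW) & Skip-Gram: two architectures for producing distributed representations of words. CBOW predicts a word from its surrounding context; Skip-Gram does the reverse, predicting the surrounding words from a given word.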

- Captures semantic relationships. For instance, the vector math "King" - "Man" + "Woman" might get you close to "Queen".

- Generates a fixed-size dense vector for each word.
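To see what the two Word2Vec architectures learn from, here is a sketch that only builds their training examples from a sentence, using a context window of one word on each side in the demo. The neural network that is then trained on these examples, with tricks such as negative sampling, is left out. In practice you would use an implementation such as Gensim's Word2Vec class, whose sg parameter switches between CBOW and Skip-Gram.

```python
def skipgram_pairs(tokens, window=2):
    """(center, context) pairs: Skip-Gram predicts each context word from the center word."""
    pairs = []
    for i, center in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs

def cbow_examples(tokens, window=2):
    """(context words, center) examples: CBOW predicts the center word from its context."""
    examples = []
    for i, center in enumerate(tokens):
        context = [tokens[j] for j in range(max(0, i - window), min(len(tokens), i + window + 1)) if j != i]
        examples.append((context, center))
    return examples

sentence = "the king rules the land".split()
print(skipgram_pairs(sentence, window=1)[:4])
# [('the', 'king'), ('king', 'the'), ('king', 'rules'), ('rules', 'king')]
print(cbow_examples(sentence, window=1)[1])
# (['the', 'rules'], 'king')
```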

GloVe (Global Vectors for Word Representation)

Developed at Stanford, GloVe is an unsupervised learning algorithm for obtaining vector representations of words. It does this by aggregating global word-word co-occurrence statistics from a corpus.

- Focuses on word co-occurrence statistics.

- Tries to capture meaning based on how frequently words appear together in large corpora.

- Generates fixed-size dense vectors for words.
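GloVe's first step is building a word-word co-occurrence table. The sketch below counts co-occurrences within a window in a tiny hypothetical corpus. Following the GloVe paper, a pair of words that are d positions apart adds 1/d instead of 1, so close neighbours count for more. The second step is left out: roughly, fitting vectors so that the dot product of two word vectors, plus bias terms, approximates the logarithm of their co-occurrence count.

```python
from collections import defaultdict

def cooccurrence_counts(corpus, window=2):
    """Distance-weighted counts of word pairs appearing within `window` words of each other."""
    counts = defaultdict(float)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            for d in range(1, window + 1):
                if i + d < len(tokens):
                    other = tokens[i + d]
                    # Record the pair in both directions so the table is symmetric
                    counts[(word, other)] += 1.0 / d
                    counts[(other, word)] += 1.0 / d
    return counts

corpus = ["the king wore the crown", "the queen wore the crown"]
counts = cooccurrence_counts(corpus)
print(counts[("wore", "crown")])  # 1.0 (0.5 from each sentence, 2 words apart)
```

Notice that "king" and "queen" end up with identical counts next to "wore": shared contexts like this are what pull their vectors together.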

BERT

BERT (Bidirectional Encoder Representations from Transformers) is a deep learning model that generates contextualized word embeddings. Unlike Word2Vec or GloVe, which assign each word a single fixed embedding no matter where it appears, BERT produces embeddings that take into account the context in which a word appears.

- Contextual embeddings that represent words based on their meaning in a sentence.

- Deep bidirectional transformer architecture.

- Can handle polysemy (words with multiple meanings).

Hugging Face

Hugging Face's Transformers is a wildly popular deep learning library that offers a wide array of pre-trained models for NLP tasks, from BERT and GPT-2 to newer models like T5 and BART.

- Comes with tokenizers for different models to ensure that text is preprocessed in a manner consistent with the model's original training data.

- Beyond offering pre-trained models, the library makes it easy to fine-tune them on custom datasets.

- Provides out-of-the-box solutions for tasks like text classification, named entity recognition, and translation, simplifying the process for developers.

- Works with both PyTorch and TensorFlow, which gives users the freedom to choose their preferred framework.


TensorFlow

Developed by Google, TensorFlow is designed to provide a flexible platform for building and deploying ML models.

- Supports training on multiple GPUs and TPUs, which enables faster computations.

- Eager execution, the default since TensorFlow 2, runs operations immediately, like standard Python code, which makes debugging more intuitive.

- TensorBoard, an integrated visualization tool, lets you monitor the training process, visualize model architecture, and more.


Keras

Keras acts as an interface for TensorFlow, which makes it simpler to develop deep learning models.

- Comes with an integrated Embedding layer, which simplifies the process of creating word embeddings.

- Allows you to use pre-trained embeddings or train them from scratch.

- Converts tokenized text to dense vectors of fixed size.

- Provides sequential and functional APIs for building complex architectures.

- Offers various pre-trained models for tasks like classification, segmentation, etc., which can be easily imported and fine-tuned.


Gensim

A Python library specialized for topic modeling and document similarity analysis, Gensim provides tools for working with Word2Vec and other embedding models.

- Designed to work with large text corpora without loading them into memory all at once.

- Supports Word2Vec, FastText, and other popular embedding algorithms out of the box.

- Known for its implementation of the Latent Dirichlet Allocation (LDA) algorithm for topic modeling.

- Provides functionalities to compute document similarities based on their semantic meanings.

- Uses a dictionary to manage and map tokens to their IDs, which can be updated without having to recompute the entire dictionary.

- Supports incremental training, meaning you can update your model with new data without starting from scratch.

How LLMs generate embeddings

Deep architectures

LLMs use deep neural architectures, often transformer-based, which allow them to consider a wide context when generating embeddings. They don't just look at one or two words; they consider the entire context, capturing the complex patterns and relationships in language.
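The mechanism that lets transformers take the whole context into account is self-attention. Below is a stripped-down sketch: each token's new vector is a weighted average of all the token vectors, with weights given by a softmax over scaled dot products. It deliberately skips the learned query, key, and value projections and the multiple attention heads that real models use, and the two-dimensional "money", "river", and "bank" vectors are hypothetical. Even so, the same "bank" vector comes out different depending on its neighbours.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Simplified scaled dot-product self-attention.

    Real transformers first project each vector into separate learned query,
    key and value vectors and run several attention heads in parallel; here
    the raw vectors play all three roles.
    """
    d = len(vectors[0])
    outputs = []
    for q in vectors:
        # How strongly this token attends to every token, itself included
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        # The new vector is a weighted average of all the token vectors
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(d)])
    return outputs

# Hypothetical 2-D embeddings: axis 0 ~ finance, axis 1 ~ nature
money, river, bank = [1.0, 0.0], [0.0, 1.0], [0.5, 0.5]

print(self_attention([money, bank])[1])  # [0.75, 0.25]: "bank" pulled toward finance
print(self_attention([river, bank])[1])  # [0.25, 0.75]: "bank" pulled toward nature
```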

Token and position embeddings

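In models like BERT, each token (a word or a piece of a word) is first represented by a token embedding. Think of these as traditional word embeddings: a lookup table learned during training, which on its own gives a token the same vector wherever it appears. The model then adds position embeddings, which indicate the token's position in the sentence (BERT also adds segment embeddings to tell two input sentences apart). This way, the model knows the order of words, and the transformer layers that follow can work out how the words relate to each other, which is where the representation becomes contextual.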
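Here is a small sketch of how the two kinds of embeddings combine: the model adds them element by element before the first transformer layer. BERT learns its position embeddings as a second lookup table; to keep the example self-contained, this sketch swaps in the fixed sinusoidal position vectors from the original Transformer paper instead. The token vectors are invented.

```python
import math

def sinusoidal_position(pos, dim):
    """Fixed position vector from the original Transformer paper (BERT learns its own instead)."""
    vec = []
    for i in range(dim):
        # Each pair of dimensions shares a frequency; even ones use sin, odd ones use cos
        angle = pos / (10000 ** (2 * (i // 2) / dim))
        vec.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return vec

# Hypothetical 4-dimensional token embeddings (learned in a real model)
token_embeddings = {
    "the": [0.1, 0.2, 0.3, 0.4],
    "king": [0.9, 0.1, 0.5, 0.2],
}

def input_embeddings(tokens):
    """Input to the first layer: token embedding + position embedding, element-wise."""
    dim = len(next(iter(token_embeddings.values())))
    return [
        [t + p for t, p in zip(token_embeddings[tok], sinusoidal_position(pos, dim))]
        for pos, tok in enumerate(tokens)
    ]

x = input_embeddings(["the", "king", "the"])
# Same word at different positions gives different input vectors
print(x[0] != x[2])  # True
```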

Pre-training and fine-tuning

Generating embeddings isn't a standalone step, though. In LLMs, the embeddings are learned as part of the model's pre-training, and they can be refined further through fine-tuning.

For models like BERT, during pre-training, some words in a sentence are masked, which is to say they're hidden, and the model is trained to predict them based on surrounding words. This helps the model learn contextual representations.
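Here is a rough sketch of BERT-style masking. BERT selects about 15% of tokens for prediction. Of those, 80% are replaced with a [MASK] token, 10% with a random token, and 10% are left unchanged, because [MASK] never appears during fine-tuning. This version makes three simplifications. It flips a coin per token rather than sampling an exact 15%. It works on whole words instead of BERT's WordPiece sub-word tokens. The demo also uses a higher masking rate so the effect is visible on a short sentence.

```python
import random

def mask_tokens(tokens, vocab, mask_rate=0.15, rng=None):
    """BERT-style masking sketch that returns (inputs, labels).

    labels holds the original token at each selected position and None elsewhere,
    so a training loss would only be computed where the model has to guess.
    """
    rng = rng or random.Random()
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_rate:
            labels.append(tok)
            roll = rng.random()
            if roll < 0.8:
                inputs.append("[MASK]")           # 80%: hide the token
            elif roll < 0.9:
                inputs.append(rng.choice(vocab))  # 10%: swap in a random token
            else:
                inputs.append(tok)                # 10%: leave it unchanged
        else:
            inputs.append(tok)
            labels.append(None)
    return inputs, labels

sentence = "the king and the queen ruled the land".split()
inputs, labels = mask_tokens(sentence, vocab=sorted(set(sentence)), mask_rate=0.3, rng=random.Random(0))
print(inputs)
print(labels)
```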

After pre-training on massive datasets, models can be fine-tuned on specific tasks. This allows the embeddings to be further refined for particular applications.

But hang on! We forgot the first step!

Collecting data to create embeddings

Before you can train high-quality embeddings, you'll need to get your paws on vast amounts of data. Then that data needs to be ingested and prepared for machine learning purposes. So the first step to generating and training embeddings is data acquisition and pre-processing. Here's a brief overview of how to go about it:

- Collect text data: Use open datasets, web scraping, or access APIs to collect textual data.

- Clean the data: Remove noise and irrelevant information (such as leftover HTML, boilerplate, and duplicates), and, where appropriate, normalize words with stemming or lemmatization.

- Label the data (if needed): While embeddings often use unsupervised learning, some models might benefit from labeled data. This can be done manually, via crowdsourcing, or using semi-supervised techniques.
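As an illustration of the cleaning step, here is a minimal sketch for scraped web text. It strips leftover HTML tags and URLs, decodes HTML entities, lowercases, and drops punctuation. It is a starting point rather than a complete pipeline. Notably, it keeps only ASCII letters, which would mangle non-English text, and it leaves stemming and lemmatization to dedicated NLP libraries such as NLTK or spaCy.

```python
import html
import re

def clean_text(raw: str) -> str:
    """A minimal cleaning pass for scraped text."""
    text = re.sub(r"<[^>]+>", " ", raw)        # drop leftover HTML tags
    text = html.unescape(text)                  # turn &amp; etc. back into characters
    text = re.sub(r"https?://\S+", " ", text)   # drop URLs
    text = text.lower()
    text = re.sub(r"[^a-z0-9'\s]", " ", text)   # keep ASCII letters, digits, apostrophes
    return re.sub(r"\s+", " ", text).strip()    # collapse whitespace

print(clean_text("<p>Kings &amp; Queens: see https://example.com!</p>"))
# kings queens see
```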

The crucial last step: every embedding needs a home

So, now we've covered the beginning (data acquisition) and the middle (generating and training embeddings). But the vital final part is storing the embeddings, and that's where vector databases come in.

Storing embeddings in a vector database

Once embeddings are generated, they can be stored in a vector database for quick retrieval and similarity search. Because embeddings are high-dimensional vectors, traditional databases aren't optimized for searching them. A vector database lets you find the most similar items quickly, scale to billions of vectors while keeping searches fast, and avoid scanning the entire dataset for every query. To store embeddings in a vector database, you typically:

- Convert embeddings to a suitable format (like NumPy arrays).

- Use database-specific APIs or tools to insert the vectors.

- Index the database for efficient search.
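To show what insert, index, and search boil down to, here is a minimal in-memory stand-in for a vector database. It is a hypothetical class, not any product's API, and it searches by brute force, comparing the query with every stored vector. Real vector databases build approximate nearest-neighbour indexes (such as HNSW or IVF) precisely so they don't have to do that full scan over millions or billions of vectors.

```python
import math

class TinyVectorStore:
    """Hypothetical in-memory stand-in for a vector database (not any product's API).

    Search is exact brute force: the query is compared with every stored vector.
    """

    def __init__(self):
        self.ids = []
        self.vectors = []

    @staticmethod
    def _normalize(vec):
        norm = math.sqrt(sum(x * x for x in vec))
        return [x / norm for x in vec]

    def insert(self, item_id, vec):
        # Store unit-length vectors so a plain dot product equals cosine similarity
        self.ids.append(item_id)
        self.vectors.append(self._normalize(vec))

    def query(self, vec, k=3):
        """Return the k most similar (item_id, score) pairs, best first."""
        q = self._normalize(vec)
        scored = [
            (sum(a * b for a, b in zip(q, v)), item_id)
            for item_id, v in zip(self.ids, self.vectors)
        ]
        scored.sort(reverse=True)
        return [(item_id, score) for score, item_id in scored[:k]]

store = TinyVectorStore()
store.insert("doc-about-monarchs", [0.9, 0.8, 0.1])
store.insert("doc-about-fruit", [0.1, 0.2, 0.9])
store.insert("doc-about-cars", [0.3, 0.1, 0.6])

print(store.query([0.85, 0.75, 0.2], k=2))
```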

Examples of vector databases: Pinecone, Milvus, Weaviate, Chroma, FAISS (strictly speaking, FAISS is a similarity-search library rather than a full database).

You can read more about these and other vector databases in What is Pinecone? and 6 open-source Pinecone alternatives

Let's end where we began

Now you know what embeddings are, how they work, and how to generate and use them. But where does Apify fit into all this?

We gave the game away right at the start:

Apify is all about collecting the right data at scale in the most efficient possible way. So if you need a web scraping platform to extract large volumes of real-time web data to generate and train embeddings and other AI solutions, your project begins with Apify.