If you’re using Google Maps for lead generation - whether you’re in SaaS sales, running a lead gen agency, organizing events, or preparing B2B prospecting - you’ve probably hit some limits. Google's Places API caps Text Search at 60 results across all pages, while Nearby Search returns up to 20 per query. The Google Maps interface itself shows only about 120 listings per search. To get more results, you’d need to repeatedly zoom into the map's subsections, which would take a lot of time and effort.

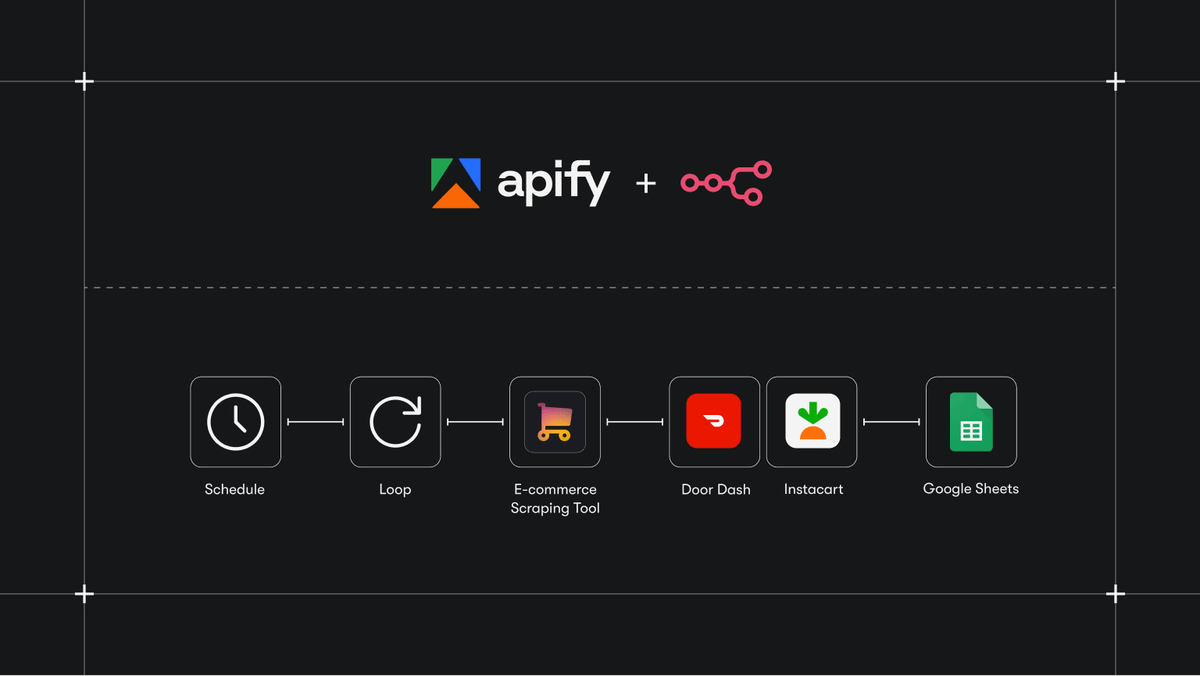

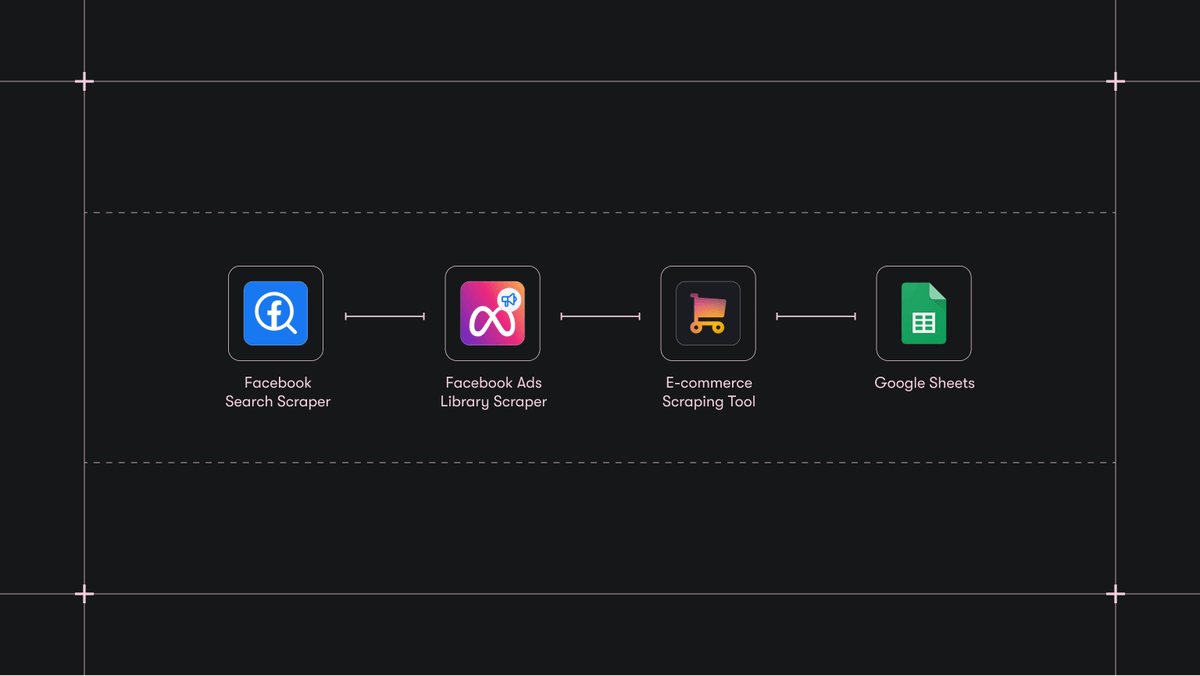

No matter how big your search area is, the same limits apply, costing you valuable leads in the process. Fortunately, you can overcome these obstacles with Google Maps Scraper, one of 30,000+ ready-made tools on Apify, the largest marketplace of tools for AI.

Google Maps limitations for scraping

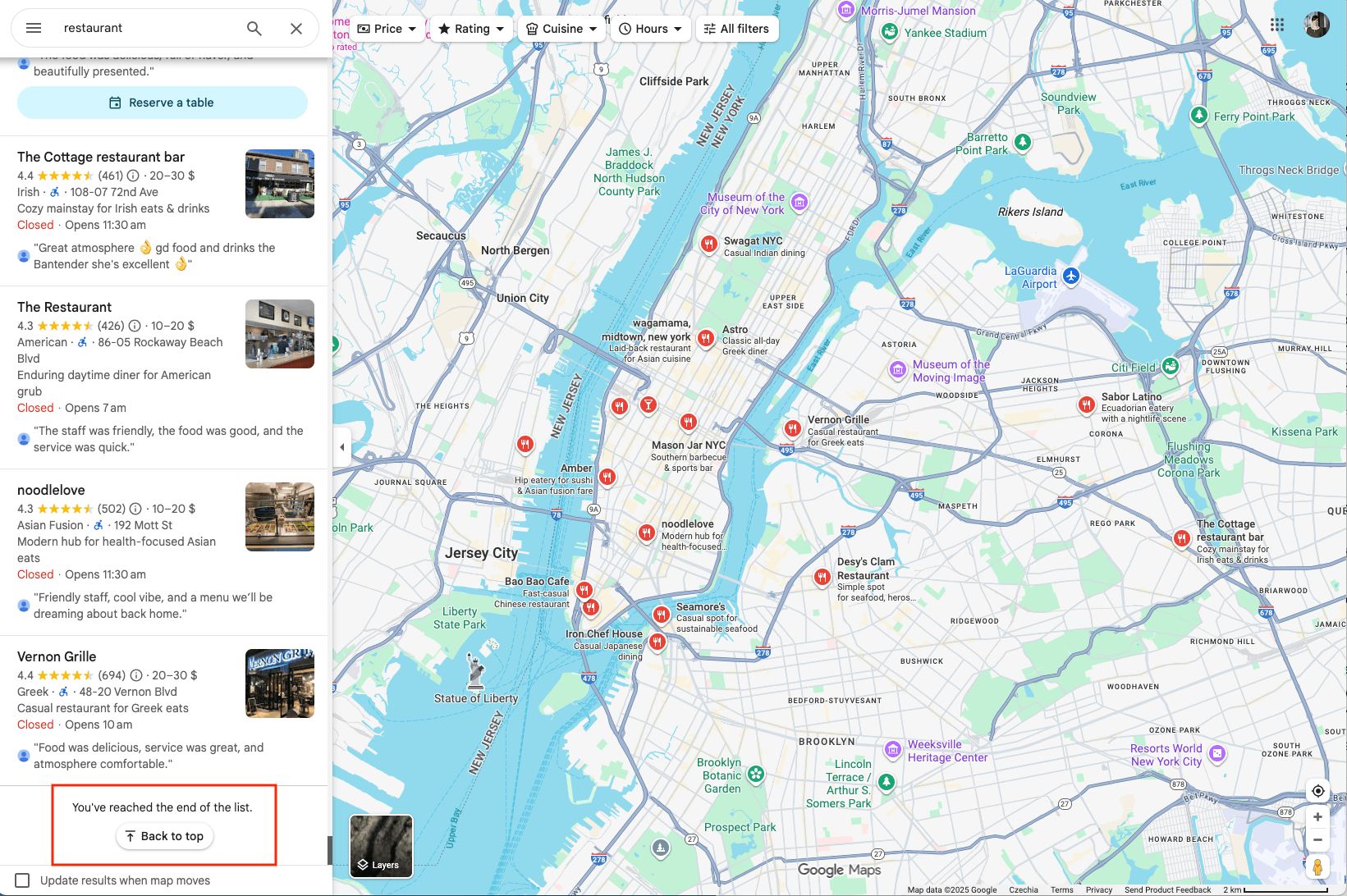

The Google Maps website's results cap is a UX decision: showing every single pin on a large map would clutter the screen and overwhelm users. While this doesn’t affect everyday map browsing, it makes scraping business data at scale difficult, because it effectively hides thousands of potential leads until you zoom in to a smaller area. Take New York City’s restaurants as an example: when browsing the Google Maps website as a user, you might notice that no matter how many places there are in a city, at some point the sidebar on the left will say "You’ve reached the end of the list."

If you count how many items are actually on this list, you will always end up with 120 at most. The same thing will happen if you try to search a bigger area (a state, for example).

How to overcome Google Places API limits

Google Maps Scraper not only helps you get past the 120-listings limit, it also generates more leads by running multiple location-targeted queries automatically, captures business info (names, phone numbers, websites, opening hours), and lets you enrich the data further with emails, social profiles, and lead contact details via paid add-ons. You can then export everything as clean data. The tool can double as both a scraper and an unofficial Google Maps API or geolocation API. It can find or scrape hundreds of thousands of places at a time, leaving no stone unturned.

Apify's Google Maps API gives you several ways to scrape the same location, in two groups: by creating a Custom geolocation (Circle, Polygon, or Multipolygon) or by using Location parameters (search term plus city, or search term plus extended address). Each comes with a bit of a learning curve, so let's break them down.

1. Create a custom area by using pairs of coordinates

This is the more complex way to use Google Maps Scraper, but it also gives you the most control: defining the area by geolocation lets you cover exactly the map area you want, no matter how big or small. Here are a few shapes you can create using the Custom geolocation section of Google Maps Scraper.

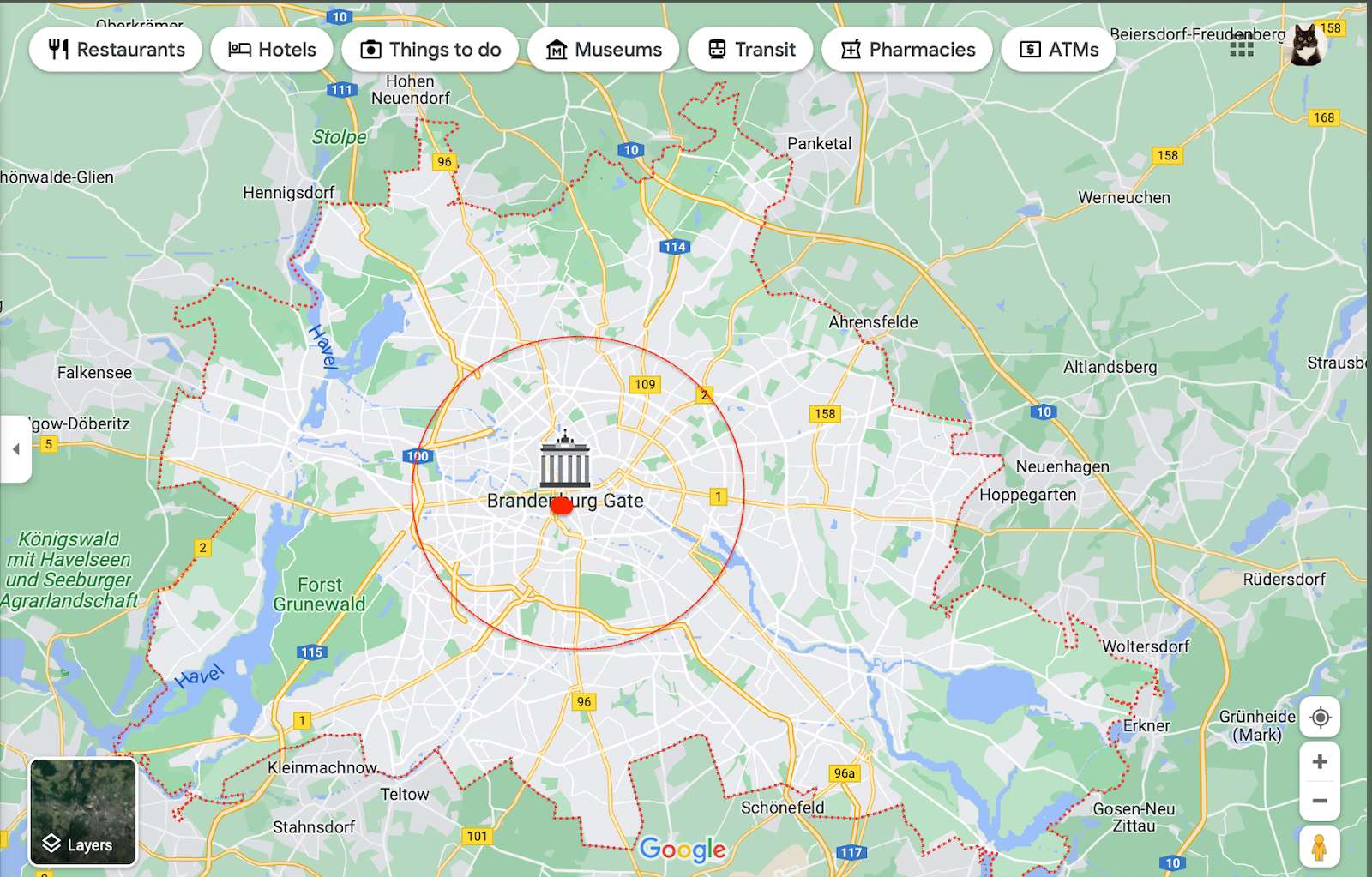

Circle

- If you don’t care about scraping places on the outskirts, the circle is your best option.

This is where we dive into the geometry-meets-JavaScript part. A circle feature is very useful for scraping places in specific, typically circular and dense areas, such as the city center.

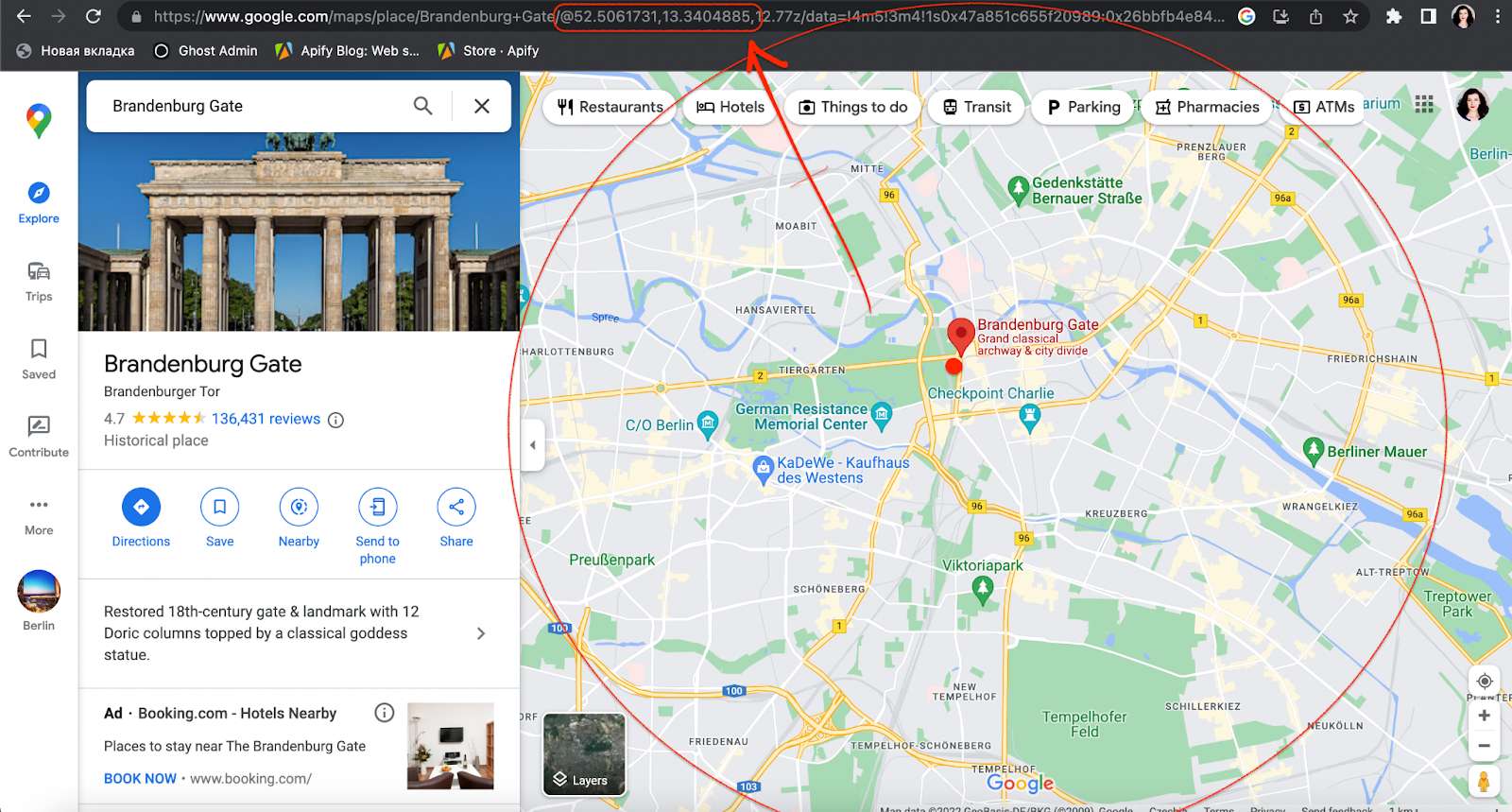

First, define the center of your circle on Google Maps (its latitude and longitude). The easiest way to find these coordinates is directly in the URL.

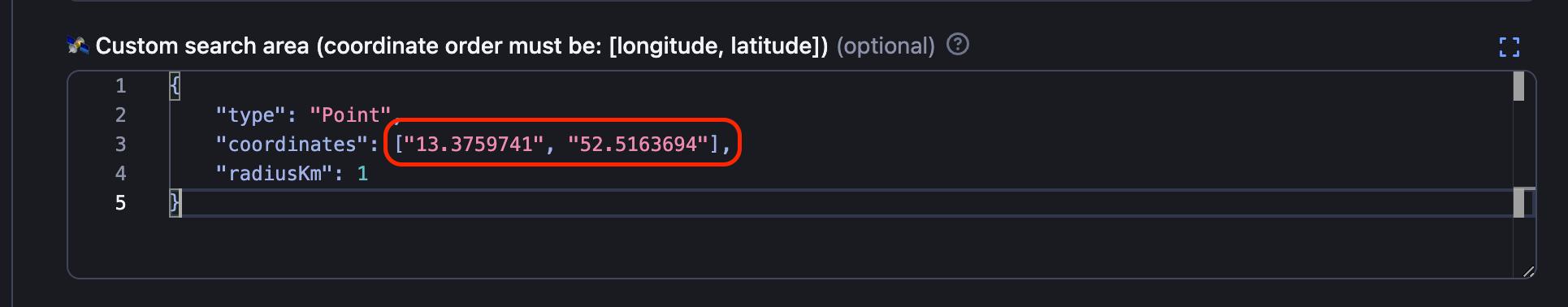

Then, add your circle coordinates into the Custom search area field. Don’t forget to set the radius in kilometers to define how far your circle should reach.
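
If you prefer to prepare the circle programmatically, here is a minimal Python sketch. It pulls the latitude, longitude, and zoom out of a Google Maps URL and arranges them as a GeoJSON-style point with a radius. The function names are hypothetical, and the `radiusKm` key and overall object shape are assumptions about what the Custom search area field accepts, so check the Actor's readme before relying on them.

```python
import re

# Matches the "@lat,lng,zoomz" segment of a Google Maps URL.
_MAPS_URL_RE = re.compile(r"@(-?\d+(?:\.\d+)?),(-?\d+(?:\.\d+)?),(\d+(?:\.\d+)?)z")


def parse_maps_url(url):
    """Return (latitude, longitude, zoom) read from a Google Maps URL."""
    match = _MAPS_URL_RE.search(url)
    if match is None:
        raise ValueError("no '@lat,lng,zoomz' segment found in the URL")
    lat, lng, zoom = (float(group) for group in match.groups())
    return lat, lng, zoom


def circle_area(url, radius_km):
    """Build a circle search area: a GeoJSON-style Point plus a radius in km."""
    if radius_km <= 0:
        raise ValueError("radius_km must be positive")
    lat, lng, _zoom = parse_maps_url(url)
    # Longitude goes first and latitude second (the reverse of the URL order).
    # "radiusKm" is an assumed key name, not confirmed by this article.
    return {"type": "Point", "coordinates": [lng, lat], "radiusKm": radius_km}


# Illustrative URL centered roughly on Midtown Manhattan.
area = circle_area("https://www.google.com/maps/@40.7580,-73.9855,14z", 2)
print(area)
```

Note the swap: the URL lists latitude first, while the resulting object lists longitude first.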

Polygon

- Polygon is perfect for scraping places from custom or unusual shapes (a district, a peninsula or an island, for example). It can have as many points as you need (triangle, square, pentagon, hexagon, etc.)

Do not be intimidated - a polygon uses the same principle for interacting with Google Maps geolocation as the circle. The only difference is that you have to add more than one coordinate pair.

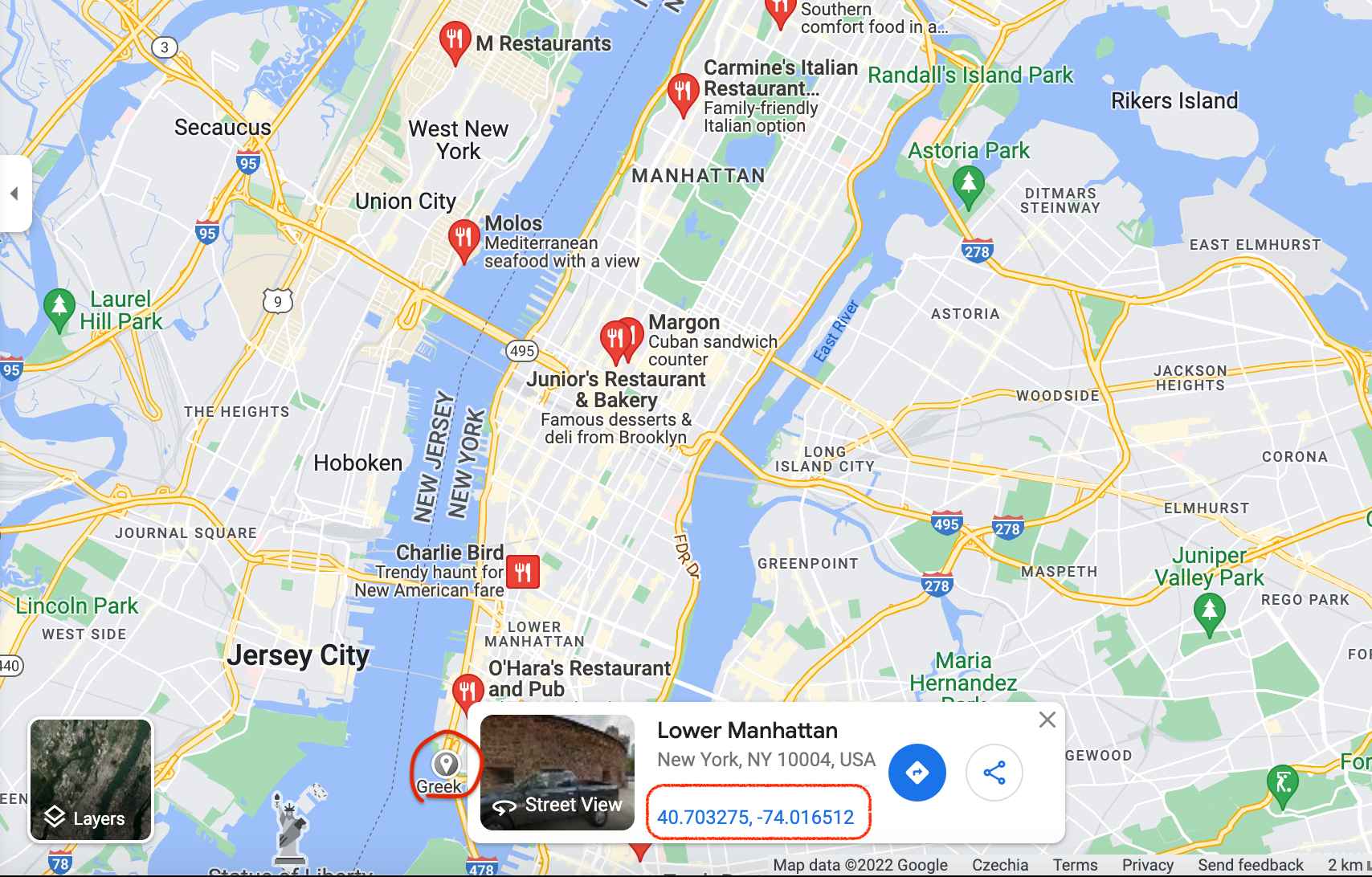

To form a free polygon, just pick a few different points on the map (at least three) and take note of their coordinates. You can find any pair of coordinates by clicking anywhere on the map:

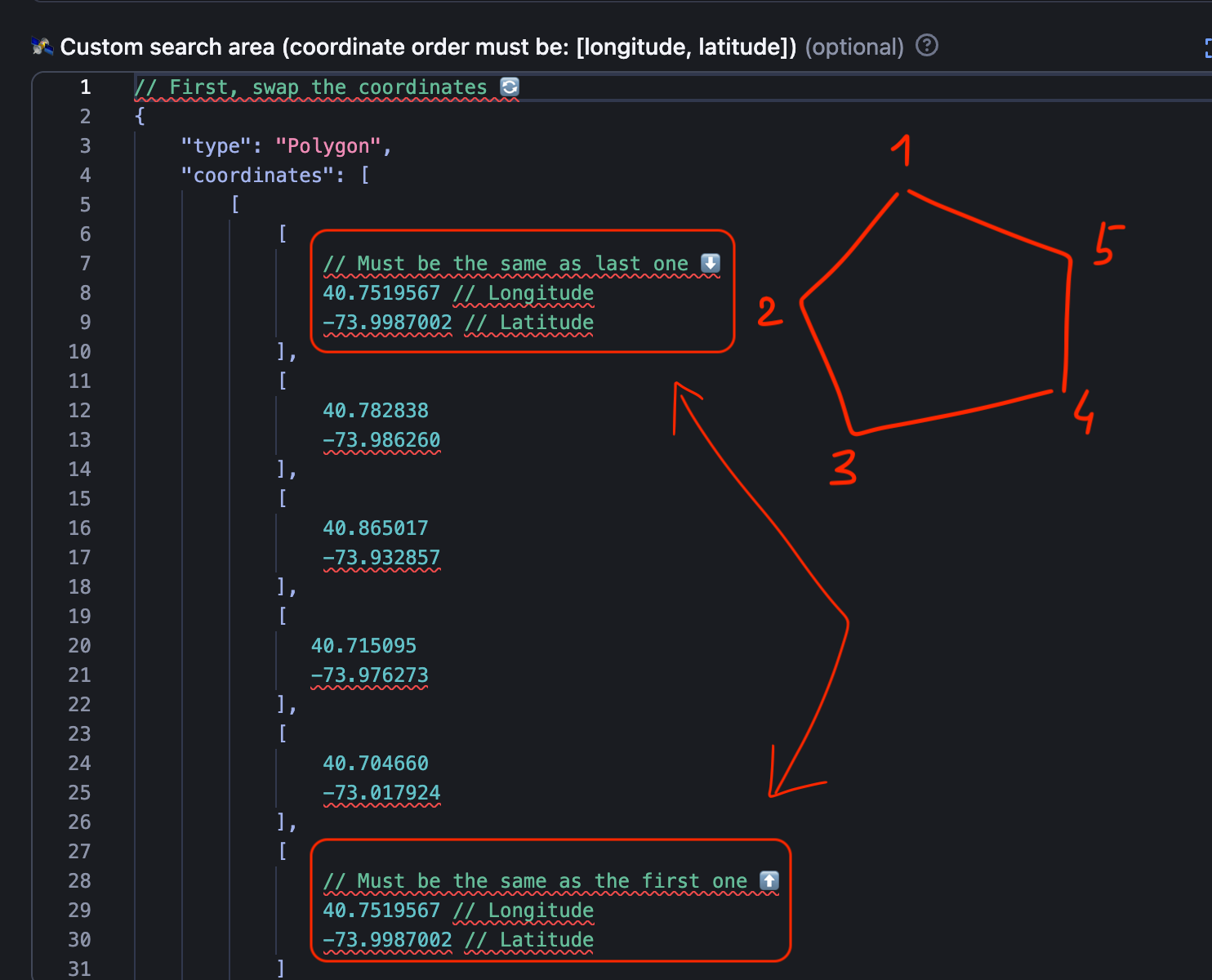

You can add as many points as you want to define your area in the most accurate shape possible. We're gonna go with the pentagon shape. Now, copy and paste each coordinate pair in a sequence into the Custom search area. This is what creating a pentagon for part of Manhattan would look like:

Don’t forget to close the polygon by making sure the first and the last coordinates are the same. In full, the coordinates have to follow three rules:

1. They have to come in pairs (one longitude and one latitude for each point).

2. The first pair and the last pair of coordinates have to be the same (thus completing the shape of the area).

3. They have to be reversed compared to the coordinates on the Google Maps website. The first field must be longitude ↕️, the second field must be latitude ↔️.

If in doubt, use the readme 🔗 as your formatting guide.
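
The three rules above can be sketched in a few lines of Python. This is only an illustration: `polygon_area` is a hypothetical helper, the pentagon points are rough made-up coordinates around Manhattan, and the output follows the standard GeoJSON Polygon layout, which we assume is what the Custom search area field expects.

```python
def polygon_area(points_lat_lng):
    """Build a GeoJSON Polygon from (latitude, longitude) pairs copied from Google Maps."""
    points = list(points_lat_lng)
    # Ignore a closing point if the caller already repeated the first pair.
    if len(points) > 1 and points[0] == points[-1]:
        points = points[:-1]
    if len(points) < 3:
        raise ValueError("a polygon needs at least three distinct points")
    # Rules 1 and 3: one pair per point, swapped to [longitude, latitude].
    ring = [[lng, lat] for lat, lng in points]
    # Rule 2: repeat the first pair at the end to close the shape.
    ring.append(list(ring[0]))
    return {"type": "Polygon", "coordinates": [ring]}


# Rough, illustrative pentagon; not an accurate outline of Manhattan.
pentagon = polygon_area([
    (40.7003, -74.0200),
    (40.7100, -73.9760),
    (40.7480, -73.9650),
    (40.7750, -73.9900),
    (40.7550, -74.0100),
])
print(pentagon["coordinates"][0])
```

Five points go in and six pairs come out, because the first pair is repeated to close the ring.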

Now click Start to begin extracting the data. Of course, we could add even more points to this shape to make it more accurate for the island, but you get the gist. You can choose to scrape Google Maps using polygons if your priority is speed, consuming fewer platform credits, or scraping a specific area with unusual geometry.

Multipolygon

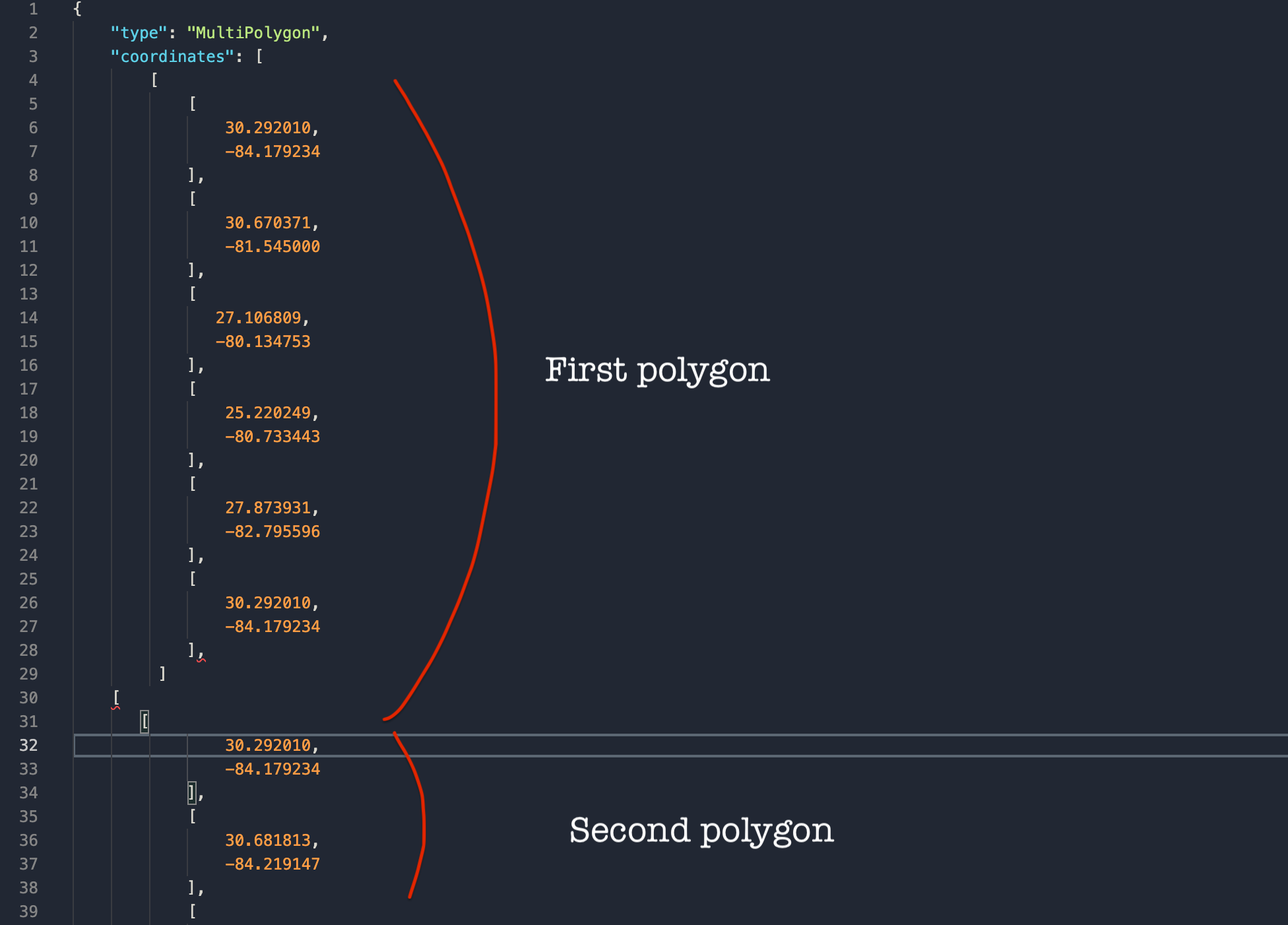

- It is best used for areas that are difficult to fit into one polygon or areas that are quite far away geographically but still have to be scraped together (for instance, an island together with the mainland).

The most complicated and most flexible option in Custom geolocation is Multipolygon. It is not a scraping option for everyone, but it can definitely solve a few headaches.

For instance, this multipolygon includes two polygons, since that seems to be the only way to encompass all of Florida. And even though you, as a user, see no Google Maps pins displayed on this map, Google Maps Scraper will find them all.

So you do the same thing as with the polygons, but two or more times as needed. Here's how you paste the coordinates of a multipolygon (by combining two or more polygon shapes) into Google Maps Scraper. The three rules above on how to insert the coordinates apply to multipolygons as well.
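
As a rough sketch of that combination, the Python below wraps several closed rings into one GeoJSON MultiPolygon. The helper names are hypothetical, and the two Florida shapes are crude illustrative quadrilaterals rather than real borders.

```python
def closed_ring(points_lat_lng):
    """Turn (latitude, longitude) pairs into a closed [longitude, latitude] ring."""
    points = list(points_lat_lng)
    if len(points) > 1 and points[0] == points[-1]:
        points = points[:-1]
    if len(points) < 3:
        raise ValueError("each polygon needs at least three distinct points")
    ring = [[lng, lat] for lat, lng in points]
    ring.append(list(ring[0]))  # first pair repeated to close the shape
    return ring


def multipolygon_area(polygons):
    """Combine several point lists into one GeoJSON MultiPolygon."""
    if not polygons:
        raise ValueError("at least one polygon is required")
    # GeoJSON nests one more level here: a list of polygons, each a list of rings.
    return {
        "type": "MultiPolygon",
        "coordinates": [[closed_ring(points)] for points in polygons],
    }


# Crude stand-ins for the Florida panhandle and peninsula (illustrative only).
panhandle = [(31.0, -87.6), (31.0, -85.0), (29.6, -85.0), (30.2, -87.6)]
peninsula = [(30.7, -85.0), (30.7, -81.4), (25.0, -80.0), (25.0, -82.0)]
florida = multipolygon_area([panhandle, peninsula])
print(len(florida["coordinates"]), "polygons")
```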

2. Choose the location using regular toponymy

This method of Google places scraping is a better choice if you don't need to customize the area you're scraping. The area is already defined by its name - a city, a region, a zip code area, or even an entire country.

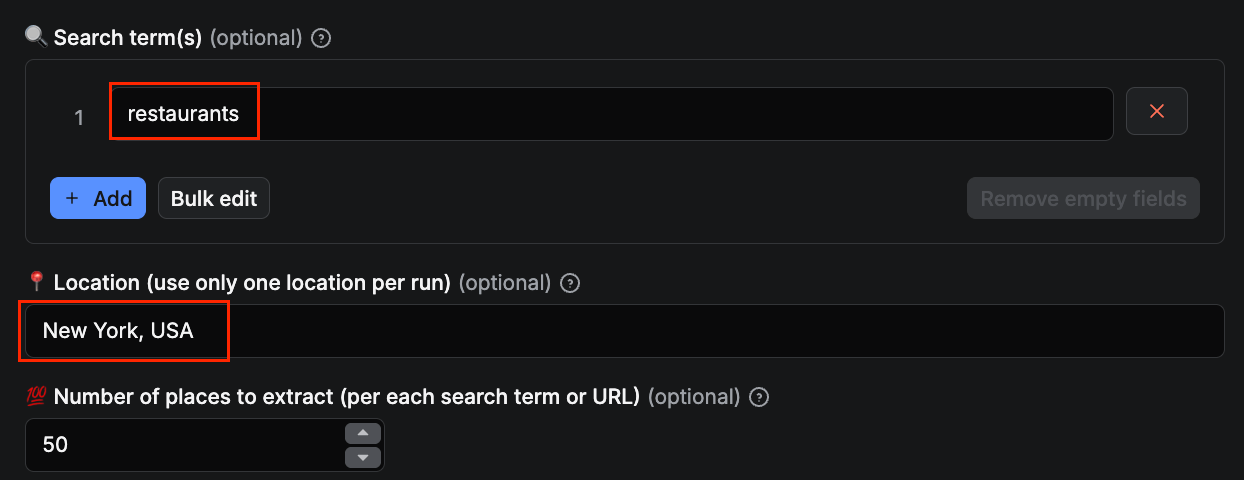

Where + What to scrape (Search term + city)

- Perfect for scraping trials and fast results.

This method is much easier than geolocation settings. Just indicate what you are searching for (restaurants) and where (New York). Then hit Start, and the scraper will do all the work and extract every place that matches your search.

1. Use only one location per search. You can have multiple search terms, but they all have to share that single location.

2. Don't put the location and the search term into the same field; keep them separate. If you combine them, the scraper will still work, but it won't overcome the limit of 120 places per map.

3. Don't enter only a location without a search term. Otherwise, you will scrape every possible place in that area instead of a specific category of places.

A Search term can be anything. It can be a type of place: restaurants, cafes, vegan museums, gas stations, pubs, bakeries, ATMs - whatever category you’re searching for. It can also be the name of a place: Starbucks, Zara, or Pizza Hut. Here are just a few examples of Search terms:

You can also use search terms in languages other than English.
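
If you start runs from code rather than from the form, the same rules can be checked before the run begins. The sketch below is a hedged example: `build_search_input` is a hypothetical helper, and the `searchStringsArray` and `locationQuery` keys are our assumption about the Actor's input names, so confirm them against the input schema.

```python
def build_search_input(search_terms, location):
    """Build a run input with separate search terms and a single location."""
    terms = [term.strip() for term in search_terms if term.strip()]
    location = location.strip()
    if not location:
        raise ValueError("exactly one location is required")
    if not terms:
        # A location alone would scrape every place in the area.
        raise ValueError("at least one search term is required")
    for term in terms:
        # Keep the location out of the search-term field.
        if location.lower() in term.lower():
            raise ValueError(f"search term {term!r} already contains the location")
    # Key names are assumptions; check the Actor's input schema.
    return {"searchStringsArray": terms, "locationQuery": location}


run_input = build_search_input(["restaurants", "cafes"], "New York")
print(run_input)
```

A term such as "restaurants in New York" is rejected here on purpose, since mixing the two fields is what keeps the 120-place limit in effect.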

Where + What to scrape (Search term + extended address)

- This method is good for scraping areas by zip code or smaller cities and towns.

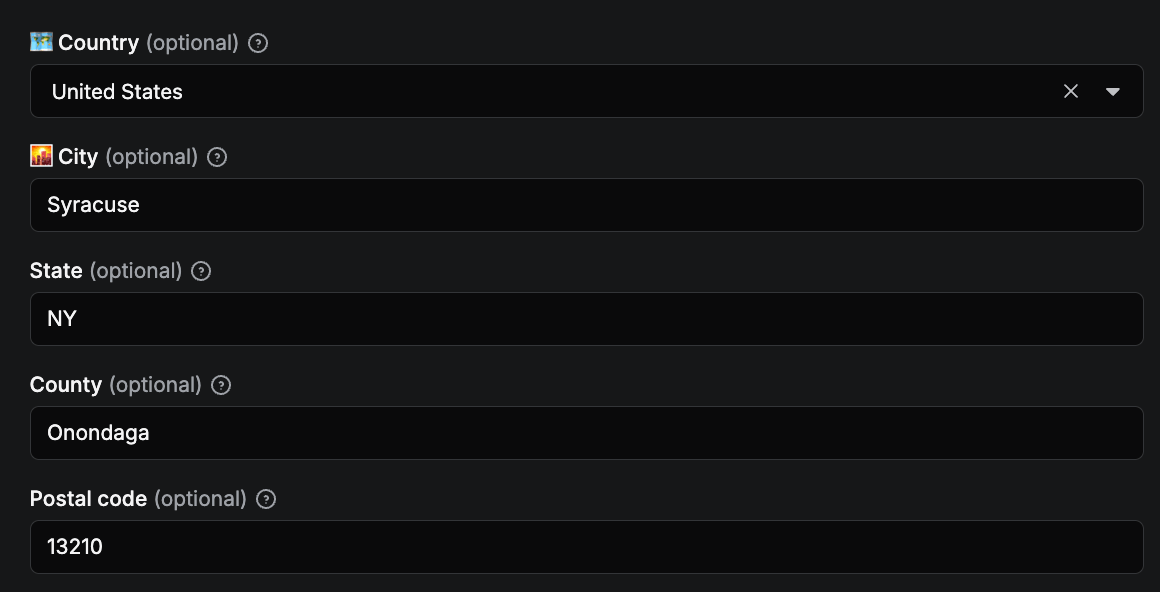

For this method, you define the location with the Country, State, US County if applicable, City & Postal code of the area that interests you. In our case, we’re scraping all restaurants in Syracuse by filling in the city's details. The more details you provide, the better the results. And don’t forget to fill out the Search term as well.

For the most accurate search, fill out as many fields as possible in this section. The scraper will create map grids and adjust the zoom level in the background; no need to set that up. Just hit the Start button.
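
For reference, here is how the Syracuse example might look as a run input built in Python. Treat every key name (`countryCode`, `state`, `county`, `city`, `postalCode`, `searchStringsArray`) as an assumption that mirrors the form fields, and the helper itself as hypothetical.

```python
def build_address_input(search_term, country_code, state="", county="", city="", postal_code=""):
    """Build a run input from a search term and whichever address fields are known."""
    if not search_term.strip():
        raise ValueError("a search term is required")
    if not country_code.strip():
        raise ValueError("a country is required")
    fields = {
        "countryCode": country_code,
        "state": state,
        "county": county,
        "city": city,
        "postalCode": postal_code,
    }
    # Drop empty fields so only the details you actually know are sent.
    location = {key: value.strip() for key, value in fields.items() if value.strip()}
    return {"searchStringsArray": [search_term.strip()], **location}


syracuse = build_address_input(
    "restaurants",
    country_code="us",
    state="New York",
    county="Onondaga County",
    city="Syracuse",
    postal_code="13202",
)
print(syracuse)
```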

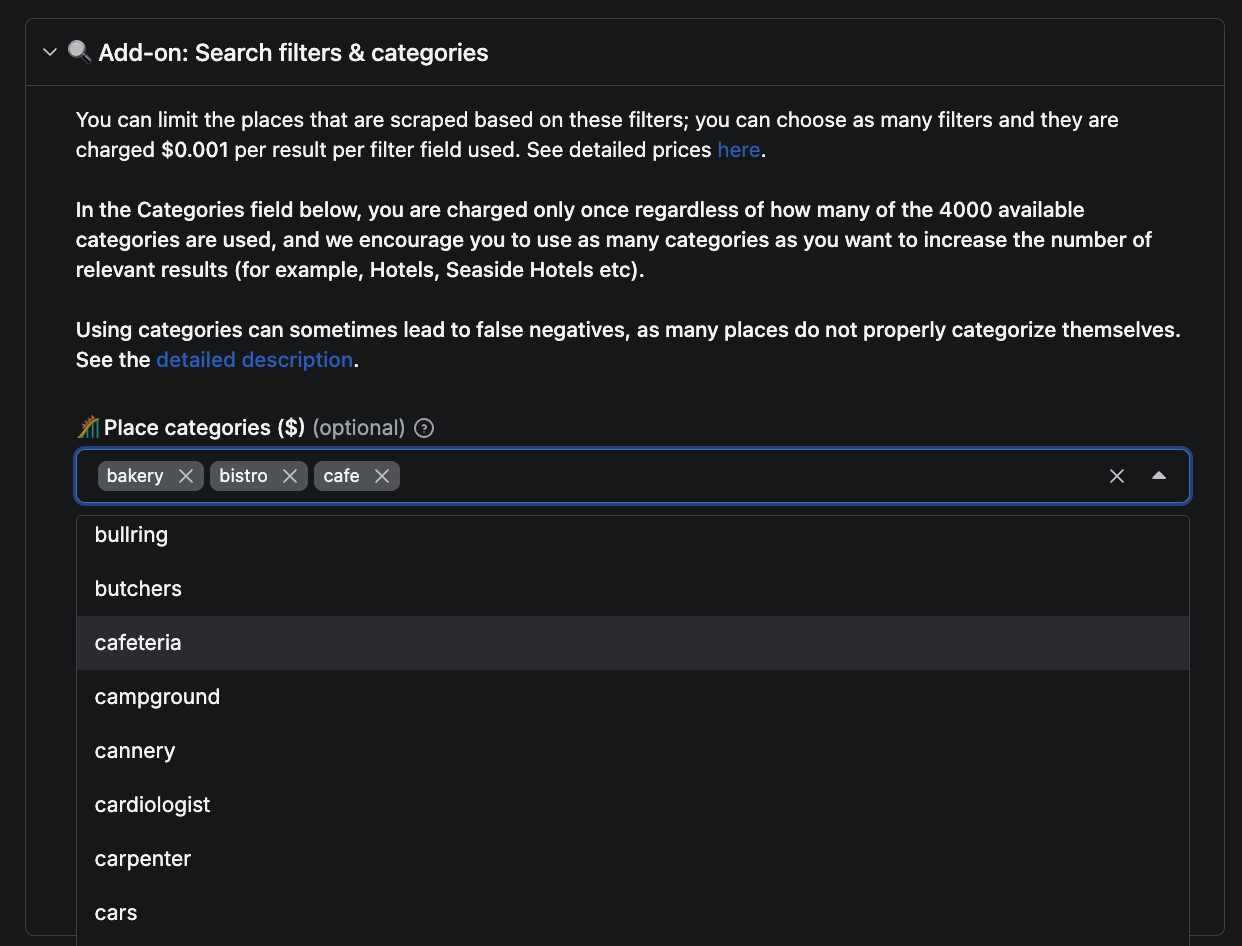

You can also replace search terms with Categories - the Actor's Categories field accepts Google Maps' built-in category names directly. Chances are, Google Maps already has your search term listed in its collection of 4,000+ categories.

How Google Maps Scraper gets results

Creating map grids

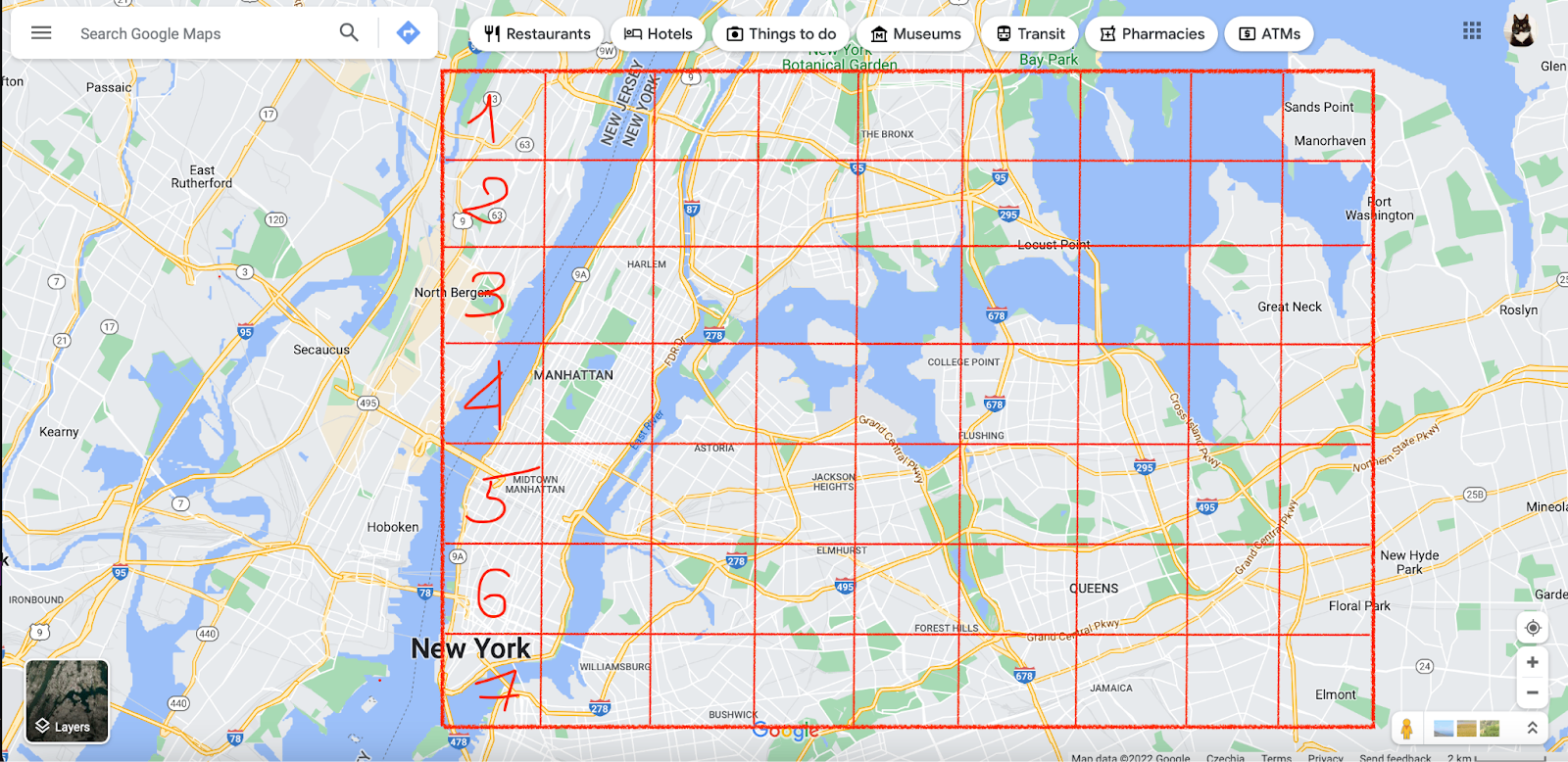

In order to get more listings, Google Maps Scraper splits a large map into many smaller maps. It will then search or scrape each small map separately and combine the end results later.
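
To make the idea concrete, here is a minimal Python sketch of splitting a bounding box into equal cells. It is not the scraper's actual algorithm, which the article doesn't spell out. The function name and the rough New York City bounding box are illustrative assumptions.

```python
def split_into_grid(south, west, north, east, rows, cols):
    """Split a bounding box into rows x cols cells of (south, west, north, east)."""
    if rows < 1 or cols < 1:
        raise ValueError("rows and cols must be at least 1")
    if not (south < north and west < east):
        raise ValueError("expected south < north and west < east")
    lat_step = (north - south) / rows
    lng_step = (east - west) / cols
    cells = []
    for r in range(rows):
        for c in range(cols):
            cells.append((
                south + r * lat_step,
                west + c * lng_step,
                south + (r + 1) * lat_step,
                west + (c + 1) * lng_step,
            ))
    return cells


# Rough bounding box around New York City (illustrative values).
cells = split_into_grid(40.49, -74.26, 40.92, -73.70, rows=4, cols=4)
print(len(cells), "mini-maps")
```

Each cell would then be searched on its own, so the cap applies per cell instead of to the whole city.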

Automatic zooming on Google Maps

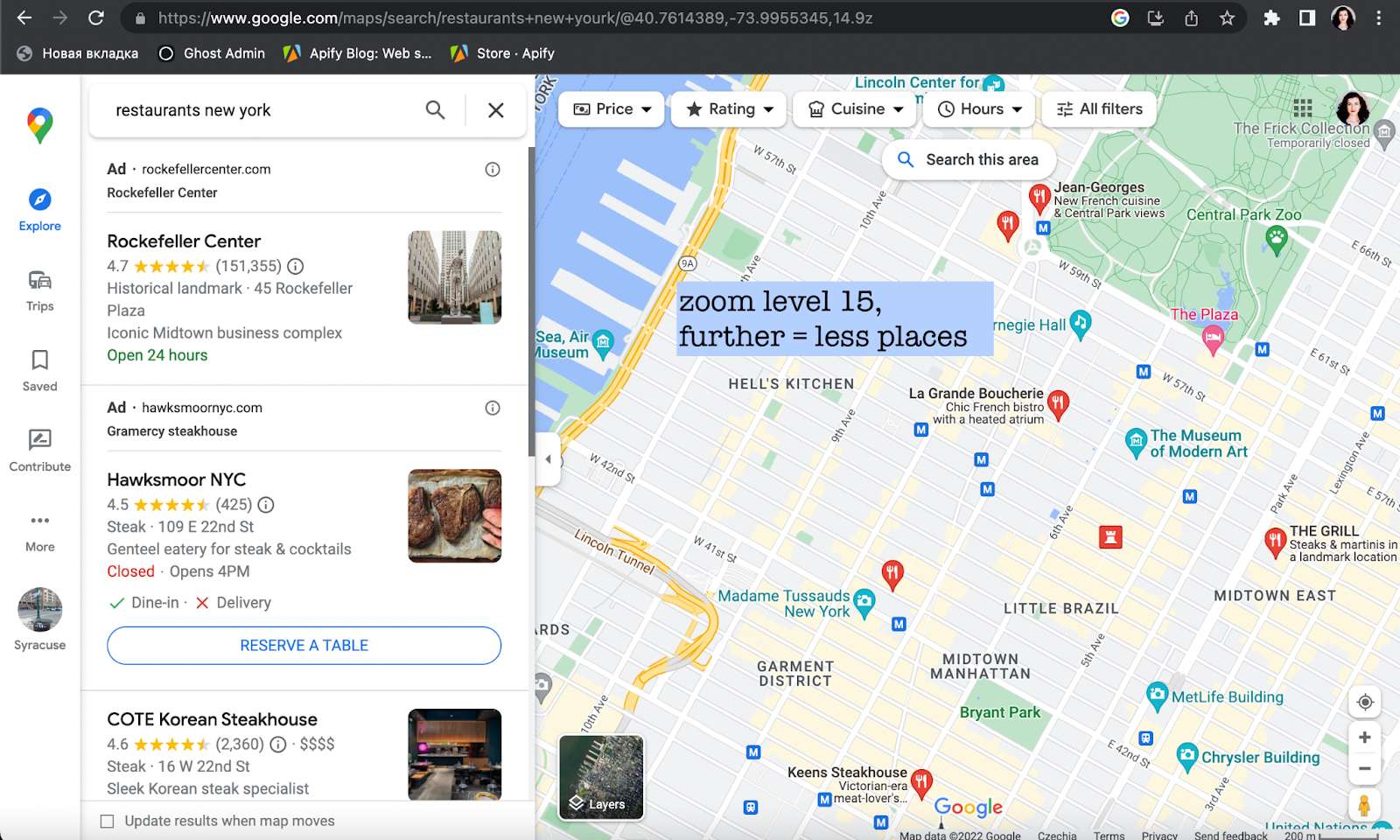

If you zoom out from the map far enough, you won’t see many places on the map, only city and state names. The further you zoom out, the fewer places (represented by Google Maps pins 📍) you can see, and the lower the zoom level is.

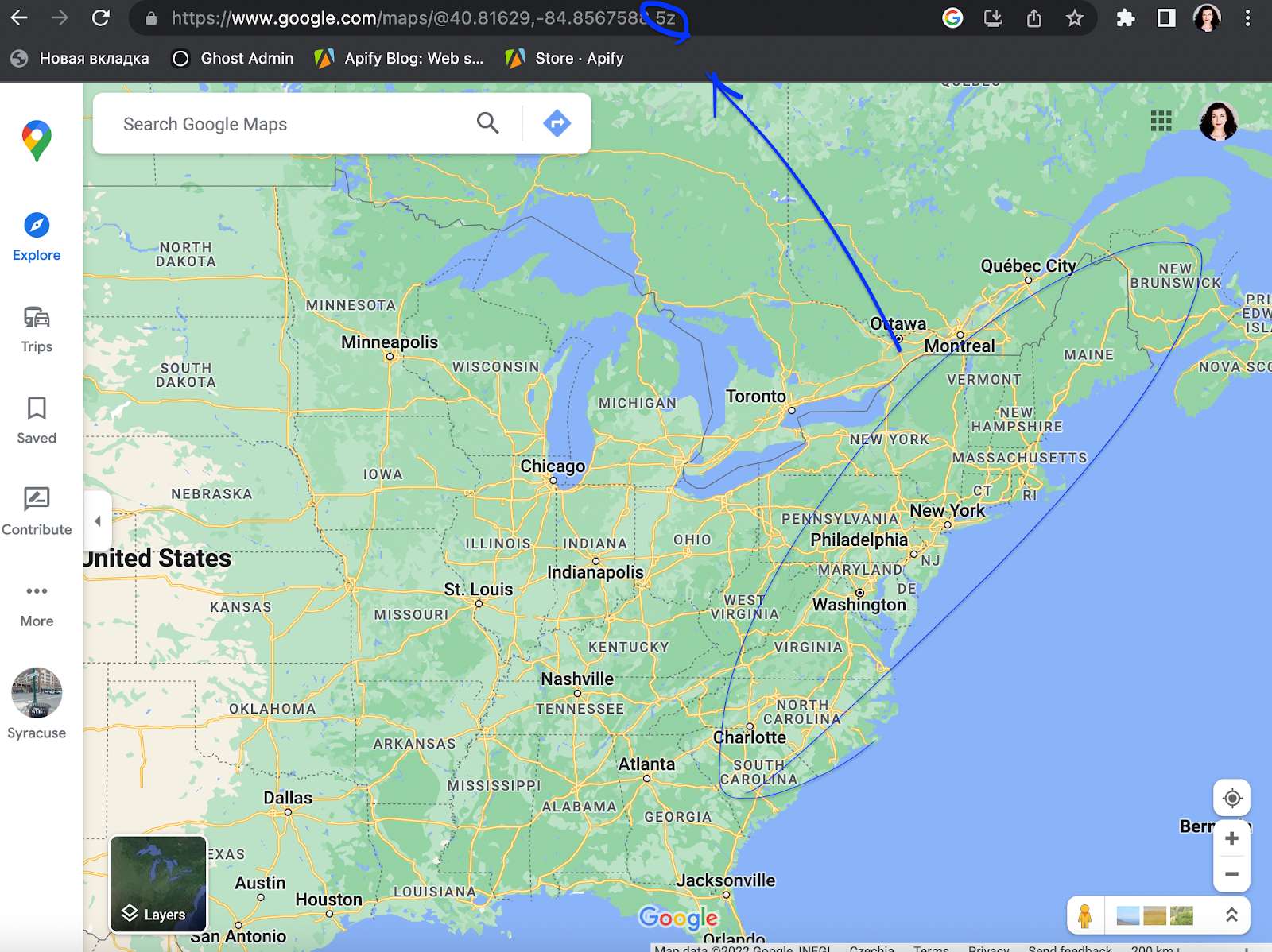

Zoom 0 on Google Maps shows you the whole planet, while zoom 22 shows a tiny street. You can always check the zoom level in the URL. For instance, to display the whole East Coast, Google Maps uses 5 for the zoom level (as seen in the URL). The more you zoom in, the higher the level is, the more detailed the map is, and the more places it displays.
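
To put numbers on zoom levels, the sketch below uses the standard Web Mercator tiling convention, where the world is 256 pixels wide at zoom 0 and doubles in width with each level. That convention is our assumption here, not something this article states about Google Maps, and real screens with high pixel density will differ.

```python
import math

EARTH_CIRCUMFERENCE_M = 40_075_016.686  # equatorial circumference in meters
TILE_SIZE_PX = 256  # assumed width of the whole world at zoom 0


def meters_per_pixel(zoom, latitude_deg=0.0):
    """Approximate ground distance covered by one pixel at a given zoom level."""
    world_width_px = TILE_SIZE_PX * 2 ** zoom
    return EARTH_CIRCUMFERENCE_M * math.cos(math.radians(latitude_deg)) / world_width_px


# Compare a few zoom levels at roughly New York City's latitude.
for zoom in (5, 15, 16):
    print(zoom, round(meters_per_pixel(zoom, latitude_deg=40.7), 2))
```

Under this model, each extra zoom level halves the distance per pixel, so the same screen covers a quarter of the area at zoom 16 compared with zoom 15.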

For our New York City example, compare the number of pins at zoom level 16 (closer) vs. zoom level 15 (further). Can you see how many places are missing in the latter?

Same map, different zoom levels, and different number of results visible

This zoom difference would influence your API results as well because - you’ve guessed it - the 120-place limit applies no matter the zoom level. So how does the scraper deal with this issue?

The scraper will split the NYC map into a number of smaller, more detailed mini-maps. For each map, the zoom level will be adjusted automatically to the level that yields the most results (usually 16), so the mini-maps won’t be empty. This way, the scraper will be able to find and scrape every single place on a small map. It’s important to note that the scraper does allow you to override the standard zoom level and get even more results (at the cost of higher compute unit usage).

Finally, the scraper sums up and patches together the results of all mini-maps. That way we can get accurate map data from the whole city of New York, which makes this method the best for large-scale scraping.

However, this method’s advantage comes at a cost: while being thorough, map grids can be quite slow and consume a lot of credits. There might be very few restaurants on the mini-maps, far fewer than 120, so why does it take so long to scrape them? The reason is that every small map is a separate page that the scraper has to open. Each one is an extra request for the scraper to make, hence extra work, hence extra consumed credits.

To recap, the whole process looks like this:

1. You provide the scraper with a location, either as coordinates or as a country, state, county, city, or postal code.

2. The scraper splits this map into smaller maps.

3. It also automatically adjusts the zoom level on each mini-map to reach the maximum density of pins.

4. The scraper extracts the results from each mini-map.

5. The scraper combines results from all mini-maps.
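
The steps above can be sketched as a small simulation. It uses randomly generated places and a stand-in search function that never returns more than 120 results, so it only illustrates why splitting helps; it does not call Google Maps or the scraper, and it leaves out the zoom adjustment in step 3. All names are hypothetical.

```python
import random

RESULT_CAP = 120  # the per-search cap described above


def capped_search(places, cell):
    """Stand-in for one map search: at most RESULT_CAP places inside the cell."""
    south, west, north, east = cell
    hits = [p for p in places if south <= p["lat"] < north and west <= p["lng"] < east]
    return hits[:RESULT_CAP]


def grid_cells(bbox, n):
    """Split a bounding box into n x n mini-maps."""
    south, west, north, east = bbox
    lat_step = (north - south) / n
    lng_step = (east - west) / n
    return [
        (south + r * lat_step, west + c * lng_step,
         south + (r + 1) * lat_step, west + (c + 1) * lng_step)
        for r in range(n)
        for c in range(n)
    ]


def scrape_with_grid(places, bbox, n):
    """Search every mini-map separately and merge the results by place id."""
    found = {}
    for cell in grid_cells(bbox, n):
        for place in capped_search(places, cell):
            found[place["id"]] = place  # the dict removes duplicates
    return list(found.values())


random.seed(0)
bbox = (0.0, 0.0, 1.0, 1.0)
places = [{"id": i, "lat": random.random(), "lng": random.random()} for i in range(2000)]

single = capped_search(places, bbox)  # one search over the whole area
gridded = scrape_with_grid(places, bbox, 8)  # 64 separate searches
print(len(single), len(gridded))
```

The single search stops at the cap, while the gridded run recovers far more places at the price of 64 requests instead of one, which is the trade-off described above.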

Start scraping leads

If you want to collect high-quality business data for lead gen, market research, or competitive analysis, and you’re tired of hitting Google Maps' hard limits, you can use Google Maps Scraper to:

- uncover thousands of relevant places in your target geography.

- filter for the exact type of business you need.

- export contact data and integrate it into your sales / outreach stack.
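
As a small illustration of that last step, here is a Python sketch that turns scraped places into CSV text for a CRM or outreach tool. The field names (`title`, `phone`, `website`) and the sample records are made up for the example, so map them to whatever fields your export actually contains.

```python
import csv
import io


def leads_to_csv(places, fields=("title", "phone", "website")):
    """Render selected fields of each place as CSV text, leaving gaps empty."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(fields), lineterminator="\n")
    writer.writeheader()
    for place in places:
        # Keep only the chosen fields; missing values become empty cells.
        writer.writerow({field: place.get(field) or "" for field in fields})
    return buffer.getvalue()


# Made-up sample records standing in for scraped results.
sample = [
    {"title": "Cafe A", "phone": "+1 555 0100", "website": "https://example.com", "rating": 4.5},
    {"title": "Bar B"},
]
csv_text = leads_to_csv(sample)
print(csv_text)
```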

Google Maps Scraper is one of 30,000+ Actors on Apify Store, the largest marketplace of tools for AI. Use it to pull more than contact information: reviews, images, opening hours, detailed characteristics, and popular times. Or browse the marketplace if you need a different tool entirely.